A successful AI transformation starts with a strong security foundation. With a rapid increase in AI development and adoption, organizations need visibility into their emerging AI apps and tools. Microsoft Security provides threat protection, posture management, data security, compliance, and governance to secure the AI applications that you build and use. These capabilities can also help enterprises secure and govern AI apps built with the DeepSeek R1 model and gain visibility and control over the use of the separate DeepSeek consumer app.

Secure and govern AI apps built with the DeepSeek R1 model on Azure AI Foundry and GitHub

Develop with trustworthy AI

Last week, we announced DeepSeek R1's availability on Azure AI Foundry and GitHub, joining a diverse portfolio of more than 1,800 models.

Customers today are building production-ready AI applications with Azure AI Foundry while accounting for their varying security, safety, and privacy requirements. Like other models offered in Azure AI Foundry, DeepSeek R1 has undergone rigorous red teaming and safety evaluations, including automated assessments of model behavior and extensive security reviews to mitigate potential risks. Microsoft's hosting safeguards for AI models are designed to keep customer data within Azure's secure boundaries.

With Azure AI Content Safety, built-in content filtering is available by default to help detect and block malicious, harmful, or ungrounded content, with opt-out options for flexibility. Additionally, the safety evaluation system allows customers to efficiently test their applications before deployment. These safeguards help Azure AI Foundry provide a secure, compliant, and responsible environment for enterprises to confidently build and deploy AI solutions. See Azure AI Foundry and GitHub for more details.

Start with Security Posture Management

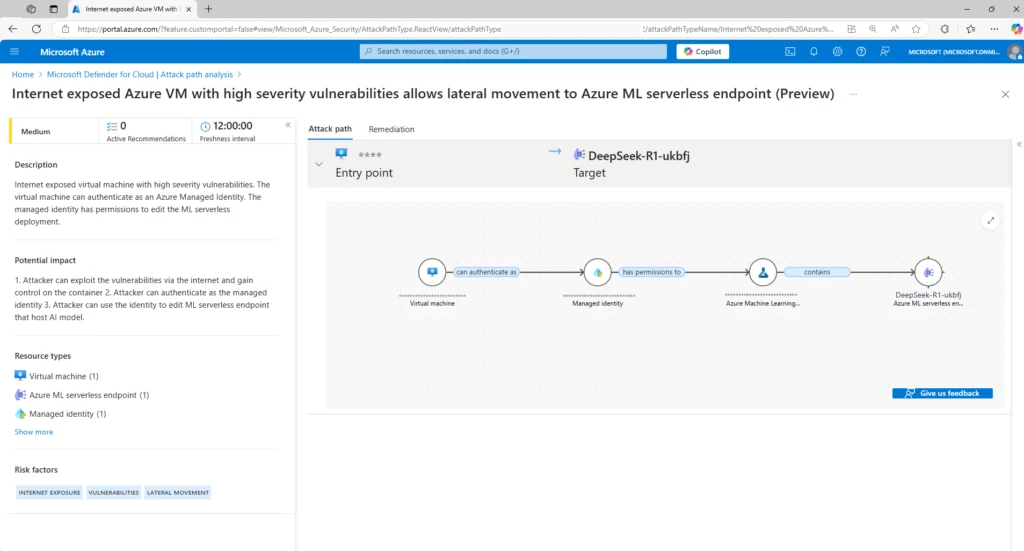

AI workloads introduce new cyberattack surfaces and vulnerabilities, especially when developers leverage open-source resources. Therefore, it's critical to start with security posture management to discover all AI inventories, such as models, orchestrators, and grounding data sources, along with the direct and indirect risks around these components. When developers build AI workloads with DeepSeek R1 or other AI models, the AI security posture management capabilities in Microsoft Defender for Cloud can help security teams gain visibility into AI workloads, discover AI cyberattack surfaces and vulnerabilities, detect cyberattack paths that could be exploited by bad actors, and get recommendations to proactively strengthen their security posture against cyberthreats.

By mapping out AI workloads and synthesizing security insights such as identity risks, sensitive data, and internet exposure, Defender for Cloud continuously surfaces contextualized security issues and suggests risk-based security recommendations tailored to prioritize critical gaps across your AI workloads. Relevant security recommendations also appear within the Azure AI resource itself in the Azure portal. This gives developers and workload owners direct access to recommendations and helps them remediate cyberthreats faster.

Safeguard DeepSeek R1 AI workloads with cyberthreat protection

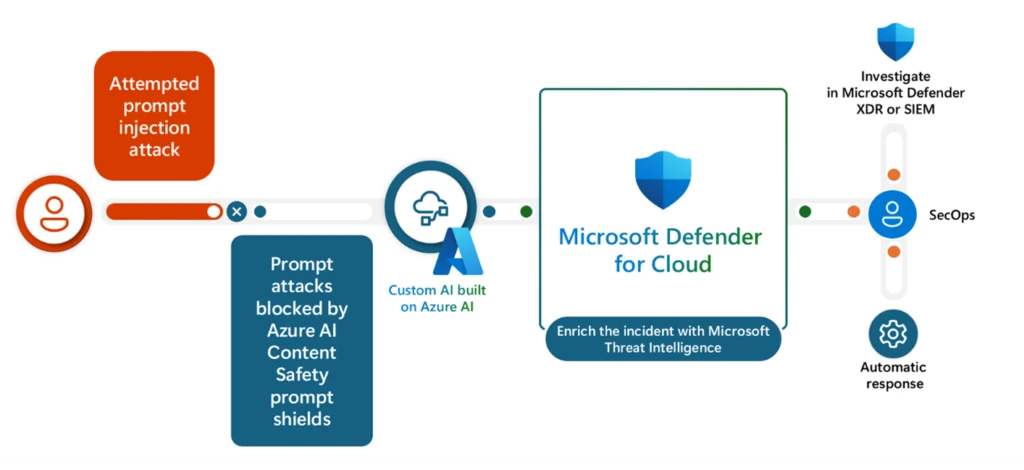

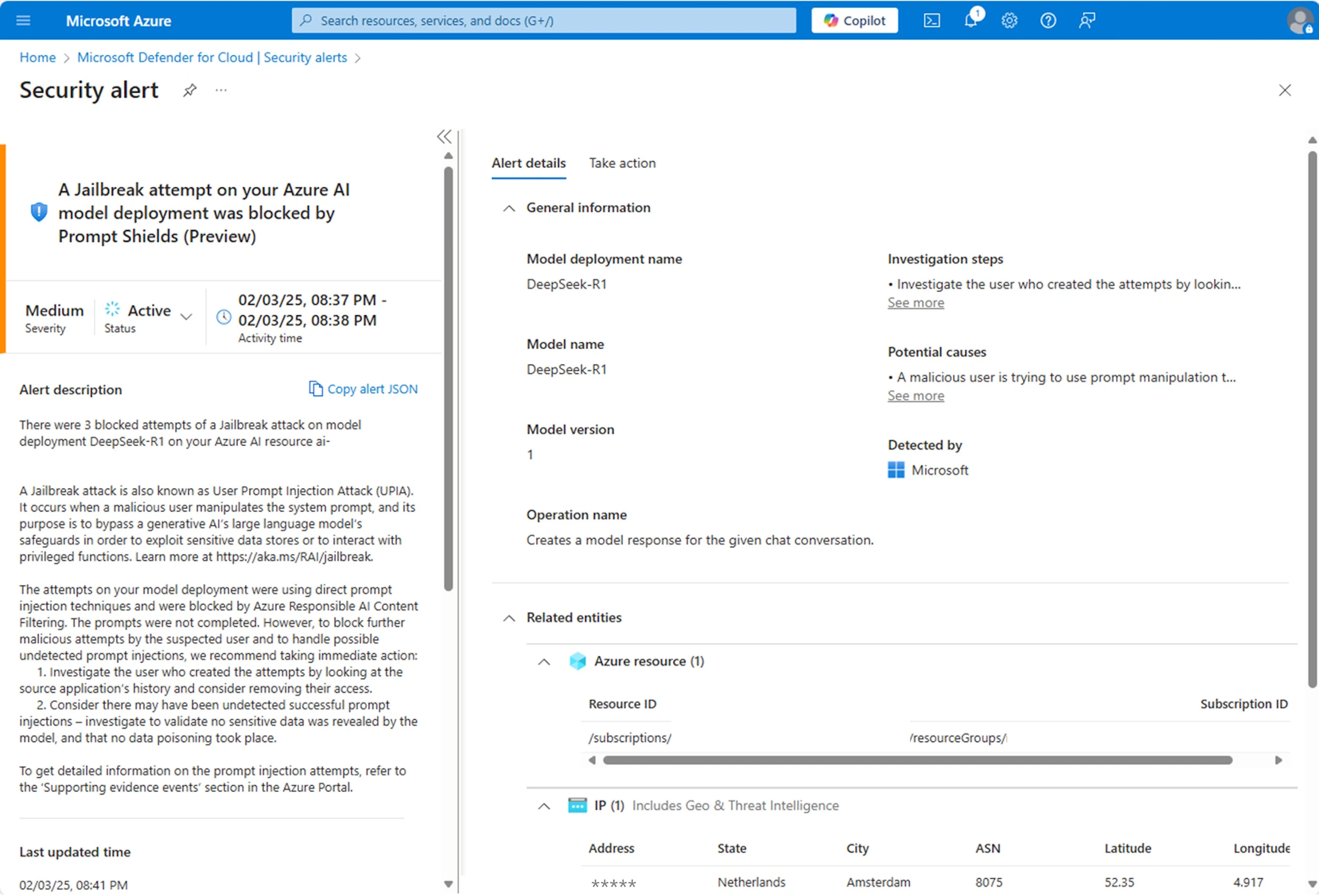

While a strong security posture reduces the risk of cyberattacks, the complex and dynamic nature of AI also requires active monitoring at runtime. No AI model is exempt from malicious activity, and any model can be vulnerable to prompt injection cyberattacks and other cyberthreats. Monitoring the latest models is critical to ensuring your AI applications are protected.

Integrated with Azure AI Foundry, Defender for Cloud continuously monitors your DeepSeek AI applications for unusual and harmful activity, correlates findings, and enriches security alerts with supporting evidence. This provides your security operations center (SOC) analysts with alerts on active cyberthreats such as jailbreak cyberattacks, credential theft, and sensitive data leaks. For example, when a prompt injection cyberattack occurs, Azure AI Content Safety prompt shields can block it in real time. The alert is then sent to Microsoft Defender for Cloud, where the incident is enriched with Microsoft Threat Intelligence, helping SOC analysts understand user behaviors with visibility into supporting evidence such as IP address, model deployment details, and the suspicious user prompts that triggered the alert.

Additionally, these alerts integrate with Microsoft Defender XDR, allowing security teams to centralize AI workload alerts into correlated incidents and understand the full scope of a cyberattack, including malicious activities related to their generative AI applications.
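
Prompt shields run as part of the platform, but it can help to see what the underlying check looks like. Below is a minimal Python sketch against the Azure AI Content Safety Prompt Shields REST API that a team might use to screen prompts in its own gateway before they reach a DeepSeek R1 deployment. The path `/contentsafety/text:shieldPrompt`, the `2024-09-01` API version, and the `userPromptAnalysis` / `documentsAnalysis` response fields reflect the public REST reference as we understand it and should be checked against current documentation; the helper names `build_shield_request` and `attack_detected` are hypothetical. The sketch only builds the request, so it can be inspected without network access.

```python
import json
import urllib.request
from typing import List, Optional

# Assumed REST path and API version for Prompt Shields; verify against current Azure docs.
SHIELD_PATH = "/contentsafety/text:shieldPrompt"
API_VERSION = "2024-09-01"


def build_shield_request(endpoint: str, key: str, user_prompt: str,
                         documents: Optional[List[str]] = None) -> urllib.request.Request:
    """Build (but do not send) a Prompt Shields request for a prompt and optional grounding documents."""
    body = {"userPrompt": user_prompt, "documents": documents or []}
    url = f"{endpoint.rstrip('/')}{SHIELD_PATH}?api-version={API_VERSION}"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Ocp-Apim-Subscription-Key": key, "Content-Type": "application/json"},
        method="POST",
    )


def attack_detected(response: dict) -> bool:
    """Return True if the user prompt or any grounding document was flagged as an attack."""
    if response.get("userPromptAnalysis", {}).get("attackDetected", False):
        return True
    return any(doc.get("attackDetected", False)
               for doc in response.get("documentsAnalysis", []))
```

To actually call the service, pass the request to `urllib.request.urlopen` and decode the body with `json.load`; a gateway would then decline to forward the prompt to the model whenever `attack_detected` returns `True`.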

Secure and govern the use of the DeepSeek app

In addition to the DeepSeek R1 model, DeepSeek also provides a consumer app hosted on its local servers, where data collection and cybersecurity practices may not align with your organizational requirements, as is often the case with consumer-focused apps. This underscores the risks organizations face if employees and partners introduce unsanctioned AI apps, leading to potential data leaks and policy violations. Microsoft Security provides capabilities to discover the use of third-party AI applications in your organization, along with controls for protecting and governing their use.

Secure and gain visibility into DeepSeek app usage

Microsoft Defender for Cloud Apps provides ready-to-use risk assessments for more than 850 generative AI apps, and the list of apps is updated continuously as new ones become popular. This means you can discover the use of these generative AI apps in your organization, including the DeepSeek app, assess their security, compliance, and legal risks, and set up controls accordingly. For example, security teams can tag high-risk AI apps as unsanctioned and block users' access to them outright.
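
Defender for Cloud Apps supplies the risk assessments, and the tagging itself happens in the product. To make the triage decision concrete, here is a hypothetical Python sketch of the kind of rule a security team might apply to an exported list of discovered apps. The `DiscoveredApp` type, its 0-to-10 scale where higher means riskier, and the `block_at` threshold are all assumptions for illustration; they are not the Defender for Cloud Apps data model or its scoring scale.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DiscoveredApp:
    """Hypothetical record for a generative AI app seen in discovery data."""
    name: str
    risk: int   # hypothetical scale: 0 (lowest risk) to 10 (highest risk)
    users: int  # number of distinct users observed using the app


def triage(apps: List[DiscoveredApp], block_at: int = 7) -> Dict[str, List[str]]:
    """Split apps into candidates to tag as unsanctioned and apps to keep monitoring.

    High-risk apps are ordered by user count, most-used first, so the apps
    with the widest exposure are reviewed first.
    """
    risky = [a for a in apps if a.risk >= block_at]
    risky.sort(key=lambda a: a.users, reverse=True)
    monitor = [a.name for a in apps if a.risk < block_at]
    return {"unsanctioned": [a.name for a in risky], "monitor": monitor}
```

Ordering by user count is one reasonable design choice among several; a team could just as well weight by the sensitivity of the data each app is likely to receive.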

Comprehensive data security

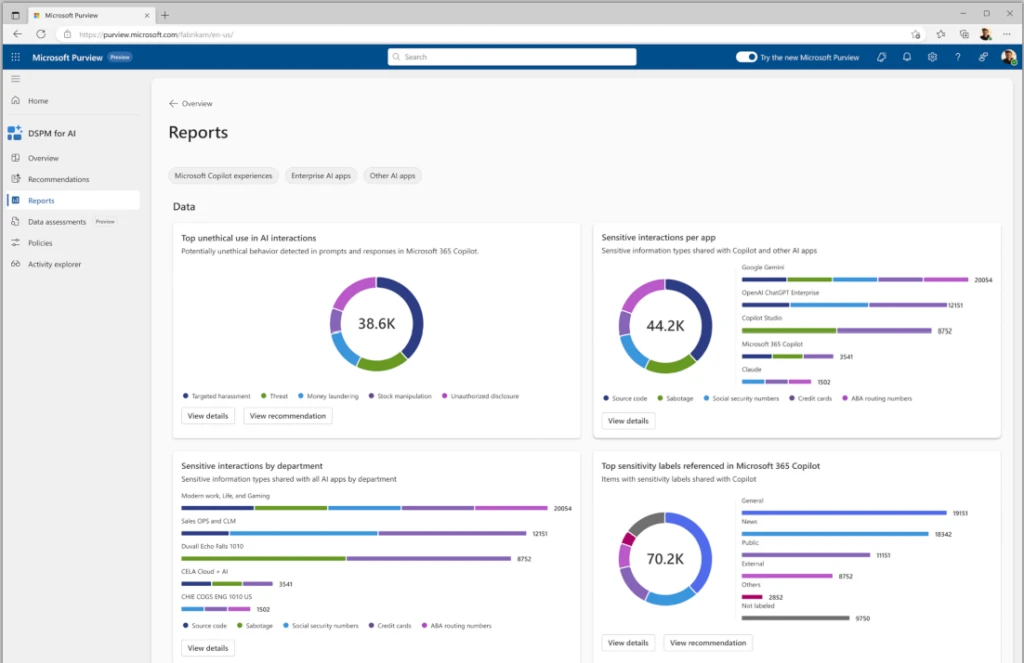

In addition, Microsoft Purview Data Security Posture Management (DSPM) for AI provides visibility into data security and compliance risks, such as sensitive data in user prompts and non-compliant usage, and recommends controls to mitigate those risks. For example, the reports in DSPM for AI can offer insights into the types of sensitive data being pasted into generative AI consumer apps, including the DeepSeek consumer app, so data security teams can create and fine-tune their data security policies to protect that data and prevent data leaks.

Prevent sensitive data leaks and exfiltration

Leakage of organizational data is among the top concerns security leaders have about AI usage, which highlights the importance of implementing controls that prevent users from sharing sensitive information with external third-party AI applications.

Microsoft Purview Data Loss Prevention (DLP) lets you prevent users from pasting sensitive data, or uploading files containing sensitive content, into generative AI apps from supported browsers. Your DLP policy can also adapt to insider risk levels, applying stronger restrictions to users categorized as 'elevated risk' and less stringent restrictions to those categorized as 'low risk'. For example, elevated-risk users are restricted from pasting sensitive data into AI applications, while low-risk users can keep working uninterrupted. With these capabilities, you can safeguard your sensitive data from the risks of using external third-party AI applications. Security admins can then investigate these data security risks and perform insider risk investigations within Purview. The same data security risks are also surfaced in Defender XDR for holistic investigations.
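
Purview DLP policies are configured in the product rather than written as code. Purely to illustrate the adaptive logic described above, here is a hypothetical Python sketch of the decision such a policy makes when a user pastes content into a generative AI app. The `RiskLevel` and `Action` enums, the `paste_decision` function, and the choice to audit (rather than silently allow) sensitive pastes by low-risk users are our assumptions, not Purview's policy schema.

```python
from enum import Enum


class RiskLevel(Enum):
    """Hypothetical insider risk levels, mirroring the two named in this post."""
    LOW = "low"
    ELEVATED = "elevated"


class Action(Enum):
    ALLOW = "allow"  # let the paste or upload through
    AUDIT = "audit"  # let it through, but record the event for later investigation
    BLOCK = "block"  # stop the paste or upload


def paste_decision(risk: RiskLevel, has_sensitive_data: bool) -> Action:
    """Decide what happens when a user pastes content into a generative AI app."""
    if not has_sensitive_data:
        return Action.ALLOW
    if risk is RiskLevel.ELEVATED:
        # Stronger restriction for users categorized as elevated risk.
        return Action.BLOCK
    # Low-risk users keep working; the event is still logged for admins.
    return Action.AUDIT
```

Returning `AUDIT` instead of `ALLOW` for low-risk users keeps them productive while still giving admins the evidence they need for the investigations mentioned above.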

This is a quick overview of some of the capabilities that can help you secure and govern the AI apps you build on Azure AI Foundry and GitHub, as well as the AI apps used by people in your organization. We hope you find it useful!

To learn more and get started with securing your AI apps, take a look at the additional resources below:

Learn more with Microsoft Security

To learn more about Microsoft Security solutions, visit our website. Bookmark the Security blog to keep up with our expert coverage on security matters. Also, follow us on LinkedIn (Microsoft Security) and X (@MSFTSecurity) for the latest news and updates on cybersecurity.