Introduction

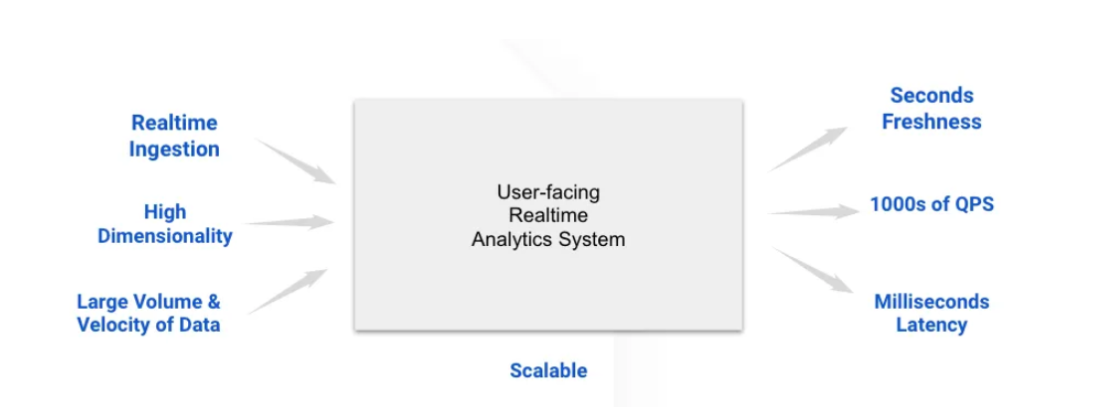

In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help maintain high availability and deliver a seamless user experience. Apache Pinot, an open-source OLAP datastore, offers the ability to handle real-time data ingestion and low-latency querying, making it a suitable solution for monitoring application performance at scale. In this article, we’ll explore how to implement a real-time monitoring system using Apache Pinot, with a focus on setting up Kafka for data streaming, defining Pinot schemas and tables, querying performance data with Python, and visualizing metrics with tools like Grafana.

Learning Objectives

- Learn how Apache Pinot can be used to build a real-time monitoring system for tracking application performance metrics in a distributed environment.

- Learn how to write and execute SQL queries in Python to retrieve and analyze real-time performance metrics from Apache Pinot.

- Gain hands-on experience in setting up Apache Pinot, defining schemas, and configuring tables to ingest and store application metrics data in real time from Kafka.

- Understand how to integrate Apache Pinot with visualization tools like Grafana or Apache Superset.

This article was published as a part of the Data Science Blogathon.

Use Case: Real-time Application Performance Monitoring

Let’s explore a scenario where we’re managing a distributed application serving millions of users across multiple regions. To maintain optimal performance, we need to monitor various performance metrics:

- Response Times: How quickly our application responds to user requests.

- Error Rates: The frequency of errors in our application.

- CPU and Memory Usage: The resources our application is consuming.

We deploy Apache Pinot to create a real-time monitoring system that ingests, stores, and queries performance data, enabling quick detection of and response to issues.

System Architecture

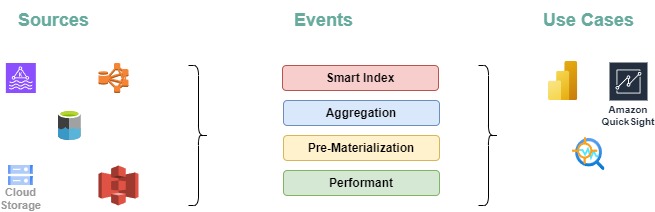

- Data Sources:

  - Metrics and logs are collected from different application services.

  - These logs are streamed to Apache Kafka for real-time ingestion.

- Data Ingestion:

  - Apache Pinot ingests this data directly from Kafka topics, providing real-time processing with minimal delay.

  - Pinot stores the data in a columnar format, optimized for fast querying and efficient storage.

- Querying:

  - Pinot acts as the query engine, allowing you to run complex queries against real-time data to gain insights into application performance.

  - Pinot’s distributed architecture ensures that queries are executed quickly, even as the volume of data grows.

- Visualization:

  - The results from Pinot queries can be visualized in real time using tools like Grafana or Apache Superset, offering dynamic dashboards for monitoring KPIs.

  - Visualization is key to making the data actionable, allowing you to monitor KPIs, set alerts, and respond to issues in real time.

Setting Up Kafka for Real-Time Data Streaming

The first step is to set up Apache Kafka to handle real-time streaming of our application’s logs and metrics. Kafka is a distributed streaming platform that allows us to publish and subscribe to streams of records in real time. Each microservice in our application can produce log messages or metrics to Kafka topics, which Pinot will later consume.

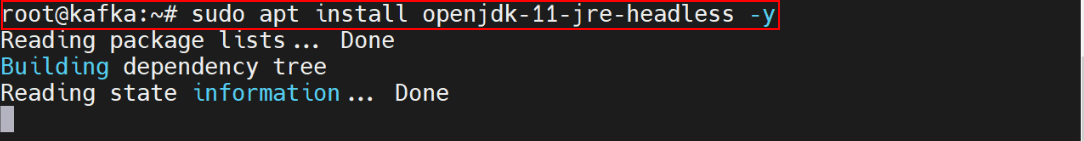

Install Java

To run Kafka, we first install Java on our system:

sudo apt install openjdk-11-jre-headless -y

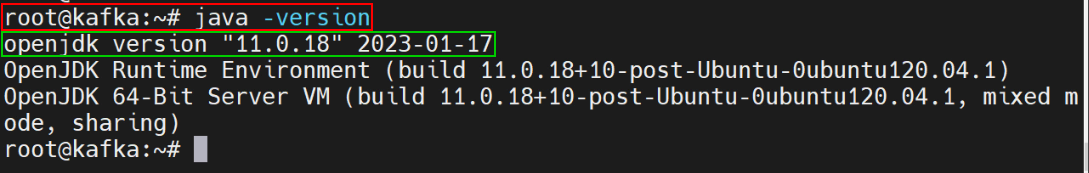

Verify the Java Version

java -version

Downloading Kafka

wget https://downloads.apache.org/kafka/3.4.0/kafka_2.13-3.4.0.tgz

sudo mkdir /usr/local/kafka-server

sudo tar xzf kafka_2.13-3.4.0.tgz

Also, we need to move the extracted files to the folder given below:

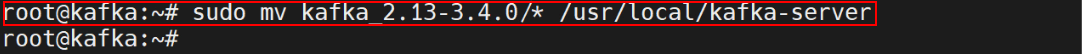

sudo mv kafka_2.13-3.4.0/* /usr/local/kafka-server

Reload the Configuration Files with the Command

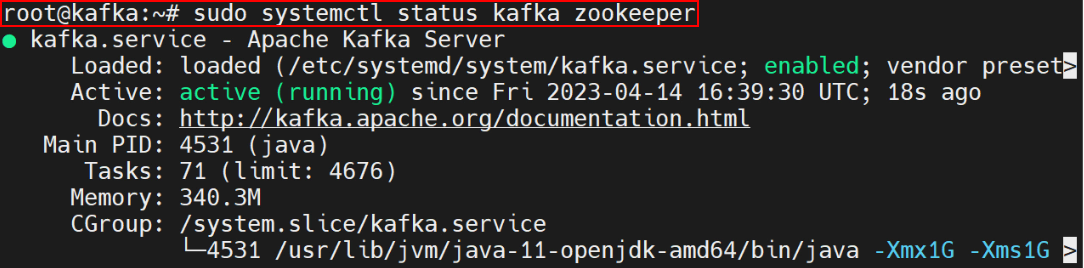

sudo systemctl daemon-reload

Starting Kafka

Assuming Kafka and Zookeeper are already installed, Kafka can be started using the commands below:

# Start Zookeeper

zookeeper-server-start.sh config/zookeeper.properties

# Start Kafka server

kafka-server-start.sh config/server.properties

Creating Kafka Topics

Next, we create a Kafka topic for our application metrics. Topics are the channels through which data flows in Kafka. Here, we create a topic named app-metrics with 3 partitions and a replication factor of 1. The number of partitions distributes the data across Kafka brokers, while the replication factor controls the level of redundancy by determining how many copies of the data exist.

kafka-topics.sh --create --topic app-metrics --bootstrap-server localhost:9092 --partitions 3 --replication-factor 1

Publishing Data to Kafka

Our application can publish metrics to the Kafka topic in real time. This script simulates sending application metrics to the Kafka topic every two seconds. The metrics include details such as service name, endpoint, status code, response time, CPU usage, memory usage, and timestamp.

    from confluent_kafka import Producer
    import json
    import time

    # Kafka producer configuration
    conf = {'bootstrap.servers': "localhost:9092"}
    producer = Producer(**conf)

    # Function to send a message to Kafka
    def send_metrics():
        metrics = {
            "service_name": "auth-service",
            "endpoint": "/login",
            "status_code": 200,
            "response_time_ms": 123.45,
            "cpu_usage": 55.2,
            "memory_usage": 1024.7,
            "timestamp": int(time.time() * 1000)  # epoch milliseconds
        }
        # Serialize the record as JSON and publish it to the app-metrics topic
        producer.produce('app-metrics', value=json.dumps(metrics))
        # Wait until the message has been delivered
        producer.flush()

    # Simulate sending metrics every 2 seconds
    while True:
        send_metrics()
        time.sleep(2)
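The script above publishes the same values on every iteration. As a minimal sketch, the record could instead be generated with varying values while keeping the same field names. The service list, endpoints, value ranges, failure ratio, and the `make_metric` name are illustrative assumptions, not part of the original setup:

```python
import json
import random
import time

# Hypothetical service/endpoint pairs, used only for simulation.
SERVICES = {
    "auth-service": ["/login", "/logout"],
    "order-service": ["/orders", "/checkout"],
}


def make_metric(rng: random.Random) -> dict:
    """Build one simulated record with the same keys as the producer script."""
    service = rng.choice(sorted(SERVICES))
    return {
        "service_name": service,
        "endpoint": rng.choice(SERVICES[service]),
        # Assumed ratio: roughly 10% of simulated requests fail.
        "status_code": 500 if rng.random() < 0.1 else 200,
        "response_time_ms": round(rng.uniform(20.0, 400.0), 2),
        "cpu_usage": round(rng.uniform(5.0, 95.0), 1),
        "memory_usage": round(rng.uniform(256.0, 2048.0), 1),
        "timestamp": int(time.time() * 1000),  # epoch milliseconds
    }


rng = random.Random(42)  # fixed seed so repeated runs pick the same values
payload = json.dumps(make_metric(rng))
print(payload)
```

The resulting JSON string can be passed as the `value` argument of `producer.produce` in place of the hard-coded dictionary.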

Defining Pinot Schema and Table Configuration

With Kafka set up and streaming data, the next step is to configure Apache Pinot to ingest and store this data. This involves defining a schema and creating a table in Pinot.

Schema Definition

The schema defines the structure of the data that Pinot will ingest. It specifies the dimensions (attributes) and metrics (measurable quantities) that will be stored, as well as the data types for each field. The field names must match the JSON keys published to Kafka. Create a JSON file named “app_performance_ms_schema.json” with the following content:

    {
      "schemaName": "app_performance_ms",
      "dimensionFieldSpecs": [
        {"name": "service_name", "dataType": "STRING"},
        {"name": "endpoint", "dataType": "STRING"},
        {"name": "status_code", "dataType": "INT"}
      ],
      "metricFieldSpecs": [
        {"name": "response_time_ms", "dataType": "DOUBLE"},
        {"name": "cpu_usage", "dataType": "DOUBLE"},
        {"name": "memory_usage", "dataType": "DOUBLE"}
      ],
      "dateTimeFieldSpecs": [
        {
          "name": "timestamp",
          "dataType": "LONG",
          "format": "1:MILLISECONDS:EPOCH",
          "granularity": "1:MILLISECONDS"
        }
      ]
    }

Table Configuration

The table configuration file tells Pinot how to manage the data, including details on data ingestion from Kafka, indexing strategies, and retention policies.

Create another JSON file named “app_performance_metrics_table.json” with the following content:

    {
      "tableName": "appPerformanceMetrics",
      "tableType": "REALTIME",
      "segmentsConfig": {
        "timeColumnName": "timestamp",
        "schemaName": "app_performance_ms",
        "replication": "1"
      },
      "tableIndexConfig": {
        "loadMode": "MMAP",
        "streamConfigs": {
          "streamType": "kafka",
          "stream.kafka.topic.name": "app-metrics",
          "stream.kafka.broker.list": "localhost:9092",
          "stream.kafka.consumer.type": "lowlevel"
        }
      }
    }

This configuration specifies that the table will ingest data from the app-metrics Kafka topic in real time. It uses the timestamp column as the primary time column and configures indexing to support efficient queries.
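Because the producer, the schema, and the table configuration are written separately, their names can drift apart. The following is a minimal sketch of a pre-deployment check, assuming the schema columns are meant to mirror the producer’s JSON keys and that the stream settings live under `tableIndexConfig.streamConfigs` as in the file above. The helper names `schema_columns` and `find_mismatches` and the trimmed sample inputs are hypothetical:

```python
def schema_columns(schema: dict) -> set:
    """Collect every column name declared in a Pinot schema dictionary."""
    columns = set()
    for section in ("dimensionFieldSpecs", "metricFieldSpecs", "dateTimeFieldSpecs"):
        for spec in schema.get(section, []):
            columns.add(spec["name"])
    return columns


def find_mismatches(schema: dict, table_config: dict, record: dict, topic: str) -> list:
    """Return descriptions of inconsistencies; an empty list means none were found."""
    problems = []
    columns = schema_columns(schema)
    missing = sorted(columns - set(record))
    unknown = sorted(set(record) - columns)
    if missing:
        problems.append(f"schema columns absent from the record: {missing}")
    if unknown:
        problems.append(f"record keys absent from the schema: {unknown}")
    time_column = table_config["segmentsConfig"]["timeColumnName"]
    if time_column not in columns:
        problems.append(f"time column {time_column!r} is not in the schema")
    stream = table_config["tableIndexConfig"]["streamConfigs"]
    if stream["stream.kafka.topic.name"] != topic:
        problems.append("table reads a different topic than the producer writes to")
    return problems


# Illustrative inputs, trimmed to the parts being checked (assumed names).
schema = {
    "dimensionFieldSpecs": [
        {"name": "service_name", "dataType": "STRING"},
        {"name": "status_code", "dataType": "INT"},
    ],
    "metricFieldSpecs": [{"name": "response_time_ms", "dataType": "DOUBLE"}],
    "dateTimeFieldSpecs": [{"name": "timestamp", "dataType": "LONG"}],
}
table_config = {
    "segmentsConfig": {"timeColumnName": "timestamp"},
    "tableIndexConfig": {"streamConfigs": {"stream.kafka.topic.name": "app-metrics"}},
}
record = {"service_name": "auth-service", "status_code": 200,
          "response_time_ms": 123.45, "timestamp": 1700000000000}

print(find_mismatches(schema, table_config, record, topic="app-metrics"))
```

In practice the two dictionaries would be loaded from the JSON files with `json.load`, and the record would be one sample of what the producer sends.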

Deploying the Schema and Table Configuration

Once the schema and table configuration are ready, we can deploy them to Pinot using the following commands:

bin/pinot-admin.sh AddSchema -schemaFile app_performance_ms_schema.json -exec

bin/pinot-admin.sh AddTable -tableConfigFile app_performance_metrics_table.json -schemaFile app_performance_ms_schema.json -exec

After deployment, Apache Pinot will begin ingesting data from the Kafka topic app-metrics and making it available for querying.
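The same deployment can also be scripted over HTTP instead of the admin CLI. The sketch below only builds the two requests and does not send them. It assumes a Pinot controller reachable at `http://localhost:9000` that accepts JSON bodies on `POST /schemas` and `POST /tables`; the address, the stub bodies, and the `build_controller_requests` helper are assumptions for illustration:

```python
import json
import urllib.request


def build_controller_requests(controller_url: str, schema: dict, table_config: dict):
    """Return (schema_request, table_request) for the controller, unsent."""
    def post(path: str, body: dict) -> urllib.request.Request:
        return urllib.request.Request(
            url=controller_url.rstrip("/") + path,
            data=json.dumps(body).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
    # The schema is created first because the table refers to it.
    return post("/schemas", schema), post("/tables", table_config)


schema_req, table_req = build_controller_requests(
    "http://localhost:9000",  # assumed controller address
    {"schemaName": "app_performance_ms"},  # stub; load the real file in practice
    {"tableName": "appPerformanceMetrics", "tableType": "REALTIME"},  # stub
)
print(schema_req.get_method(), schema_req.full_url)
print(table_req.get_method(), table_req.full_url)
```

Passing each request to `urllib.request.urlopen` would submit it, schema first and table second.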

Querying Information to Monitor KPIs

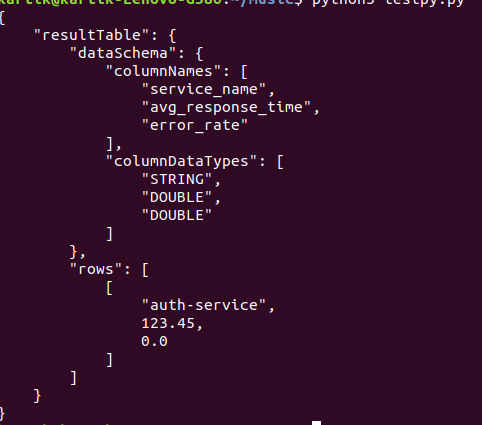

As Pinot ingests information, now you can begin querying it to watch key efficiency indicators (KPIs). Pinot helps SQL-like queries, permitting us to retrieve and analyze information shortly. Right here’s a Python script that queries the common response time and error charge for every service over the previous 5 minutes:

    import requests
    import json

    # Pinot broker URL
    pinot_broker_url = "http://localhost:8099/query/sql"

    # SQL query to get average response time and error rate.
    # "timestamp" is double-quoted because it is a reserved word in Pinot SQL.
    query = """
    SELECT service_name,
           AVG(response_time_ms) AS avg_response_time,
           SUM(CASE WHEN status_code >= 400 THEN 1 ELSE 0 END) / COUNT(*) AS error_rate
    FROM appPerformanceMetrics
    WHERE "timestamp" >= ago('PT5M')
    GROUP BY service_name
    """

    # Execute the query; the broker expects a JSON body of the form {"sql": "..."}
    response = requests.post(pinot_broker_url, json={"sql": query})

    if response.status_code == 200:
        result = response.json()
        print(json.dumps(result, indent=4))
    else:
        print("Query failed with status code:", response.status_code)

This script sends a SQL query to Pinot to calculate the average response time and error rate for each service in the last 5 minutes. These metrics are crucial for understanding the real-time performance of our application.
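The script prints the raw JSON response. As a minimal sketch, the rows can be turned into dictionaries keyed by column name, assuming the broker’s usual response shape with a `resultTable` holding `dataSchema.columnNames` and `rows`, plus an `exceptions` list. The `rows_as_dicts` helper and the sample values are hypothetical:

```python
def rows_as_dicts(response_json: dict) -> list:
    """Convert a broker response's resultTable into a list of row dictionaries."""
    # Query errors can be reported in the body rather than through the HTTP status.
    if response_json.get("exceptions"):
        raise RuntimeError(f"Pinot reported errors: {response_json['exceptions']}")
    table = response_json["resultTable"]
    columns = table["dataSchema"]["columnNames"]
    return [dict(zip(columns, row)) for row in table["rows"]]


# Hand-written sample in the assumed response shape; the values are made up.
sample = {
    "resultTable": {
        "dataSchema": {
            "columnNames": ["service_name", "avg_response_time", "error_rate"],
            "columnDataTypes": ["STRING", "DOUBLE", "DOUBLE"],
        },
        "rows": [["auth-service", 123.45, 0.02], ["order-service", 310.0, 0.15]],
    },
    "exceptions": [],
}

for row in rows_as_dicts(sample):
    print(row["service_name"], row["avg_response_time"], row["error_rate"])
```

With the query script, the same function would be called as `rows_as_dicts(response.json())`.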

Understanding the Query Results

- Average Response Time: Provides insight into how quickly each service is responding to requests. Higher values might indicate performance bottlenecks.

- Error Rate: Shows the proportion of requests that resulted in errors (status codes >= 400). A high error rate could signal problems with the service.
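The two readings above can be turned into a simple health check. This is a minimal sketch: the thresholds (250 ms and 5%) are arbitrary placeholders rather than recommendations, and `ServiceStats` and `find_unhealthy` are hypothetical names:

```python
from dataclasses import dataclass


@dataclass
class ServiceStats:
    service_name: str
    avg_response_time: float  # milliseconds
    error_rate: float         # fraction of requests, between 0 and 1


# Hypothetical thresholds; tune them to the application's own targets.
MAX_AVG_RESPONSE_MS = 250.0
MAX_ERROR_RATE = 0.05


def find_unhealthy(stats: list) -> dict:
    """Map each service that breaches a threshold to the reasons it was flagged."""
    findings = {}
    for s in stats:
        reasons = []
        if s.avg_response_time > MAX_AVG_RESPONSE_MS:
            reasons.append("slow responses")
        if s.error_rate > MAX_ERROR_RATE:
            reasons.append("high error rate")
        if reasons:
            findings[s.service_name] = reasons
    return findings


stats = [
    ServiceStats("auth-service", 123.45, 0.02),
    ServiceStats("order-service", 310.0, 0.15),
]
print(find_unhealthy(stats))
```

Here only `order-service` is flagged, because it exceeds both placeholder thresholds.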

Visualizing the Data: Integrating Pinot with Grafana

Grafana is a popular open-source visualization tool that supports integration with Apache Pinot. By connecting Grafana to Pinot, we can create real-time dashboards that display metrics like response times, error rates, and resource usage. An example dashboard can include the following information:

- Response Times: A line chart with area showing the average response time for each service over the past 24 hours.

- Error Rates: A stacked bar chart highlighting services with high error rates, helping you identify problematic areas quickly.

- Resource Usage: An area chart displaying CPU and memory usage trends across different services.

This visualization setup provides a comprehensive view of our application’s health and performance, enabling us to monitor KPIs continuously and take proactive measures when issues arise.
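A time-series panel such as the response-time chart plots one value per service per time interval. The sketch below shows that per-interval aggregation in plain Python on sample records. It illustrates the idea only and does not describe how Grafana or Pinot compute it; the one-minute bucket size and the `bucketed_averages` name are assumptions:

```python
from collections import defaultdict

BUCKET_MS = 60_000  # assumed bucket width: one minute, in milliseconds


def bucketed_averages(records: list, bucket_ms: int = BUCKET_MS) -> dict:
    """Average response_time_ms per (service_name, bucket start) pair."""
    sums = defaultdict(lambda: [0.0, 0])  # key -> [running total, count]
    for r in records:
        # Round the timestamp down to the start of its bucket.
        bucket_start = r["timestamp"] - r["timestamp"] % bucket_ms
        acc = sums[(r["service_name"], bucket_start)]
        acc[0] += r["response_time_ms"]
        acc[1] += 1
    return {key: total / count for key, (total, count) in sorted(sums.items())}


records = [
    {"service_name": "auth-service", "timestamp": 0, "response_time_ms": 100.0},
    {"service_name": "auth-service", "timestamp": 30_000, "response_time_ms": 200.0},
    {"service_name": "auth-service", "timestamp": 60_000, "response_time_ms": 300.0},
]
for (service, start), avg in bucketed_averages(records).items():
    print(service, start, avg)
```

The first two records fall into the same one-minute bucket and are averaged together, while the third starts a new bucket.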

Advanced Considerations

As our real-time monitoring system with Apache Pinot expands, there are several advanced aspects to manage for maintaining its effectiveness:

- Data Retention and Archiving:

  - Challenge: As your application generates increasing amounts of data, managing storage efficiently becomes crucial to avoid inflated costs and performance slowdowns.

  - Solution: Implementing data retention policies helps manage data volume by archiving or deleting older records that are no longer needed for immediate analysis. Apache Pinot automates these processes through its segment management and data retention mechanisms.

- Scaling Pinot:

  - Challenge: The growing volume of data and query requests can strain a single Pinot instance or cluster setup.

  - Solution: Apache Pinot supports horizontal scaling, enabling you to expand your cluster by adding more nodes. This ensures that the system can handle increased data ingestion and query loads effectively, maintaining performance as your application grows.

- Alerting:

  - Challenge: Detecting and responding to performance issues without automated alerts can be difficult, potentially delaying problem resolution.

  - Solution: Integrate alerting systems to receive notifications when metrics exceed predefined thresholds. You can use tools like Grafana or Prometheus to set up alerts, ensuring you’re promptly informed of any anomalies or issues in your application’s performance.

- Performance Optimization:

  - Challenge: With a growing dataset and complex queries, maintaining efficient query performance can become challenging.

  - Solution: Continuously optimize your schema design, indexing strategies, and query patterns. Use Apache Pinot’s tools to monitor and address performance bottlenecks. Employ partitioning and sharding techniques to better distribute data and queries across the cluster.
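For the retention point above, a retention period is set in the table configuration. This is a minimal sketch that adds one to an existing configuration dictionary, assuming the `retentionTimeUnit` and `retentionTimeValue` keys under `segmentsConfig`. The seven-day value and the `with_retention` helper are illustrative assumptions:

```python
import copy


def with_retention(table_config: dict, days: int) -> dict:
    """Return a copy of the table config with a retention period in days."""
    updated = copy.deepcopy(table_config)  # leave the caller's dictionary untouched
    segments = updated.setdefault("segmentsConfig", {})
    segments["retentionTimeUnit"] = "DAYS"
    segments["retentionTimeValue"] = str(days)
    return updated


base = {
    "tableName": "appPerformanceMetrics",
    "segmentsConfig": {"timeColumnName": "timestamp", "replication": "1"},
}
print(with_retention(base, days=7)["segmentsConfig"])
```

The updated dictionary can then be written back to the table configuration file before it is deployed.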

Conclusion

Effective real-time monitoring is essential for ensuring the performance and reliability of modern applications. Apache Pinot offers a powerful solution for real-time data processing and querying, making it well-suited for comprehensive monitoring systems. By implementing the strategies discussed and considering advanced topics like scaling and alerting, you can build a robust and scalable monitoring system that helps you stay ahead of potential performance issues, ensuring a smooth experience for your users.

Key Takeaways

- Apache Pinot is adept at handling real-time data ingestion and provides low-latency query performance, making it a powerful tool for monitoring application performance metrics. It integrates well with streaming platforms like Kafka, enabling quick analysis of metrics such as response times, error rates, and resource utilization.

- Kafka streams application logs and metrics, which Apache Pinot then ingests. Configuring Kafka topics and linking them with Pinot allows for continuous processing and querying of performance data, ensuring up-to-date insights.

- Properly defining schemas and configuring tables in Apache Pinot is crucial for efficient data management. The schema outlines the data structure and types, while the table configuration controls data ingestion and indexing, supporting effective real-time analysis.

- Apache Pinot supports SQL-like queries for in-depth data analysis. When used with visualization tools such as Grafana or Apache Superset, it enables the creation of dynamic dashboards that provide real-time visibility into application performance, aiding in the swift detection and resolution of issues.

Frequently Asked Questions

A. Apache Pinot is optimized for low-latency querying, making it ideal for scenarios where real-time insights are crucial. Its ability to ingest data from streaming sources like Kafka and handle large-scale, high-throughput data sets allows it to provide up-to-the-minute analytics on application performance metrics.

A. Apache Pinot is designed to ingest real-time data by directly consuming messages from Kafka topics. It supports both low-level and high-level Kafka consumers, allowing Pinot to process and store data with minimal delay, making it available for immediate querying.

A. To set up a real-time monitoring system with Apache Pinot, you need:

- Data Sources: Application logs and metrics streamed to Kafka.

- Apache Pinot: For real-time data ingestion and querying.

- Schema and Table Configuration: Definitions in Pinot for storing and indexing the metrics data.

- Visualization Tools: Tools like Grafana or Apache Superset for creating real-time dashboards.

A. Yes, Apache Pinot supports integration with other data streaming platforms like Apache Pulsar and AWS Kinesis. While this article focuses on Kafka, the same principles apply when using different streaming platforms, though configuration details will differ.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.