In the dynamic world of artificial intelligence and the tremendous growth of Generative AI, developers are constantly seeking innovative ways to extract meaningful insight from text. This blog post walks you through an exciting project that harnesses the power of Google's Gemini AI to create an intelligent English Educator Application. The application analyzes text documents and provides difficult words, medium words, their synonyms, antonyms, and use cases, and it also generates important questions with answers from the text. I believe education is the sector that benefits most from the advancements of Generative AI and LLMs, and that's great!

Learning Objectives

- Integrate Google Gemini AI models into Python-based APIs.

- Understand how to integrate and use the English Educator App API to enhance language learning applications with real-time data and interactive features.

- Learn how to leverage the English Educator App API to build customized educational tools, improving user engagement and optimizing language instruction.

- Implement intelligent text analysis using advanced AI prompting.

- Manage complex AI interactions reliably with error-handling techniques.

This article was published as a part of the Data Science Blogathon.

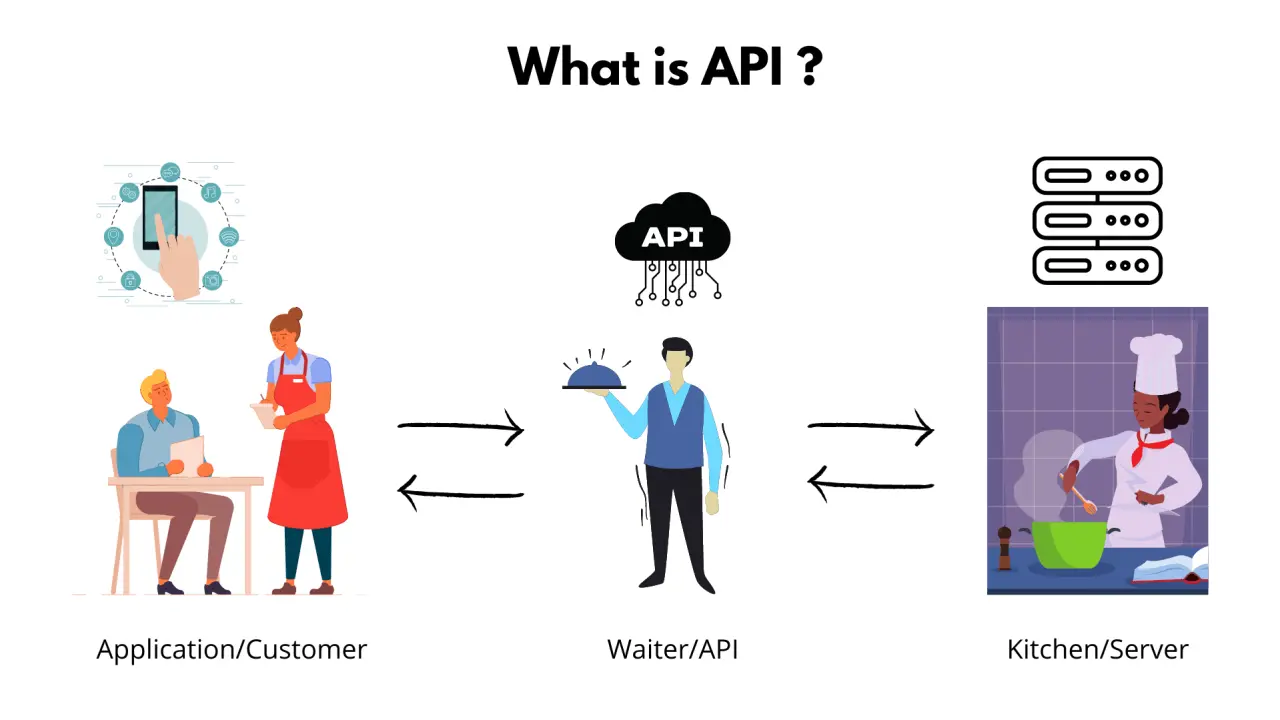

What are APIs?

APIs (Application Programming Interfaces) serve as a digital bridge between different software applications. They are defined as a set of protocols and rules that enable seamless communication, allowing developers to access specific functionality without diving into the complex underlying implementation.

What is a REST API?

REST (Representational State Transfer) is an architectural style for designing networked applications. It uses standard HTTP methods to perform operations on resources.

Important REST methods are:

- GET: Retrieve data from a server.

- POST: Create new resources.

- PUT: Update existing resources completely.

- PATCH: Partially update existing resources.

- DELETE: Remove resources.

Key characteristics include:

- Stateless communication

- Uniform interface

- Client-server architecture

- Cacheable resources

- Layered system design

REST APIs use URLs to identify resources and typically return data in JSON format. They provide a standardized, scalable approach for different applications to communicate over the internet, making them fundamental in modern web and mobile development.
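To make the difference between the HTTP methods concrete, here is a minimal sketch that mimics how a REST endpoint might react to each one. It uses only the standard library and an in-memory dictionary; the `NoteStore` class, its `handle` method, and the "note" resource are hypothetical names chosen for illustration and are not part of the project.

```python
class NoteStore:
    """Hypothetical in-memory resource collection with REST-like semantics."""

    def __init__(self):
        self.notes = {}      # resource id -> resource body
        self.next_id = 1

    def handle(self, method, note_id=None, body=None):
        """Return (status_code, payload) roughly as a REST endpoint would."""
        if method == "POST":                      # create a new resource
            note_id = self.next_id
            self.next_id += 1
            self.notes[note_id] = dict(body)
            return 201, {"id": note_id, **self.notes[note_id]}
        if note_id not in self.notes:             # all other methods need an existing resource
            return 404, {"detail": "not found"}
        if method == "GET":                       # read without modifying
            return 200, self.notes[note_id]
        if method == "PUT":                       # replace the resource completely
            self.notes[note_id] = dict(body)
            return 200, self.notes[note_id]
        if method == "PATCH":                     # change only the supplied fields
            self.notes[note_id].update(body)
            return 200, self.notes[note_id]
        if method == "DELETE":                    # remove the resource
            del self.notes[note_id]
            return 204, None
        return 405, {"detail": "method not allowed"}
```

Note how PUT discards fields that are not in the new body, while PATCH keeps them. That distinction is the main reason both methods exist.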

Pydantic and FastAPI: A Perfect Pair

Pydantic simplifies data validation in Python by letting developers create robust data models with type hints and validation rules. It ensures data integrity and provides clear interface definitions, catching potential errors before they propagate through the system.

FastAPI complements Pydantic well, offering a modern, high-performance asynchronous web framework for building APIs.

Key advantages of FastAPI:

- Automatic interactive API documentation

- High-speed performance

- Built-in support for the Asynchronous Server Gateway Interface (ASGI)

- Intuitive data validation

- Clean and straightforward syntax

A Brief on Google Gemini

Google Gemini is a family of multimodal AI models capable of processing complex information across text, code, audio, and images. For this project, I use the 'gemini-1.5-flash' model, which provides:

- Rapid and intelligent text processing using prompts.

- Advanced natural language understanding.

- Flexible system instructions for customized outputs using prompts.

- The ability to generate nuanced, context-aware responses.

Project Setup and Environment Configuration

Setting up the development environment is crucial for a smooth implementation. We use Conda to create an isolated, reproducible environment.

# Create a new conda environment
conda create -n educator-api-env python=3.11

# Activate the environment
conda activate educator-api-env

# Install required packages
pip install "fastapi[standard]" google-generativeai python-dotenv

Project Architectural Components

Our API is structured into three main components:

- models.py: Defines data structures and validation

- services.py: Implements AI-powered text extractor services

- main.py: Creates API endpoints and handles request routing

Building the API: Code Implementation

Getting the Google Gemini API key and security setup for the project.

Create a .env file in the project root, grab your Gemini API key from here, and put your key in the .env file:

GOOGLE_API_KEY="ABCDEFGH-67xGHtsf"

The service module reads this value with os.getenv("GOOGLE_API_KEY") after python-dotenv has loaded the file, so the secret key never has to appear in the source code and does not become public.
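As a small illustration of the idea, the sketch below reads a required variable from the environment and fails early with a clear message when it is absent. The helper names `require_env` and `mask` are hypothetical and are not part of the project; the sketch assumes the variable has already been loaded into the environment (for example by `load_dotenv()`).

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or fail with a clear message."""
    value = os.getenv(name, "").strip()
    if not value:
        raise ValueError(f"{name} is missing. Set it in the .env file or the shell.")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Hide all but the last few characters so the value is safer to log."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

Calling `require_env("GOOGLE_API_KEY")` once at startup surfaces a missing key immediately, instead of at the first request, and `mask()` lets you confirm in the logs which key is in use without printing it in full.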

Pydantic Models: Ensuring Data Integrity

We define structured models that guarantee data consistency for the Gemini response. We implement two data models for each data extraction service.

Vocabulary data extraction model:

- WordDetails: Structures and validates a single word extracted by the AI.

from pydantic import BaseModel, Field
from typing import List, Optional

class WordDetails(BaseModel):
    word: str = Field(..., description="Extracted vocabulary word")
    synonyms: List[str] = Field(
        default_factory=list, description="Synonyms of the word"
    )
    antonyms: List[str] = Field(
        default_factory=list, description="Antonyms of the word"
    )
    usecase: Optional[str] = Field(None, description="Use case of the word")
    example: Optional[str] = Field(None, description="Example sentence")

- VocabularyResponse: Structures and validates the extracted words in two categories, very difficult words and medium difficult words.

class VocabularyResponse(BaseModel):
    difficult_words: List[WordDetails] = Field(
        ..., description="List of difficult vocabulary words"
    )
    medium_words: List[WordDetails] = Field(
        ..., description="List of medium vocabulary words"
    )

Question and Answer extraction model:

- QuestionAnswerModel: Structures and validates a single extracted question and its answer.

class QuestionAnswerModel(BaseModel):
    question: str = Field(..., description="Question")
    answer: str = Field(..., description="Answer")

- QuestionAnswerResponse: Structures and validates the full list of question-answer pairs returned by the AI.

class QuestionAnswerResponse(BaseModel):
    questions_and_answers: List[QuestionAnswerModel] = Field(
        ..., description="List of questions and answers"
    )

These models provide automatic validation, type checking, and clear interface definitions, preventing potential runtime errors.
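To see what that validation looks like in practice, here is a minimal sketch that feeds a complete and an incomplete payload to a model shaped like WordDetails. It assumes Pydantic is installed; the `validate_word` helper is a hypothetical name used only for this illustration.

```python
from typing import List, Optional

from pydantic import BaseModel, Field, ValidationError


class WordDetails(BaseModel):
    word: str
    synonyms: List[str] = Field(default_factory=list)
    antonyms: List[str] = Field(default_factory=list)
    usecase: Optional[str] = None
    example: Optional[str] = None


def validate_word(payload: dict):
    """Return (model, None) on success or (None, failing_field_names) on failure."""
    try:
        return WordDetails(**payload), None
    except ValidationError as err:
        # err.errors() describes each failing field; "loc" holds its location
        return None, [item["loc"][0] for item in err.errors()]
```

A payload without the required `word` key is rejected with the field name reported, while missing optional fields simply fall back to their defaults (an empty list or `None`).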

Service Module: Intelligent Text Processing

The service module has two services.

The first, GeminiVocabularyService:

- Uses Gemini to identify challenging words.

- Generates comprehensive word insights.

- Implements robust JSON parsing.

- Manages potential error scenarios.

First, we import all the necessary libraries and set up logging and the environment variables.

import os
import json
import logging
from fastapi import HTTPException
import google.generativeai as genai
from dotenv import load_dotenv

# Configure logging
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

# Load environment variables
load_dotenv()

The GeminiVocabularyService class has three methods.

The __init__ method holds the Gemini configuration: it reads the Google API key, sets up the generative model, and defines the prompt for vocabulary extraction.

Prompt:

"""You are an expert vocabulary extractor.

For the given text:
1. Identify 3-5 challenging vocabulary words
2. Provide the following for EACH word in a STRICT JSON format:
- word: The exact word
- synonyms: List of 2-3 synonyms
- antonyms: List of 2-3 antonyms
- usecase: A brief explanation of the word's usage
- example: An example sentence using the word

IMPORTANT: Return ONLY a valid JSON that matches this structure:
{
  "difficult_words": [
    {
      "word": "string",
      "synonyms": ["string1", "string2"],
      "antonyms": ["string1", "string2"],
      "usecase": "string",
      "example": "string"
    }
  ],
  "medium_words": [
    {
      "word": "string",
      "synonyms": ["string1", "string2"],
      "antonyms": ["string1", "string2"],
      "usecase": "string",
      "example": "string"
    }
  ]
}
"""

Code Implementation

class GeminiVocabularyService:
    def __init__(self):
        # Retrieve API key
        _google_api_key = os.getenv("GOOGLE_API_KEY")
        self.api_key = _google_api_key
        if not self.api_key:
            raise ValueError(
                "Google API Key is missing. Please set GOOGLE_API_KEY in .env file."
            )

        # Configure Gemini API
        genai.configure(api_key=self.api_key)

        # Generation configuration
        self.generation_config = {
            "temperature": 0.7,
            "top_p": 0.95,
            "max_output_tokens": 8192,
        }

        # Create generative model
        self.vocab_model = genai.GenerativeModel(
            model_name="gemini-1.5-flash",
            generation_config=self.generation_config,  # type: ignore
            system_instruction="""
            You are an expert vocabulary extractor.

            For the given text:
            1. Identify 3-5 challenging vocabulary words
            2. Provide the following for EACH word in a STRICT JSON format:
            - word: The exact word
            - synonyms: List of 2-3 synonyms
            - antonyms: List of 2-3 antonyms
            - usecase: A brief explanation of the word's usage
            - example: An example sentence using the word

            IMPORTANT: Return ONLY a valid JSON that matches this structure:
            {
              "difficult_words": [
                {
                  "word": "string",
                  "synonyms": ["string1", "string2"],
                  "antonyms": ["string1", "string2"],
                  "usecase": "string",
                  "example": "string"
                }
              ],
              "medium_words": [
                {
                  "word": "string",
                  "synonyms": ["string1", "string2"],
                  "antonyms": ["string1", "string2"],
                  "usecase": "string",
                  "example": "string"
                }
              ]
            }
            """,
        )

The extract_vocabulary method starts a chat session and gets the response from Gemini by sending the input text with send_message_async(). It relies on one private utility function, _parse_response(), which validates the Gemini response, checks for the required keys, and returns the parsed data to extract_vocabulary. It also logs errors such as JSONDecodeError and ValueError for better error management.

Code Implementation

The extract_vocabulary method:

    async def extract_vocabulary(self, text: str) -> dict:
        response_text = ""  # defined up front so the error log below never fails
        try:
            # Create a new chat session
            chat_session = self.vocab_model.start_chat(history=[])
            # Send message and await response
            response = await chat_session.send_message_async(text)
            # Extract and clean the text response
            response_text = response.text.strip()
            # Attempt to extract JSON
            return self._parse_response(response_text)
        except HTTPException:
            # Keep the 400 raised by _parse_response instead of masking it as a 500
            raise
        except Exception as e:
            logger.error(f"Vocabulary extraction error: {str(e)}")
            logger.error(f"Full response: {response_text}")
            raise HTTPException(
                status_code=500, detail=f"Vocabulary extraction failed: {str(e)}"
            )

The _parse_response method:

    def _parse_response(self, response_text: str) -> dict:
        # Remove markdown code blocks if present
        response_text = response_text.replace("```json", "").replace("```", "").strip()
        try:
            # Attempt to parse JSON
            parsed_data = json.loads(response_text)
            # Validate the structure
            if (
                not isinstance(parsed_data, dict)
                or "difficult_words" not in parsed_data
            ):
                raise ValueError("Invalid JSON structure")
            return parsed_data
        except json.JSONDecodeError as json_err:
            logger.error(f"JSON Decode Error: {json_err}")
            logger.error(f"Problematic response: {response_text}")
            raise HTTPException(
                status_code=400, detail="Invalid JSON response from Gemini"
            )
        except ValueError as val_err:
            logger.error(f"Validation Error: {val_err}")
            raise HTTPException(
                status_code=400, detail="Invalid vocabulary extraction response"
            )

Together, __init__, extract_vocabulary, and _parse_response make up the complete GeminiVocabularyService class.

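The fence-stripping step in _parse_response works when the model wraps its JSON in a markdown code block, but a reply can also carry extra sentences before or after the JSON. As a hedged alternative, the sketch below pulls out the first JSON object wherever it sits in the reply. It uses only the standard library; `extract_json_object` is a hypothetical helper, not part of the project or of the Gemini SDK.

```python
import json
import re

TICKS = "`" * 3  # a markdown code fence marker
FENCE = re.compile(TICKS + r"(?:json)?\s*(.*?)" + TICKS, re.DOTALL | re.IGNORECASE)


def extract_json_object(raw: str) -> dict:
    """Pull the first JSON object out of a model reply that may contain extra text."""
    match = FENCE.search(raw)
    # Prefer the content of a fenced block; otherwise scan the whole reply
    candidate = match.group(1) if match else raw
    start, end = candidate.find("{"), candidate.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("No JSON object found in the response")
    # json.JSONDecodeError is a subclass of ValueError, so callers can catch one type
    return json.loads(candidate[start:end + 1])
```

Because both failure modes surface as `ValueError`, a caller could wrap this in a single `except ValueError` and translate it into the same HTTPException the service already raises.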

Question-Answer Generation Service

The Question Answer Service:

- Creates contextually rich comprehension questions.

- Generates precise, informative answers.

- Handles complex text analysis requirements.

- Handles JSON and value errors.

The QuestionAnswerService class has three methods:

__init__ method

The __init__ method is mostly the same as in the vocabulary service class, except for the prompt.

Prompt:

"""
You are an expert at creating comprehensive comprehension questions and answers.

For the given text:
1. Generate 8-10 diverse questions covering:
- Vocabulary meaning
- Literary devices
- Grammatical analysis
- Thematic insights
- Contextual understanding

IMPORTANT: Return ONLY a valid JSON in this EXACT format:
{
  "questions_and_answers": [
    {
      "question": "string",
      "answer": "string"
    }
  ]
}

Guidelines:
- Questions should be clear and specific
- Answers should be concise and accurate
- Cover different levels of comprehension
- Avoid yes/no questions
"""

Code Implementation:

The __init__ method of the QuestionAnswerService class

class QuestionAnswerService:
    def __init__(self):
        # Retrieve API key
        _google_api_key = os.getenv("GOOGLE_API_KEY")
        self.api_key = _google_api_key
        if not self.api_key:
            raise ValueError(
                "Google API Key is missing. Please set GOOGLE_API_KEY in .env file."
            )

        # Configure Gemini API
        genai.configure(api_key=self.api_key)

        # Generation configuration
        self.generation_config = {
            "temperature": 0.7,
            "top_p": 0.95,
            "max_output_tokens": 8192,
        }

        self.qa_model = genai.GenerativeModel(
            model_name="gemini-1.5-flash",
            generation_config=self.generation_config,  # type: ignore
            system_instruction="""
            You are an expert at creating comprehensive comprehension questions and answers.

            For the given text:
            1. Generate 8-10 diverse questions covering:
            - Vocabulary meaning
            - Literary devices
            - Grammatical analysis
            - Thematic insights
            - Contextual understanding

            IMPORTANT: Return ONLY a valid JSON in this EXACT format:
            {
              "questions_and_answers": [
                {
                  "question": "string",
                  "answer": "string"
                }
              ]
            }

            Guidelines:
            - Questions should be clear and specific
            - Answers should be concise and accurate
            - Cover different levels of comprehension
            - Avoid yes/no questions
            """,
        )

The Question and Answer Extraction

The extract_questions_and_answers method opens a chat session with Gemini, wraps the input text in a fuller prompt to improve the extracted questions and answers, sends it asynchronously to the Gemini API with send_message_async(full_prompt), and strips the response text. Like the vocabulary service, it hands the result to a private _parse_response utility function.

Code Implementation:

extract_questions_and_answers

    async def extract_questions_and_answers(self, text: str) -> dict:
        """
        Extracts questions and answers from the given text using the provided model.
        """
        response_text = ""  # defined up front so the error log below never fails
        try:
            # Create a new chat session
            chat_session = self.qa_model.start_chat(history=[])
            full_prompt = f"""
            Analyze the following text and generate comprehensive comprehension questions and answers:

            {text}

            Ensure the questions and answers provide deep insights into the text's meaning, style, and context.
            """
            # Send message and await response
            response = await chat_session.send_message_async(full_prompt)
            # Extract and clean the text response
            response_text = response.text.strip()
            # Attempt to parse and validate the response
            return self._parse_response(response_text)
        except HTTPException:
            # Keep the 400 raised by _parse_response instead of masking it as a 500
            raise
        except Exception as e:
            logger.error(f"Question and answer extraction error: {str(e)}")
            logger.error(f"Full response: {response_text}")
            raise HTTPException(
                status_code=500, detail=f"Question-answer extraction failed: {str(e)}"
            )

_parse_response

    def _parse_response(self, response_text: str) -> dict:
        """
        Parses and validates the JSON response from the model.
        """
        # Remove markdown code blocks if present
        response_text = response_text.replace("```json", "").replace("```", "").strip()
        try:
            # Attempt to parse JSON
            parsed_data = json.loads(response_text)
            # Validate the structure
            if (
                not isinstance(parsed_data, dict)
                or "questions_and_answers" not in parsed_data
            ):
                raise ValueError(
                    "Response must be an object with a 'questions_and_answers' key."
                )
            return parsed_data
        except json.JSONDecodeError as json_err:
            logger.error(f"JSON Decode Error: {json_err}")
            logger.error(f"Problematic response: {response_text}")
            raise HTTPException(
                status_code=400, detail="Invalid JSON response from the model"
            )
        except ValueError as val_err:
            logger.error(f"Validation Error: {val_err}")
            raise HTTPException(
                status_code=400, detail="Invalid question-answer extraction response"
            )

API Endpoints: Connecting Users to AI

The main file defines two major POST endpoints.

The first is a POST method that takes the input text from the client and sends it to the Gemini API through the vocabulary extraction service. It rejects input shorter than 10 characters, validates the response data against the Pydantic model for consistency, and stores the result in the in-memory storage.

@app.post("/extract-vocabulary/", response_model=VocabularyResponse)
async def extract_vocabulary(text: str):
    # Validate input
    if not text or len(text.strip()) < 10:
        raise HTTPException(status_code=400, detail="Input text is too short")
    # Extract vocabulary
    result = await vocab_service.extract_vocabulary(text)
    # Store vocabulary in memory
    key = hash(text)
    vocabulary_storage[key] = VocabularyResponse(**result)
    return vocabulary_storage[key]

The second POST method is mostly the same as the previous one, except that it uses the question-answer extraction service.

@app.post("/extract-question-answer/", response_model=QuestionAnswerResponse)
async def extract_question_answer(text: str):
    # Validate input
    if not text or len(text.strip()) < 10:
        raise HTTPException(status_code=400, detail="Input text is too short")
    # Extract questions and answers
    result = await qa_service.extract_questions_and_answers(text)
    # Store result for retrieval (using hash of text as key for simplicity)
    key = hash(text)
    qa_storage[key] = QuestionAnswerResponse(**result)
    return qa_storage[key]

There are two major GET methods.

First, the get-vocabulary method computes the hash key of the client's text. If that key is present in the storage, the stored vocabulary is returned as JSON. This method is used to show the data in the client-side UI on the web page.

@app.get("/get-vocabulary/", response_model=Optional[VocabularyResponse])
async def get_vocabulary(text: str):
    """
    Retrieve the vocabulary response for a previously processed text.
    """
    key = hash(text)
    if key in vocabulary_storage:
        return vocabulary_storage[key]
    else:
        raise HTTPException(
            status_code=404, detail="Vocabulary result not found for the provided text"
        )

Second, the get-question-answer method also looks up the hash key of the client's text, just like the previous method, and returns the JSON response kept in the storage to the client-side UI.

@app.get("/get-question-answer/", response_model=Optional[QuestionAnswerResponse])
async def get_question_answer(text: str):
    """
    Retrieve the question-answer response for a previously processed text.
    """
    key = hash(text)
    if key in qa_storage:
        return qa_storage[key]
    else:
        raise HTTPException(
            status_code=404,
            detail="Question-answer result not found for the provided text",
        )

Key Implementation Features

To run the application, we have to import the libraries and instantiate a FastAPI service.

Import Libraries

from fastapi import FastAPI, HTTPException
from fastapi.middleware.cors import CORSMiddleware
from typing import Optional
from .models import VocabularyResponse, QuestionAnswerResponse
from .services import GeminiVocabularyService, QuestionAnswerService

Instantiate FastAPI Application

# FastAPI Application
app = FastAPI(title="English Educator API")

Cross-origin Resource Sharing (CORS) Support

Cross-origin resource sharing (CORS) is an HTTP-header-based mechanism that allows a server to indicate any origins (domain, scheme, or port) other than its own from which a browser should permit loading resources. For security reasons, browsers restrict cross-origin HTTP requests initiated from scripts.

# FastAPI Application
app = FastAPI(title="English Educator API")

# Add CORS middleware
app.add_middleware(
    CORSMiddleware,
    allow_origins=["*"],
    allow_credentials=True,
    allow_methods=["*"],
    allow_headers=["*"],
)

In-memory Validation Mechanism: Simple Keyword Storage

We use simple key-value storage for the project, but you can use a database such as MongoDB instead.

# Simple keyword storage
vocabulary_storage = {}
qa_storage = {}

Input validation mechanisms and comprehensive error handling.
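One detail worth knowing about the hash(text) keys: Python salts the built-in hash() of strings per process by default, so the same text produces a different key after a server restart or in another worker process. That is harmless for a single-process, in-memory dictionary, but it matters once the storage becomes persistent. A minimal sketch of a stable alternative, assuming a hypothetical helper named `text_key` that is not part of the project:

```python
import hashlib


def text_key(text: str) -> str:
    """Derive a storage key that is identical in every process and on every run."""
    normalized = " ".join(text.split())  # collapse whitespace differences
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


# Same idea as the storage dictionaries above, keyed by the stable digest
vocabulary_storage = {}
vocabulary_storage[text_key("The quick  brown fox")] = {"difficult_words": []}
```

Normalizing whitespace before hashing is a design choice of this sketch: it lets a GET request find the stored result even if the client sends the same text with a trailing newline or doubled spaces.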

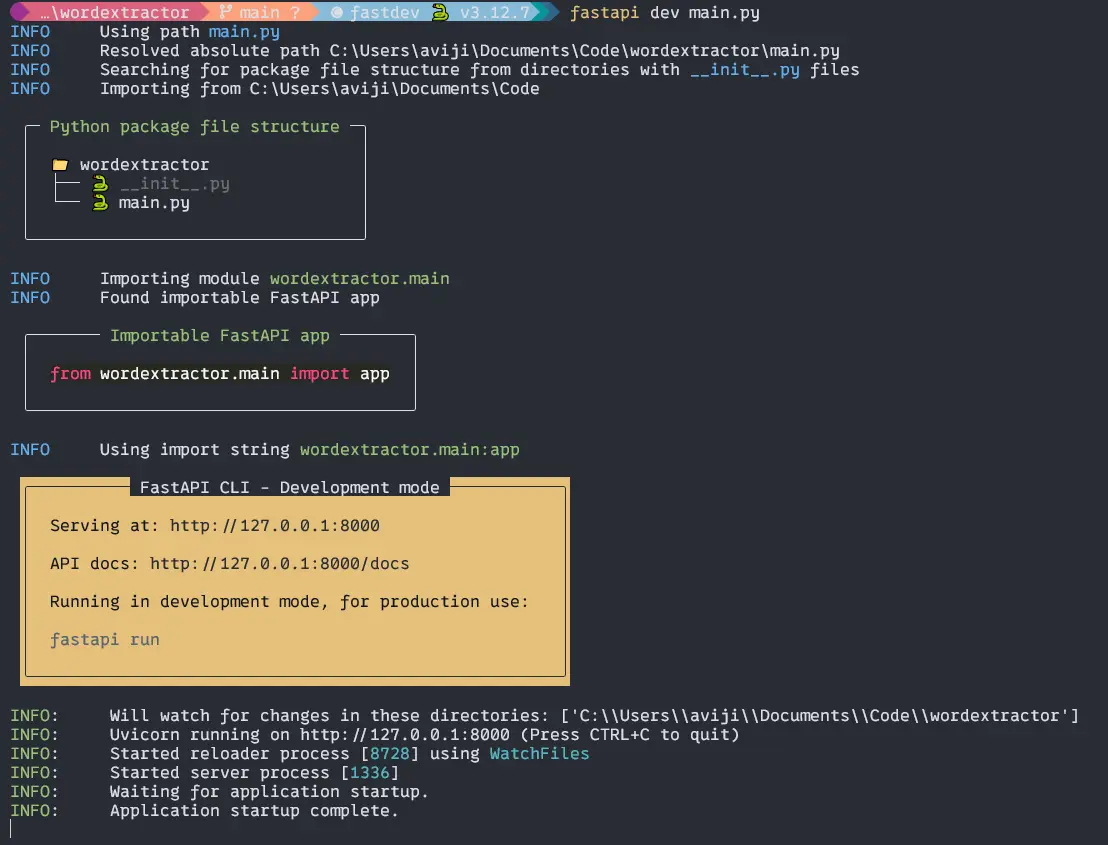

Now is the time to run the application.

To run the application in development mode, we use the FastAPI CLI, which is installed along with FastAPI.

Type the following command in your terminal from the application root.

$ fastapi dev main.py

Output:

Then, if you Ctrl + click the link http://127.0.0.1:8000, you will see a welcome screen in the web browser.

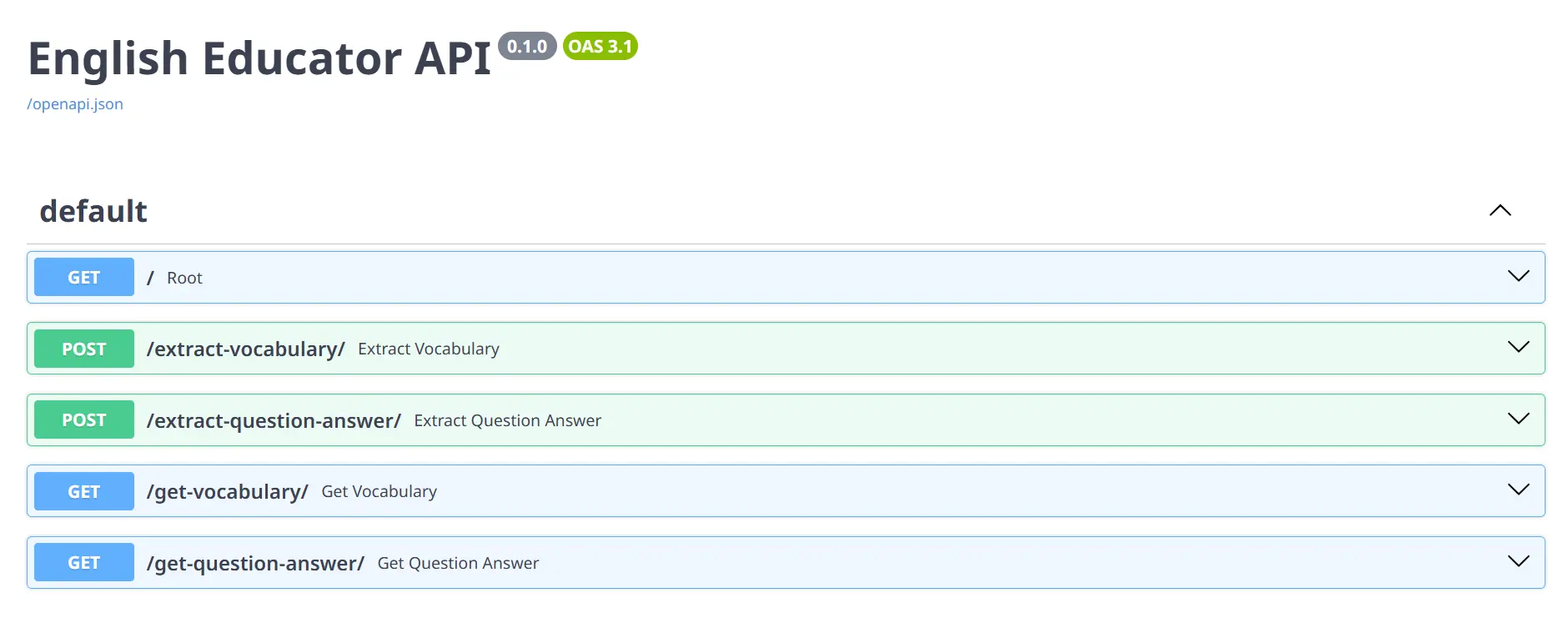

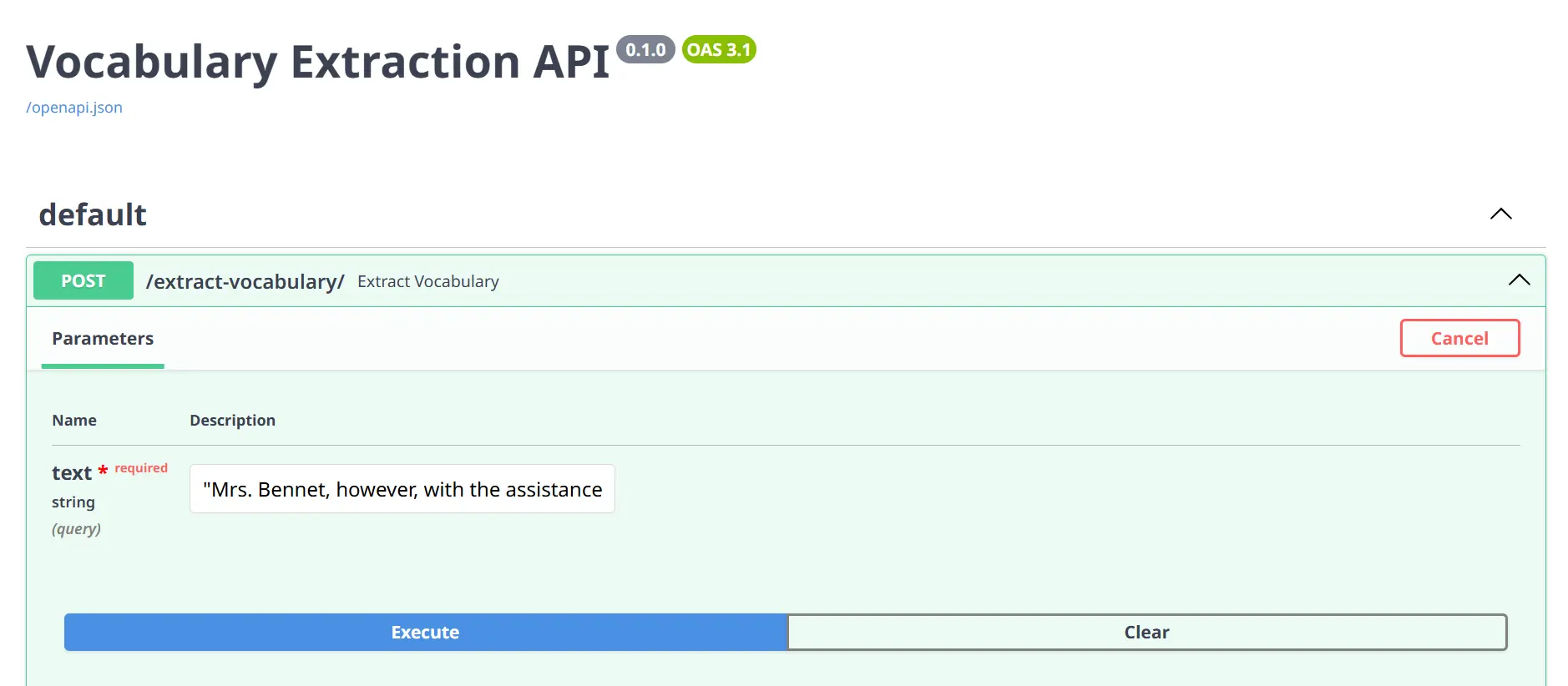

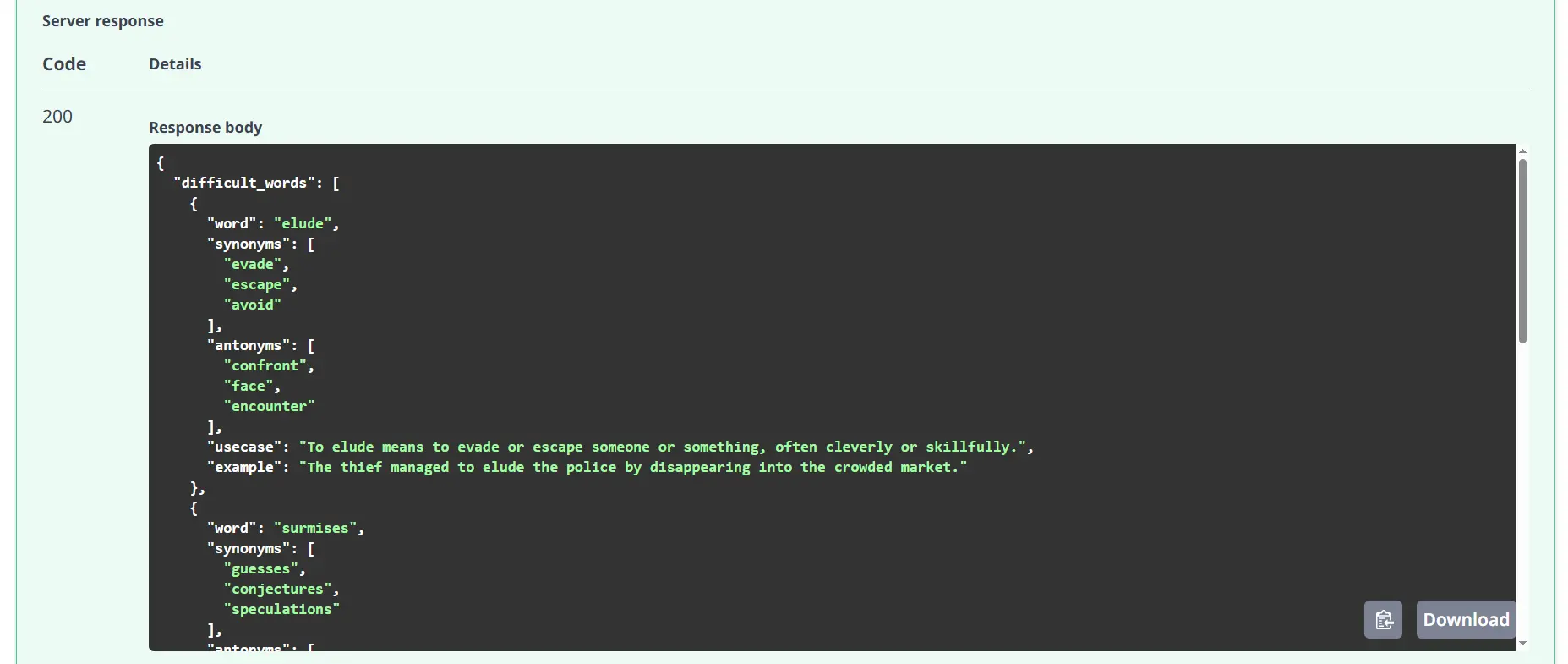

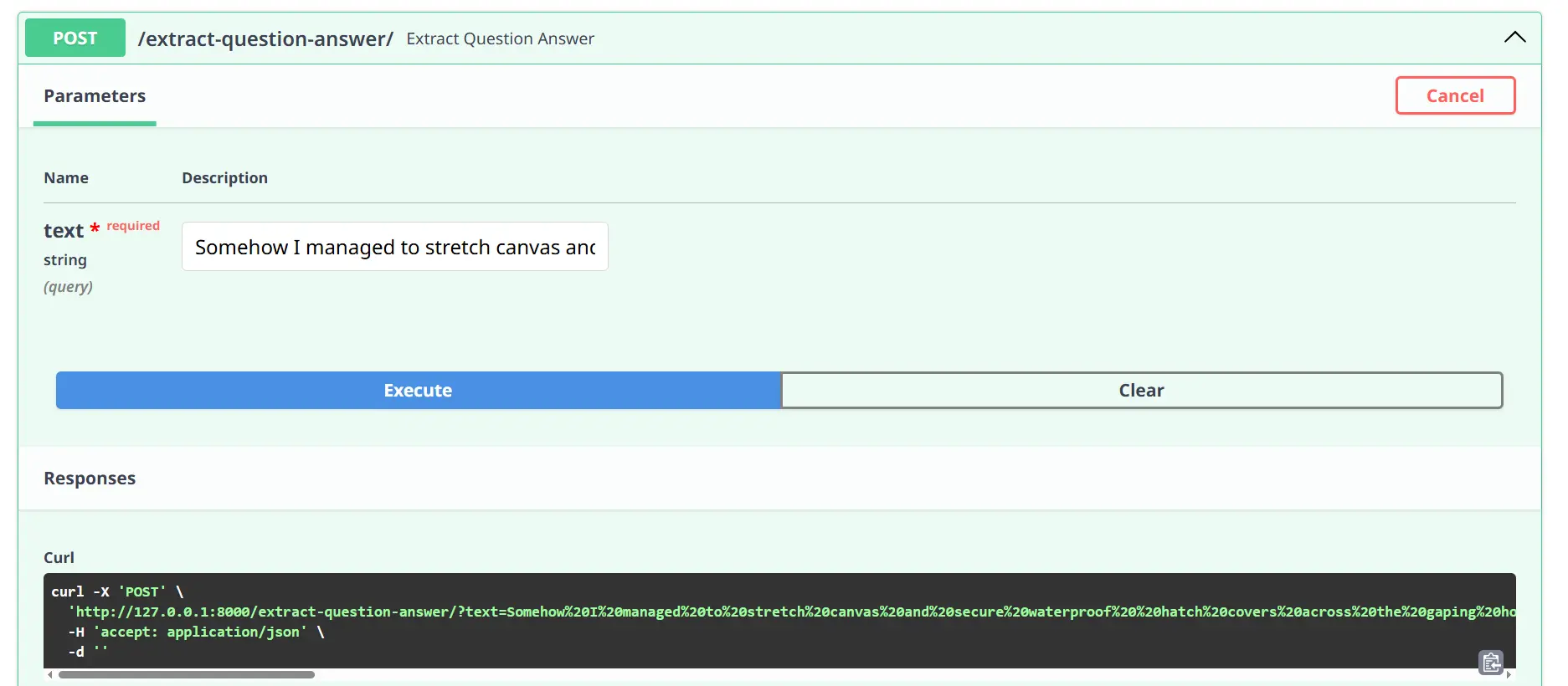

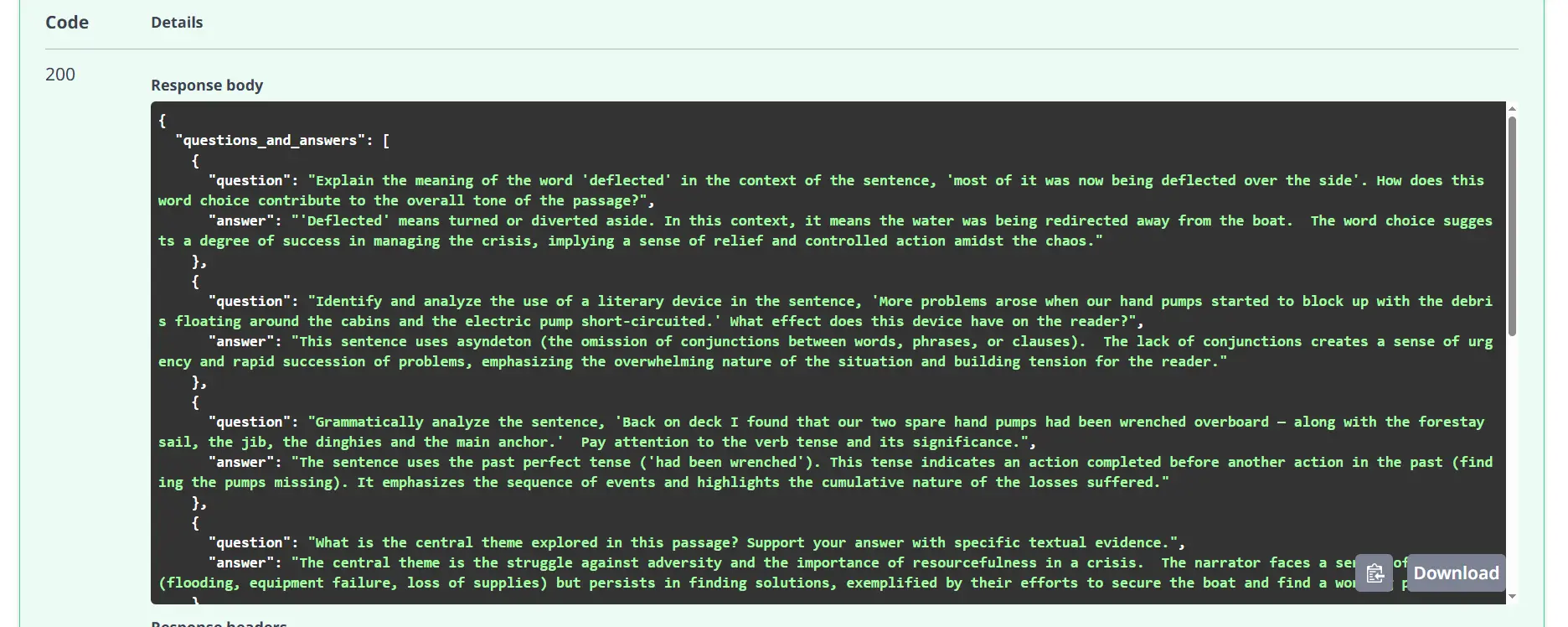

To open the FastAPI docs page, click the next URL or type http://127.0.0.1:8000/docs in your browser. The page lists all the HTTP methods so that you can test them.

To test the API, click any of the POST methods, choose TRY IT OUT, enter any text you like in the input field, and execute. You will get a response from the corresponding service, either vocabulary or question-answer.

Execute:

Response:

Execute:

Response:
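The same requests can be sent from a script instead of the docs page. The sketch below assumes the server is running locally on the default address shown above. Because the endpoints declare `text: str` as a plain function parameter, FastAPI reads it from the query string, so the client URL-encodes the text into the URL. The helper names `build_url` and `call_api` are hypothetical and only serve this illustration.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "http://127.0.0.1:8000"  # address printed by `fastapi dev` (assumed default)


def build_url(path: str, text: str) -> str:
    """Attach the text as a URL-encoded query parameter."""
    return f"{BASE_URL}{path}?{urllib.parse.urlencode({'text': text})}"


def call_api(path: str, text: str, method: str = "POST") -> dict:
    """Send the request and decode the JSON body (requires the server to be running)."""
    request = urllib.request.Request(build_url(path, text), method=method)
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Example usage once the server is up:
# sample = "The ephemeral nature of fame often bewilders newcomers."
# print(call_api("/extract-vocabulary/", sample))
# print(call_api("/get-vocabulary/", sample, method="GET"))
```

Long passages can exceed practical URL length limits, so a production version would more likely accept the text in a JSON request body.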

Testing GET Methods

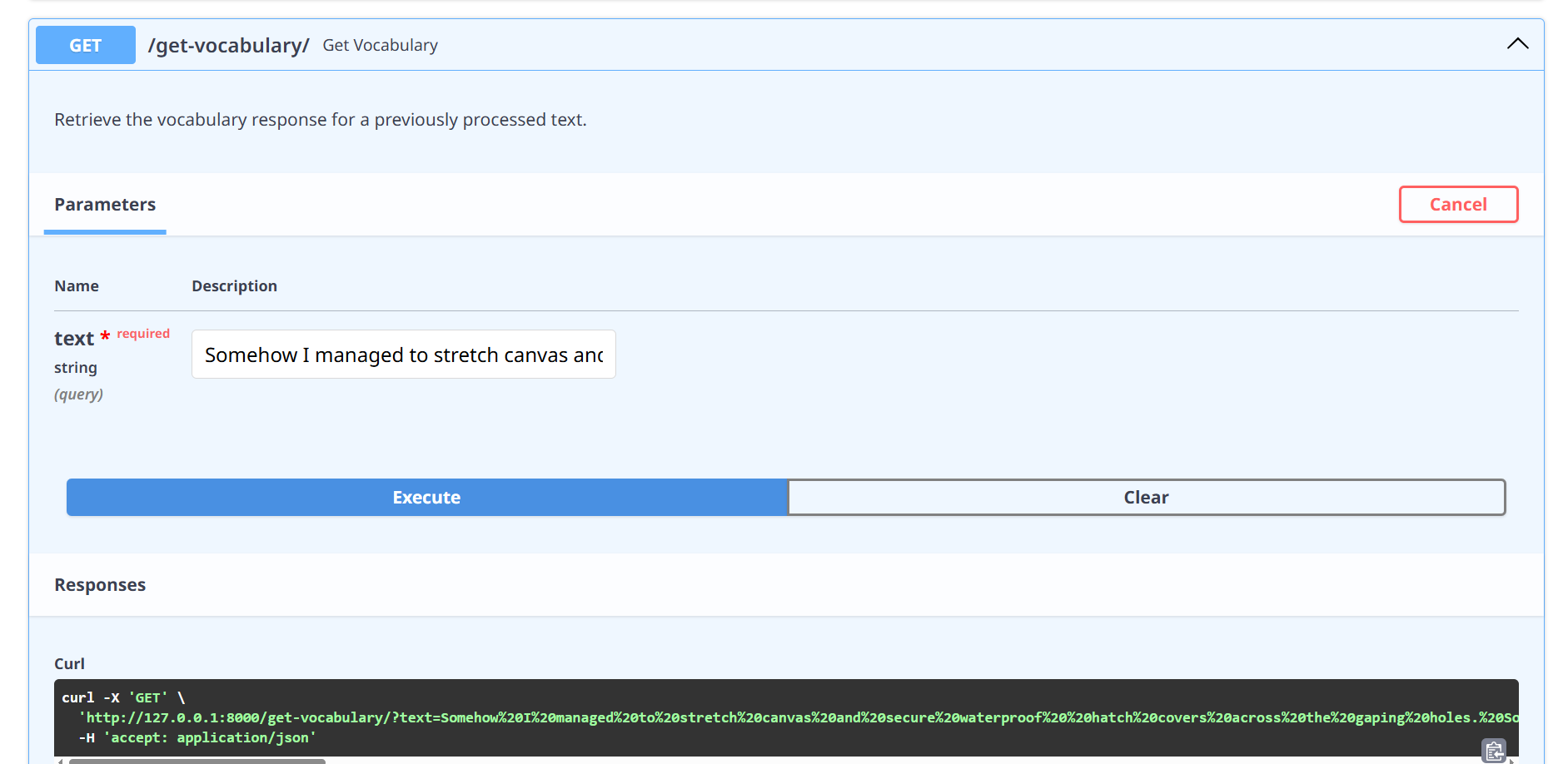

Get vocabulary from the storage.

Execute:

Enter the same text you used for the POST method in the input field.

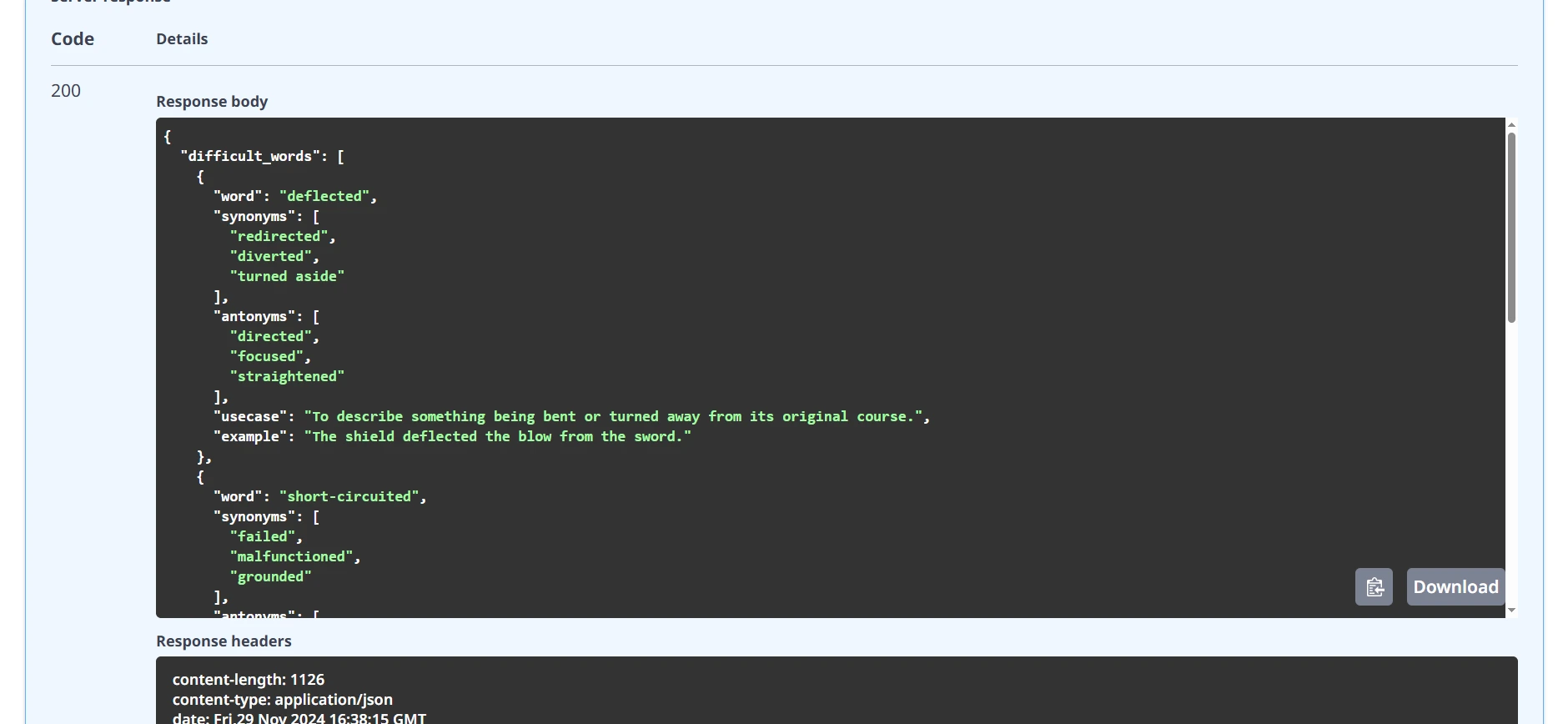

Response:

You will get the output below from the storage.

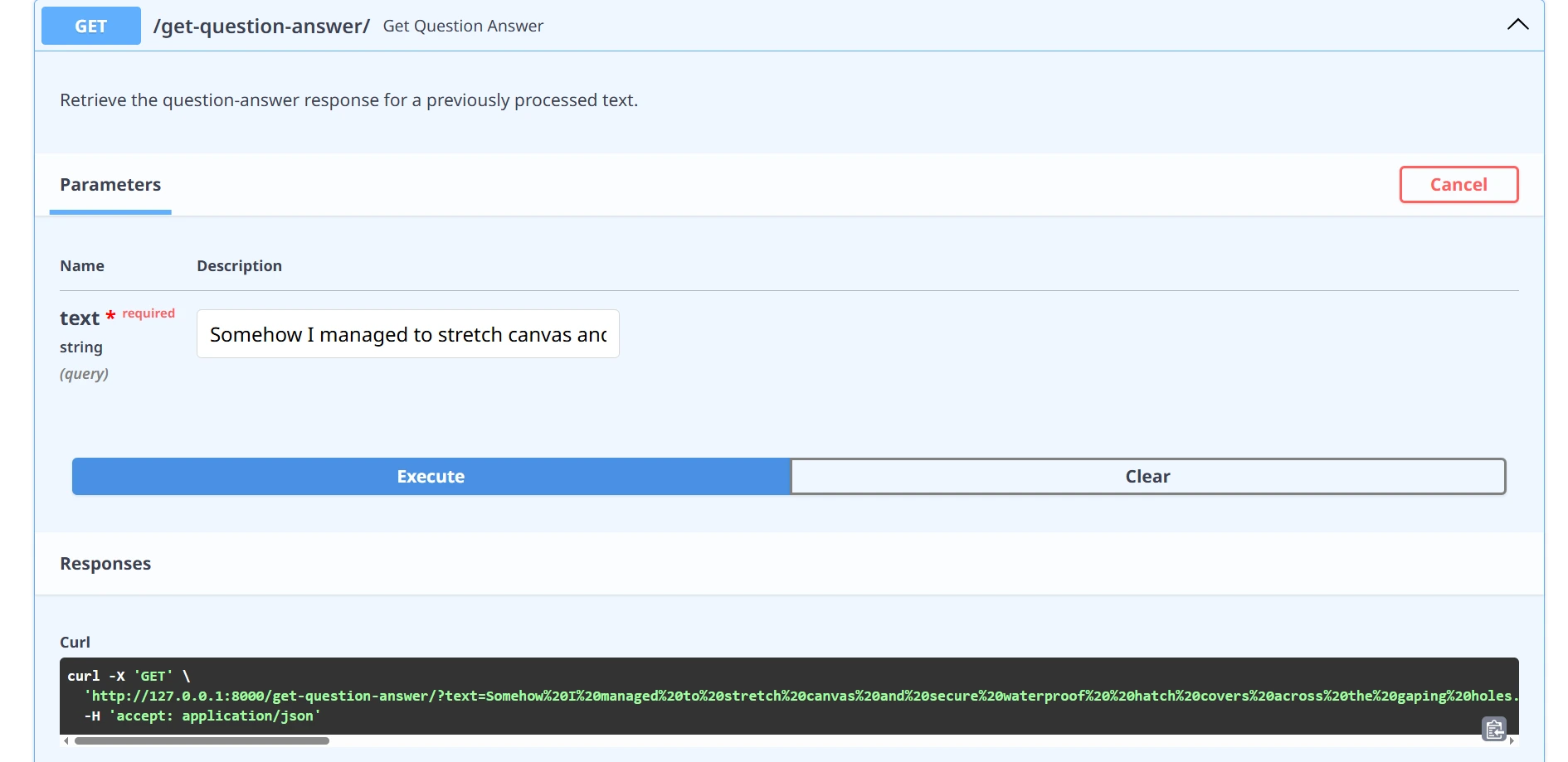

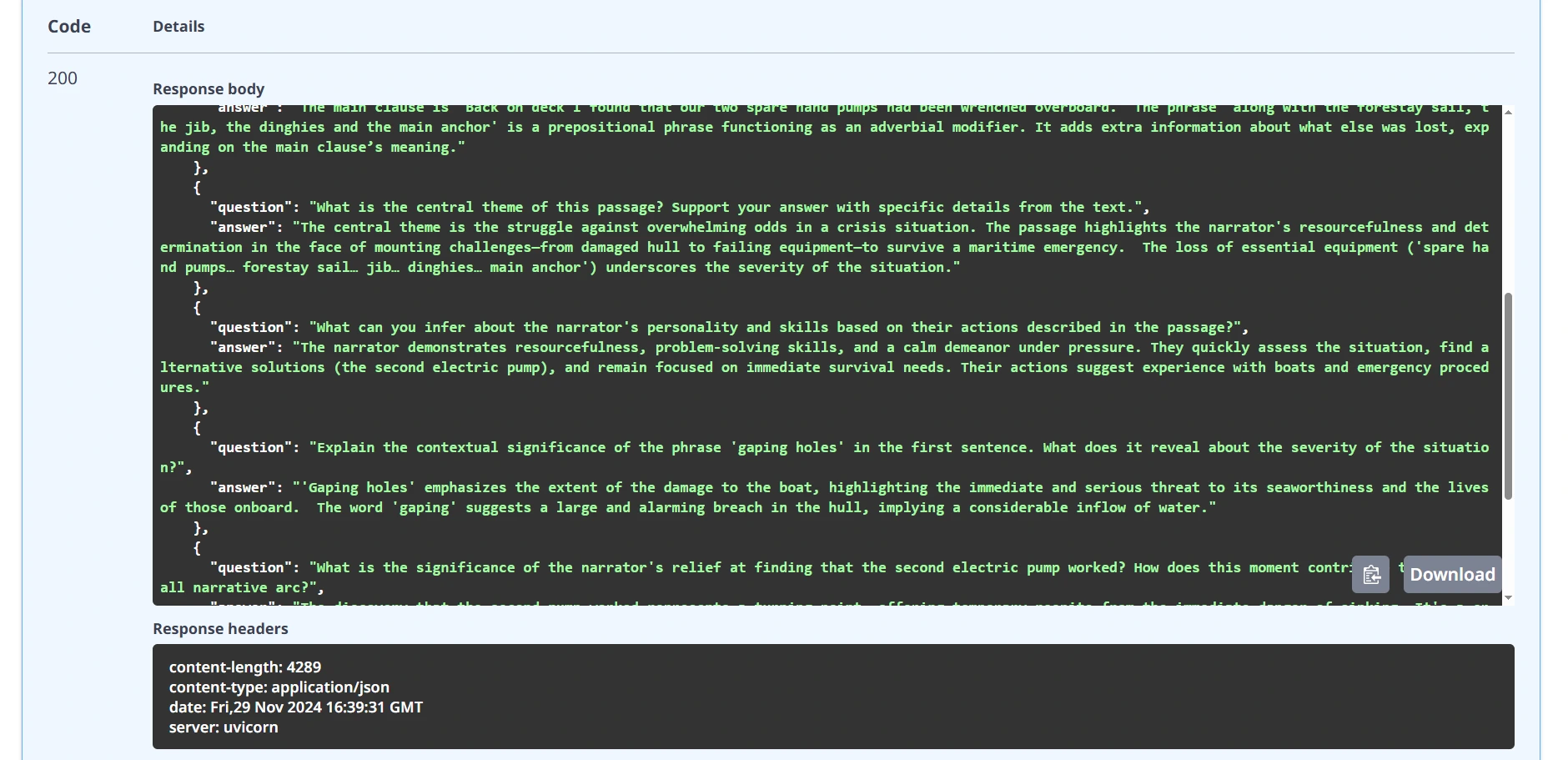

The same applies to question-and-answer.

Execute:

Response:

That gives you a fully working web server API for English educators using Google Gemini AI.

Further Development Opportunities

The current implementation opens the door to several future enhancements:

- Explore persistent storage solutions to retain data across sessions.

- Integrate robust authentication mechanisms for enhanced security.

- Advance text analysis capabilities with more sophisticated features.

- Design and build an intuitive front-end interface for better user interaction.

- Implement efficient rate limiting and caching strategies to optimize performance.

Practical Considerations and Limitations

While our API demonstrates powerful capabilities, you should keep the following in mind:

- Consider API usage costs and rate limits when planning usage, to avoid unexpected charges and ensure scalability.

- Be mindful of processing time for complex texts, as longer or more intricate inputs may result in slower response times.

- Prepare for continuous model updates from Google, which may affect the API's performance or capabilities over time.

- Understand that AI-generated responses can vary, so it is important to account for potential inconsistencies in output quality.

Conclusion

We have created a flexible, intelligent API that transforms text analysis through the combination of Google Gemini, FastAPI, and Pydantic. This solution demonstrates how modern AI technologies can be leveraged to extract deep, meaningful insights from textual data.

You can get all the code of the project in the CODE REPO.

Key Takeaways

- AI-powered APIs can present clever, context-aware textual content evaluation.

- FastAPI simplifies complex API development with automatic documentation.

- The English Educator App API empowers developers to create interactive and personalized language learning experiences.

- Integrating the English Educator App API can streamline content delivery, improving both educational outcomes and user engagement.

Frequently Asked Questions

A. The current version uses environment-based API key management and includes basic input validation. For production, additional security layers are recommended.

A. Always review Google Gemini's current terms of service and licensing for commercial implementations.

A. Performance depends on Gemini API response times, input complexity, and your specific processing requirements.

A. The English Educator App API provides tools for educators to create customized language learning experiences, offering features like vocabulary extraction, pronunciation suggestions, and advanced text analysis.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author's discretion.