Introduction

Think about sifting by hundreds of pictures to search out that one excellent shot—tedious, proper? Now, image a system that may do that in seconds, immediately presenting you with probably the most related pictures based mostly in your question. On this article, you’ll dive into the fascinating world of picture similarity search, the place we’ll remodel pictures into numerical vectors utilizing the highly effective VGG16 mannequin. With these vectors listed by FAISS, a instrument designed to swiftly and precisely find related gadgets, you’ll learn to construct a streamlined and environment friendly search system. By the top, you’ll not solely grasp the magic behind vector embeddings and FAISS but in addition acquire hands-on expertise to implement your individual high-speed picture retrieval system.

Studying Goals

- Perceive how vector embeddings convert complicated information into numerical representations for evaluation.

- Study the position of VGG16 in producing picture embeddings and its utility in picture similarity search.

- Achieve perception into FAISS and its capabilities for indexing and quick retrieval of comparable vectors.

- Develop expertise to implement a picture similarity search system utilizing VGG16 and FAISS.

- Discover challenges and options associated to high-dimensional information and environment friendly similarity searches.

This text was printed as part of the Knowledge Science Blogathon.

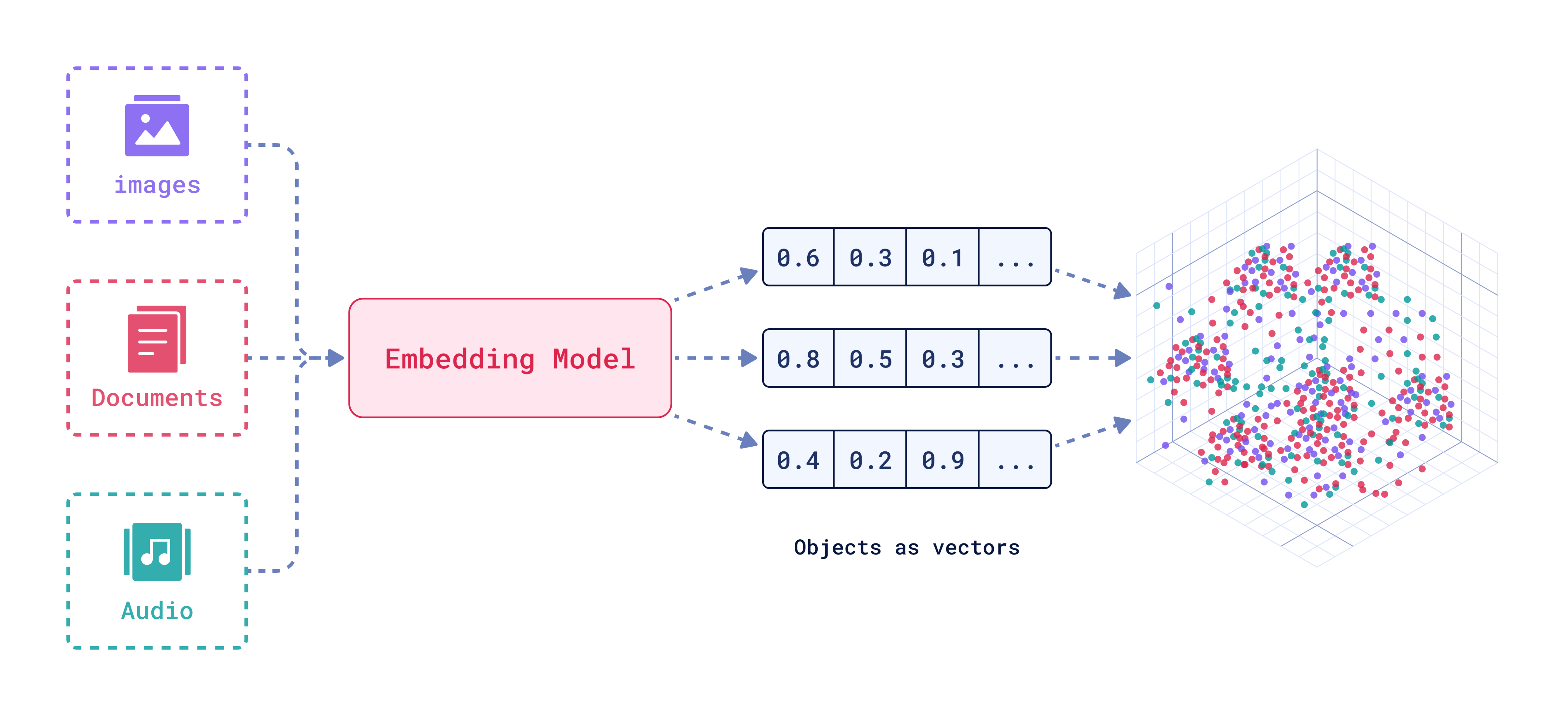

Understanding Vector Embeddings

Vector embeddings are a approach to signify information like pictures, textual content, or audio as numerical vectors. On this illustration related gadgets are positioned close to one another in a high-dimensional area, which helps computer systems rapidly discover and evaluate associated info.

Benefits of Vector Embeddings

Allow us to now discover benefits of vector embeddings intimately.

- Vector embeddings are time environment friendly as distance between vectors may be computed quickly.

- Embeddings deal with giant dataset effectively making them scalable and appropriate for large information functions.

- Excessive-dimensional information, comparable to pictures, can signify lower-dimensional areas with out shedding important info. This illustration simplifies storage and enhances area effectivity. It captures the semantic which means between information gadgets, resulting in extra correct leads to duties requiring contextual understanding, comparable to NLP and picture recognition.

- Vector embeddings are versatile as they are often utilized to completely different information sorts

- Pre-trained embeddings and vector databases can be found which cut back the necessity for in depth coaching of information thus we save on computational sources.

- Historically characteristic engineering strategies require guide creation and number of options, embeddings automate characteristic engineering course of by studying options from information.

- Embeddings are extra adaptable to new inputs higher as in comparison with rule-based fashions.

- Graph based mostly approaches additionally seize complicated relations however require extra complicated information constructions and algorithms, embeddings are computationally much less in depth.

What’s VGG16?

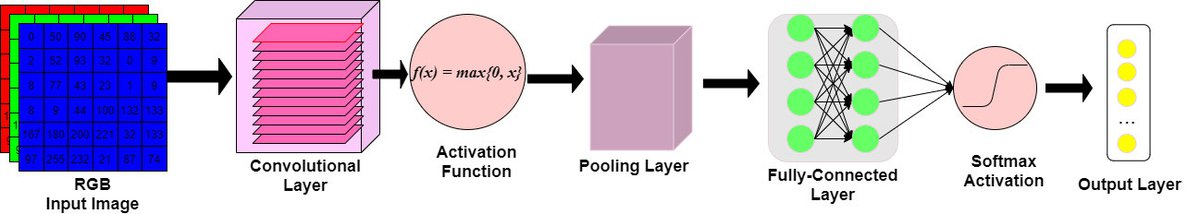

We can be utilizing VGG16 to calculate our picture embeddings .VGG16 is a Convolutional Neural Community it’s an object detection and classification algorithm. The 16 stands for the 16 layers with learnable weights within the community.

Beginning with an enter picture and resizing it to 224×224 pixels with three coloration channels . These are actually handed to a convolutional layers that are like a sequence of filters that take a look at small elements of the picture. Every filter right here captures completely different options comparable to edges, colours, textures and so forth. VGG16 makes use of 3X3 filters which signifies that they take a look at 3X3 pixel space at a time. There are 13 convolutional layers in VGG16.That is adopted by the Activation Operate (ReLU) which stands for Rectified Linear Unit and it provides non linearity to the mannequin which permits it to study extra complicated patters.

Subsequent are the Pooling Layers which cut back the scale of the picture illustration by taking a very powerful info from small patches which shrinks the picture whereas conserving the necessary options.VGG16 makes use of 2X2 pooling layers which implies it reduces the picture measurement by half. Lastly the Absolutely Linked Layer receives the output and makes use of all the knowledge that the convolutional layers have realized to reach at a last conclusion. The output layer determines which class the enter picture almost definitely belongs to by producing possibilities for every class utilizing a softmax perform.

First load the mannequin then use VGG16 with pre-trained weights however take away the final layer that offers the ultimate classification. Now resize and preprocess the pictures to suit the mannequin’s necessities which is 224 X 224 pixels and at last compute embeddings by passing the pictures by the mannequin from one of many totally linked layers.

Utilizing FAISS for Indexing

Fb AI Analysis developed Fb AI Similarity Search (FAISS) to effectively search and cluster dense vectors. FAISS excels at dealing with large-scale datasets and rapidly discovering gadgets much like a question.

What’s Similarity Looking ?

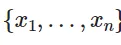

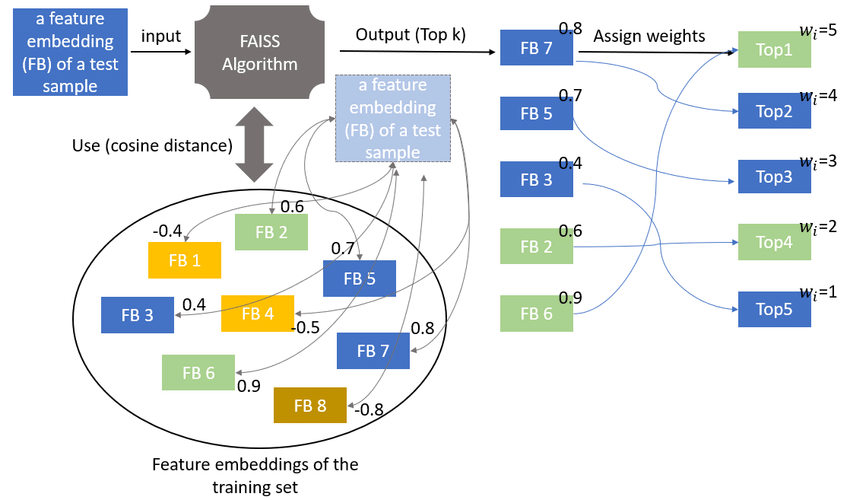

FAISS constructs an index in RAM once you present a vector of dimension (d_i). This index, an object with an add technique, shops the (x_i) vector, assuming a hard and fast dimension for (x_i).

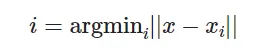

After the construction is constructed when a brand new vector x is offered in dimension d it performs the next operation. Computing the argmin is the search operation on the index.

FAISS discover the gadgets within the index closest to the brand new merchandise.

Right here || .|| represents the Euclidean distance (L2). The closeness is measured utilizing Euclidean distance.

Code Implementation utilizing Vector Embeddings

We’ll now look into code Implementation for detection of comparable pictures utilizing vector embeddings.

Step 1: Import Libraries

Import obligatory libraries for picture processing, mannequin dealing with, and similarity looking out.

import cv2

import numpy as np

import faiss

import os

from keras.functions.vgg16 import VGG16, preprocess_input

from keras.preprocessing import picture

from keras.fashions import Mannequin

from google.colab.patches import cv2_imshowStep 2: Load Pictures from Folder

Outline a perform to load all pictures from a specified folder and its subfolders.

# Load pictures from folder and subfolders

def load_images_from_folder(folder):

pictures = []

image_paths = []

for root, dirs, information in os.stroll(folder):

for file in information:

if file.endswith(('jpg', 'jpeg', 'png')):

img_path = os.path.be a part of(root, file)

img = cv2.imread(img_path)

if img shouldn't be None:

pictures.append(img)

image_paths.append(img_path)

return pictures, image_pathsStep 3: Load Pre-trained Mannequin and Take away High Layers

Load the VGG16 mannequin pre-trained on ImageNet and modify it to output embeddings from the ‘fc1’ layer.

# Load pre-trained mannequin and take away high layers

base_model = VGG16(weights="imagenet")

mannequin = Mannequin(inputs=base_model.enter, outputs=base_model.get_layer('fc1').output)Step 4: Compute Embeddings Utilizing VGG16

Outline a perform to compute picture embeddings utilizing the modified VGG16 mannequin.

# Compute embeddings utilizing VGG16

def compute_embeddings(pictures)

embeddings = []

for img in pictures:

img = cv2.resize(img, (224, 224))

img = picture.img_to_array(img)

img = np.expand_dims(img, axis=0)

img = preprocess_input(img)

img_embedding = mannequin.predict(img)

embeddings.append(img_embedding.flatten())

return np.array(embeddings)Step 5: Create FAISS Index

Outline a perform to create a FAISS index from the computed embeddings.

def create_index(embeddings):

d = embeddings.form[1]

index = faiss.IndexFlatL2(d)

index.add(embeddings)

return indexStep 6: Load Pictures and Compute Embeddings

Load pictures, compute their embeddings, and create a FAISS index.

pictures, image_paths = load_images_from_folder('pictures')

embeddings = compute_embeddings(pictures)

index = create_index(embeddings)Step 7: Seek for Related Pictures

Outline a perform to seek for probably the most related pictures within the FAISS index.

def search_similar_images(index, query_embedding, top_k=1):

D, I = index.search(query_embedding, top_k)

return IStep 8: Instance Utilization

Load a question picture, compute its embedding, and seek for related pictures.

# Seek for related pictures

def search_similar_images(index, query_embedding, top_k=1):

D, I = index.search(query_embedding, top_k)

return IStep 9: Show Outcomes

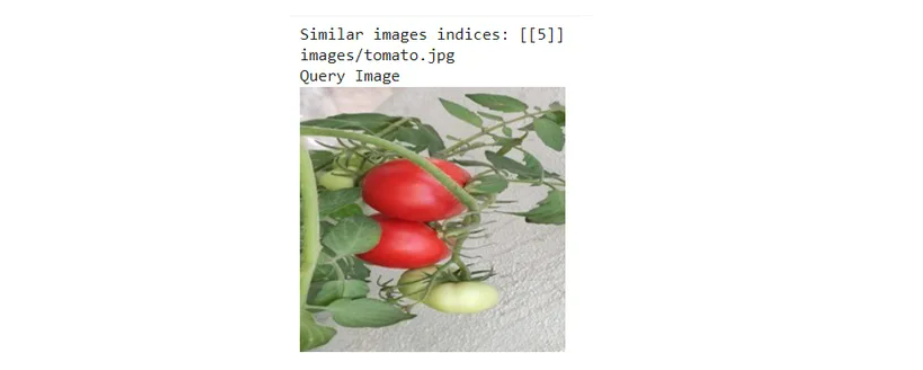

Print the indices and file paths of the same pictures.

print("Related pictures indices:", similar_images_indices)

for idx in similar_images_indices[0]:

print(image_paths[idx])Step 10: Show Pictures Utilizing cv2_imshow

Show the question picture and probably the most related pictures utilizing OpenCV’s cv2_imshow.

print("Question Picture")

cv2_imshow(query_image)

cv2.waitKey(0) # Watch for a key press to shut the picture

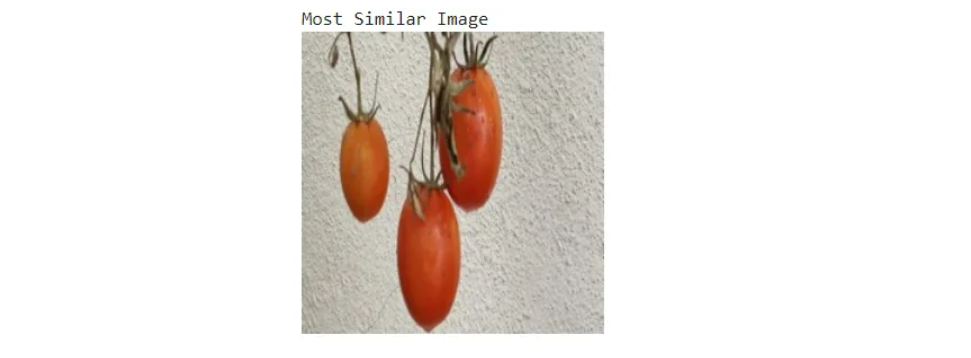

for idx in similar_images_indices[0]:

similar_image = cv2.imread(image_paths[idx])

print("Most Related Picture")

cv2_imshow(similar_image)

cv2.waitKey(0)

cv2.destroyAllWindows()

I’ve made use of Vegetable pictures dataset for code implementation an identical dataset may be discovered right here.

Challenges Confronted

- Storing excessive dimensional embeddings for numerous pictures consumes a considerable amount of reminiscence.

- Producing embeddings and performing similarity searches may be computationally intensive

- Variations in picture high quality, measurement and format can have an effect on the accuracy of embeddings.

- Creating and updating the FAISS index may be time-consuming incase of very giant datasets.

Conclusion

We explored the creation of a picture similarity search system by leveraging vector embeddings and FAISS (Fb AI Similarity Search). We started by understanding vector embeddings and their position in representing pictures as numerical vectors, facilitating environment friendly similarity searches. Utilizing the VGG16 mannequin, we computed picture embeddings by processing and resizing pictures to extract significant options. We then created a FAISS index to effectively handle and search by these embeddings.

Lastly, we demonstrated tips on how to question this index to search out related pictures, highlighting the benefits and challenges of working with high-dimensional information and similarity search strategies. This method underscores the ability of mixing deep studying fashions with superior indexing strategies to boost picture retrieval and comparability capabilities.

Key Takeaways

- Vector embeddings remodel complicated information like pictures into numerical vectors for environment friendly similarity searches.

- VGG16 mannequin extracts significant options from pictures, creating detailed embeddings for comparability.

- FAISS indexing accelerates similarity searches by effectively managing and querying giant units of picture embeddings.

- Picture similarity search leverages superior indexing strategies to rapidly determine and retrieve comparable pictures.

- Dealing with high-dimensional information includes challenges in reminiscence utilization and computational sources however is essential for efficient similarity searches.

Often Requested Questions

A. Vector embeddings are numerical representations of information components, comparable to pictures, textual content, or audio. They place related gadgets shut to one another in a high-dimensional area, enabling environment friendly similarity searches and comparisons by computer systems.

A. Vector embeddings simplify and speed up discovering and evaluating related gadgets in giant datasets. They permit for environment friendly computation of distances between information factors, making them very best for duties requiring fast similarity searches and contextual understanding.

A. FAISS, developed by Fb AI Analysis, effectively searches and clusters dense vectors. Designed for large-scale datasets, it rapidly retrieves related gadgets by creating and looking out by an index of vector embeddings.

A. FAISS operates by creating an index from the vector embeddings of your information. If you provide a brand new vector, FAISS searches the index to search out the closest vectors. It usually measures similarity utilizing Euclidean distance (L2).

The media proven on this article shouldn’t be owned by Analytics Vidhya and is used on the Creator’s discretion.