Since generative AI started to garner public curiosity, the pc imaginative and prescient analysis subject has deepened its curiosity in growing AI fashions able to understanding and replicating bodily legal guidelines; nonetheless, the problem of instructing machine studying methods to simulate phenomena corresponding to gravity and liquid dynamics has been a major focus of analysis efforts for not less than the previous 5 years.

Since latent diffusion fashions (LDMs) got here to dominate the generative AI scene in 2022, researchers have more and more targeted on LDM structure’s restricted capability to know and reproduce bodily phenomena. Now, this difficulty has gained further prominence with the landmark improvement of OpenAI’s generative video mannequin Sora, and the (arguably) extra consequential latest launch of the open supply video fashions Hunyuan Video and Wan 2.1.

Reflecting Badly

Most analysis aimed toward enhancing LDM understanding of physics has targeted on areas corresponding to gait simulation, particle physics, and different features of Newtonian movement. These areas have attracted consideration as a result of inaccuracies in fundamental bodily behaviors would instantly undermine the authenticity of AI-generated video.

Nonetheless, a small however rising strand of analysis concentrates on one in every of LDM’s largest weaknesses – it is relative lack of ability to supply correct reflections.

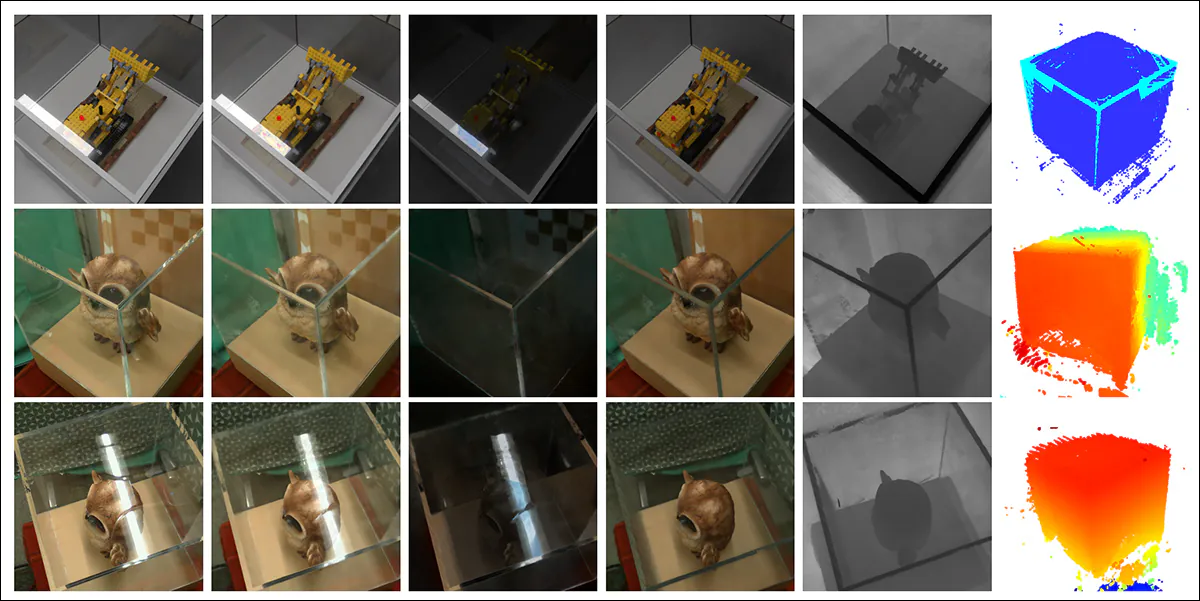

From the January 2025 paper ‘Reflecting Actuality: Enabling Diffusion Fashions to Produce Trustworthy Mirror Reflections’, examples of ‘reflection failure’ versus the researchers’ personal strategy. Supply: https://arxiv.org/pdf/2409.14677

This difficulty was additionally a problem in the course of the CGI period and stays so within the subject of video gaming, the place ray-tracing algorithms simulate the trail of sunshine because it interacts with surfaces. Ray-tracing calculates how digital gentle rays bounce off or go by way of objects to create lifelike reflections, refractions, and shadows.

Nonetheless, as a result of every further bounce enormously will increase computational value, real-time functions should commerce off latency towards accuracy by limiting the variety of allowed light-ray bounces.

![A representation of a virtually-calculated light-beam in a traditional 3D-based (i.e., CGI) scenario, using technologies and principles first developed in the 1960s, and which came to fulmination between 1982-93 (the span between Tron [1982] and Jurassic Park [1993]. Source: https://www.unrealengine.com/en-US/explainers/ray-tracing/what-is-real-time-ray-tracing](https://www.unite.ai/wp-content/uploads/2025/04/ray-tracing.jpg)

A illustration of a virtually-calculated light-beam in a standard 3D-based (i.e., CGI) state of affairs, utilizing applied sciences and ideas first developed within the Sixties, and which got here to fulmination between 1982-93 (the span between ‘Tron’ [1982] and ‘Jurassic Park’ [1993]. Supply: https://www.unrealengine.com/en-US/explainers/ray-tracing/what-is-real-time-ray-tracing

For example, depicting a chrome teapot in entrance of a mirror might contain a ray-tracing course of the place gentle rays bounce repeatedly between reflective surfaces, creating an nearly infinite loop with little sensible profit to the ultimate picture. Usually, a mirrored image depth of two to 3 bounces already exceeds what the viewer can understand. A single bounce would end in a black mirror, because the gentle should full not less than two journeys to kind a visual reflection.

Every further bounce sharply will increase computational value, typically doubling render instances, making quicker dealing with of reflections one of the vital important alternatives for enhancing ray-traced rendering high quality.

Naturally, reflections happen, and are important to photorealism, in far much less apparent eventualities – such because the reflective floor of a metropolis avenue or a battlefield after the rain; the reflection of the opposing avenue in a store window or glass doorway; or within the glasses of depicted characters, the place objects and environments could also be required to seem.

A simulated twin-reflection achieved by way of conventional compositing for an iconic scene in ‘The Matrix’ (1999).

Picture Issues

For that reason, frameworks that have been in style previous to the arrival of diffusion fashions, corresponding to Neural Radiance Fields (NeRF), and a few more moderen challengers corresponding to Gaussian Splatting have maintained their very own struggles to enact reflections in a pure means.

The REF2-NeRF undertaking (pictured under) proposed a NeRF-based modeling methodology for scenes containing a glass case. On this methodology, refraction and reflection have been modeled utilizing components that have been dependent and unbiased of the viewer’s perspective. This strategy allowed the researchers to estimate the surfaces the place refraction occurred, particularly glass surfaces, and enabled the separation and modeling of each direct and mirrored gentle elements.

Examples from the Ref2Nerf paper. Supply: https://arxiv.org/pdf/2311.17116

Different NeRF-facing reflection options of the final 4-5 years have included NeRFReN, Reflecting Actuality, and Meta’s 2024 Planar Reflection-Conscious Neural Radiance Fields undertaking.

For GSplat, papers corresponding to Mirror-3DGS, Reflective Gaussian Splatting, and RefGaussian have supplied options relating to the reflection downside, whereas the 2023 Nero undertaking proposed a bespoke methodology of incorporating reflective qualities into neural representations.

MirrorVerse

Getting a diffusion mannequin to respect reflection logic is arguably harder than with explicitly structural, non-semantic approaches corresponding to Gaussian Splatting and NeRF. In diffusion fashions, a rule of this sort is simply prone to develop into reliably embedded if the coaching information accommodates many various examples throughout a variety of eventualities, making it closely depending on the distribution and high quality of the unique dataset.

Historically, including explicit behaviors of this sort is the purview of a LoRA or the fine-tuning of the bottom mannequin; however these should not ultimate options, since a LoRA tends to skew output in direction of its personal coaching information, even with out prompting, whereas fine-tunes – apart from being costly – can fork a significant mannequin irrevocably away from the mainstream, and engender a number of associated customized instruments that may by no means work with any different pressure of the mannequin, together with the unique one.

Basically, enhancing diffusion fashions requires that the coaching information pay better consideration to the physics of reflection. Nonetheless, many different areas are additionally in want of comparable particular consideration. Within the context of hyperscale datasets, the place customized curation is dear and tough, addressing each single weak point on this means is impractical.

Nonetheless, options to the LDM reflection downside do crop up from time to time. One latest such effort, from India, is the MirrorVerse undertaking, which affords an improved dataset and coaching methodology able to enhancing of the state-of-the-art on this explicit problem in diffusion analysis.

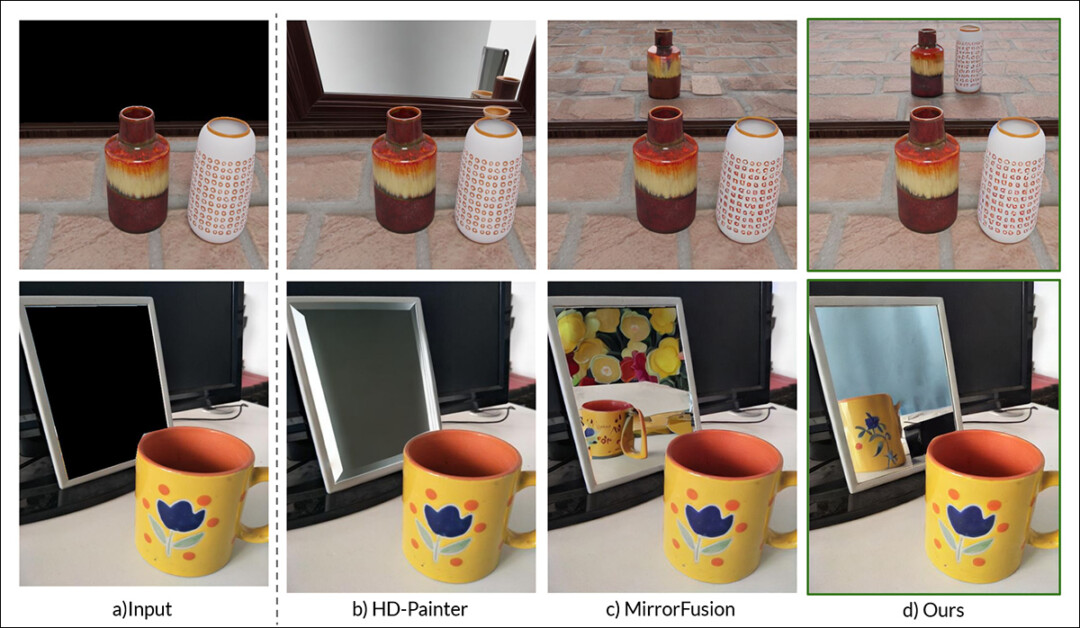

Rightmost, the outcomes from MirrorVerse pitted towards two prior approaches (central two columns). Supply: https://arxiv.org/pdf/2504.15397

As we will see within the instance above (the characteristic picture within the PDF of the brand new research), MirrorVerse improves on latest choices tackling the identical downside, however is way from excellent.

Within the higher proper picture, we see that the ceramic jars are considerably to the proper of the place they need to be, and within the picture under, which ought to technically not characteristic a mirrored image of the cup in any respect, an inaccurate reflection has been shoehorned into the proper–hand space, towards the logic of pure reflective angles.

Subsequently we’ll check out the brand new methodology not a lot as a result of it might characterize the present state-of-the-art in diffusion-based reflection, however equally for instance the extent to which this may occasionally show to be an intractable difficulty for latent diffusion fashions, static and video alike, because the requisite information examples of reflectivity are most definitely to be entangled with explicit actions and eventualities.

Subsequently this explicit perform of LDMs could proceed to fall in need of structure-specific approaches corresponding to NeRF, GSplat, and in addition conventional CGI.

The new paper is titled MirrorVerse: Pushing Diffusion Fashions to Realistically Replicate the World, and comes from three researchers throughout Imaginative and prescient and AI Lab, IISc Bangalore, and the Samsung R&D Institute at Bangalore. The paper has an related undertaking web page, in addition to a dataset at Hugging Face, with supply code launched at GitHub.

Methodology

The researchers observe from the outset the problem that fashions corresponding to Secure Diffusion and Flux have in respecting reflection-based prompts, illustrating the difficulty adroitly:

From the paper: Present state-of-the-art text-to-image fashions, SD3.5 and Flux, exhibiting important challenges in producing constant and geometrically correct reflections when prompted to generate them in a scene.

The researchers have developed MirrorFusion 2.0, a diffusion-based generative mannequin aimed toward enhancing the photorealism and geometric accuracy of mirror reflections in artificial imagery. Coaching for the mannequin was based mostly on the researchers’ personal newly-curated dataset, titled MirrorGen2, designed to deal with the generalization weaknesses noticed in earlier approaches.

MirrorGen2 expands on earlier methodologies by introducing random object positioning, randomized rotations, and express object grounding, with the purpose of guaranteeing that reflections stay believable throughout a wider vary of object poses and placements relative to the mirror floor.

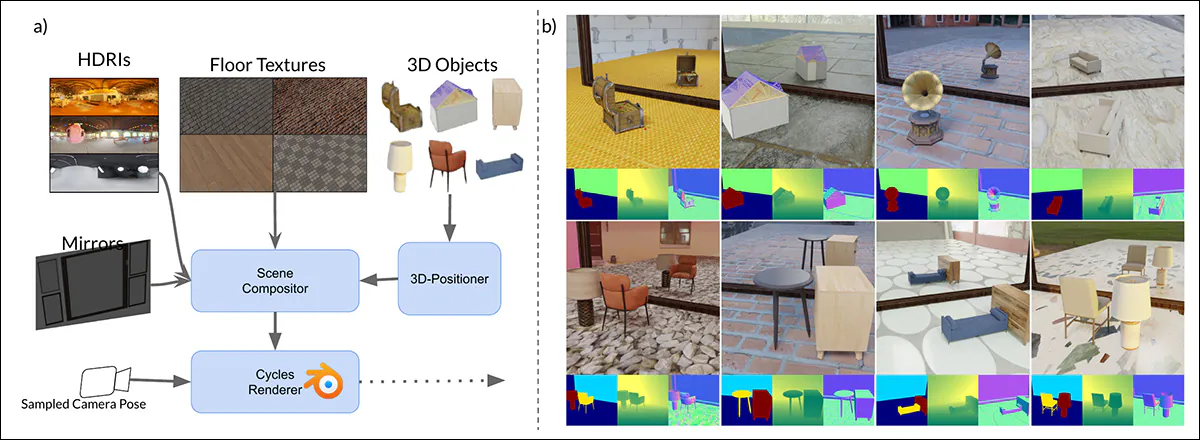

Schema for the era of artificial information in MirrorVerse: the dataset era pipeline utilized key augmentations by randomly positioning, rotating, and grounding objects throughout the scene utilizing the 3D-Positioner. Objects are additionally paired in semantically constant mixtures to simulate advanced spatial relationships and occlusions, permitting the dataset to seize extra lifelike interactions in multi-object scenes.

To additional strengthen the mannequin’s potential to deal with advanced spatial preparations, the MirrorGen2 pipeline incorporates paired object scenes, enabling the system to higher characterize occlusions and interactions between a number of components in reflective settings.

The paper states:

‘Classes are manually paired to make sure semantic coherence – as an example, pairing a chair with a desk. Throughout rendering, after positioning and rotating the first [object], an extra [object] from the paired class is sampled and organized to stop overlap, guaranteeing distinct spatial areas throughout the scene.’

In regard to express object grounding, right here the authors ensured that the generated objects have been ‘anchored’ to the bottom within the output artificial information, reasonably than ‘hovering’ inappropriately, which might happen when artificial information is generated at scale, or with extremely automated strategies.

Since dataset innovation is central to the novelty of the paper, we’ll proceed sooner than standard to this part of the protection.

Knowledge and Exams

SynMirrorV2

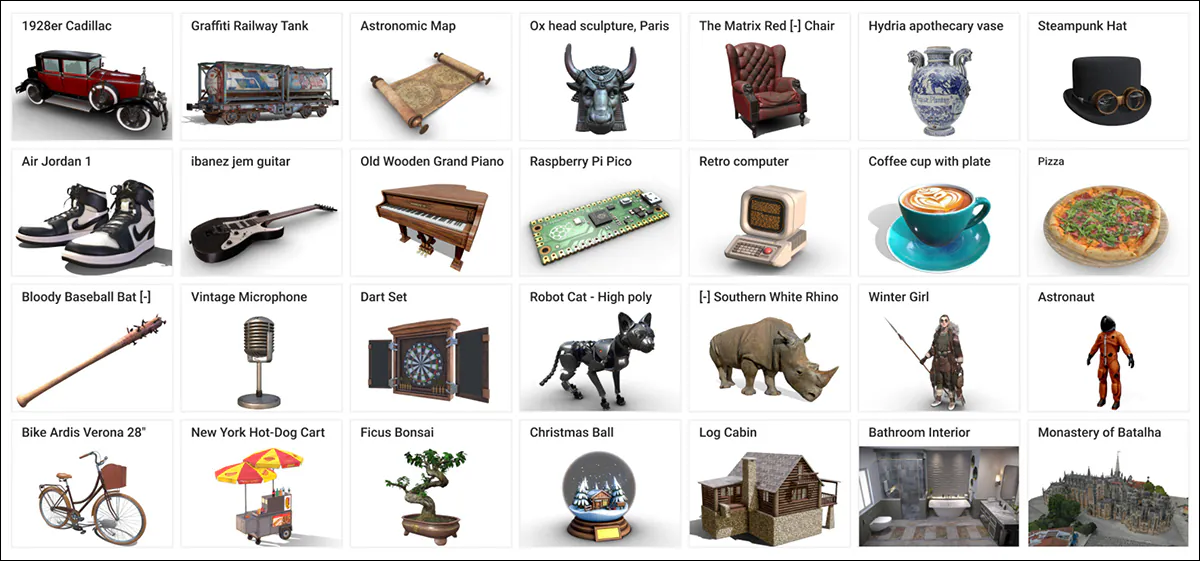

The researchers’ SynMirrorV2 dataset was conceived to enhance the variety and realism of mirror reflection coaching information, that includes 3D objects sourced from the Objaverse and Amazon Berkeley Objects (ABO) datasets, with these choices subsequently refined by way of OBJECT 3DIT, in addition to the filtering course of from the V1 MirrorFusion undertaking, to eradicate low-quality asset. This resulted in a refined pool of 66,062 objects.

Examples from the Objaverse dataset, used within the creation of the curated dataset for the brand new system. Supply: https://arxiv.org/pdf/2212.08051

Scene development concerned inserting these objects onto textured flooring from CC-Textures and HDRI backgrounds from the PolyHaven CGI repository, utilizing both full-wall or tall rectangular mirrors. Lighting was standardized with an area-light positioned above and behind the objects, at a forty-five diploma angle. Objects have been scaled to suit inside a unit dice and positioned utilizing a precomputed intersection of the mirror and digicam viewing frustums, guaranteeing visibility.

Randomized rotations have been utilized across the y-axis, and a grounding method used to stop ‘floating artifacts’.

To simulate extra advanced scenes, the dataset additionally included a number of objects organized in line with semantically coherent pairings based mostly on ABO classes. Secondary objects have been positioned to keep away from overlap, creating 3,140 multi-object scenes designed to seize various occlusions and depth relationships.

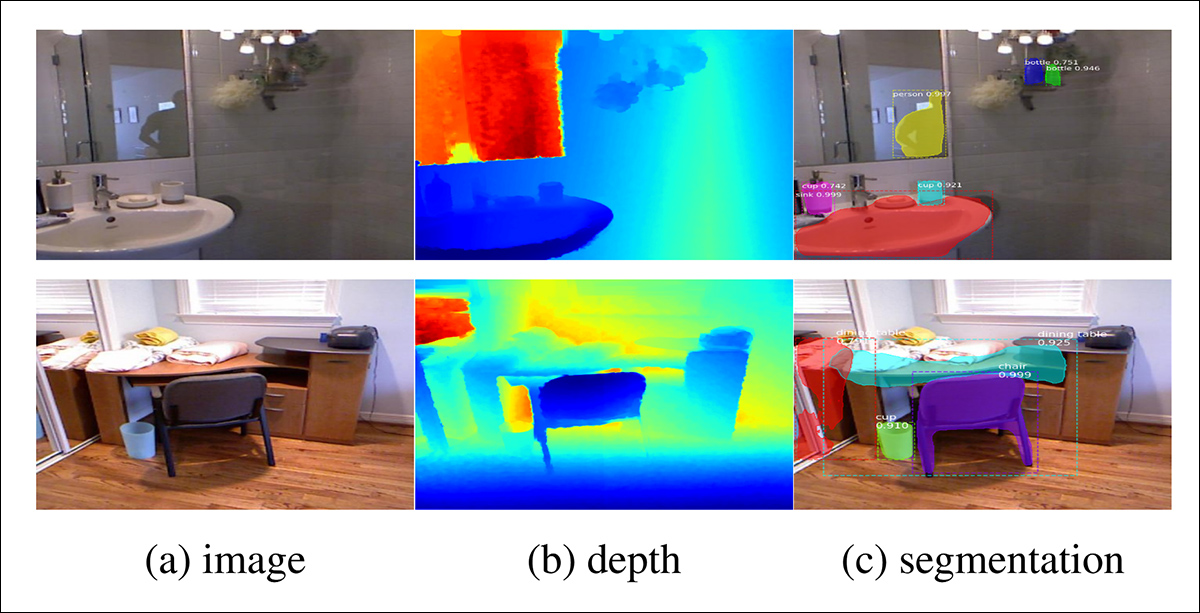

Examples of rendered views from the authors’ dataset containing a number of (greater than two) objects, with illustrations of object segmentation and depth map visualizations seen under.

Coaching Course of

Acknowledging that artificial realism alone was inadequate for strong generalization to real-world information, the researchers developed a three-stage curriculum studying course of for coaching MirrorFusion 2.0.

In Stage 1, the authors initialized the weights of each the conditioning and era branches with the Secure Diffusion v1.5 checkpoint, and fine-tuned the mannequin on the single-object coaching break up of the SynMirrorV2 dataset. Not like the above-mentioned Reflecting Actuality undertaking, the researchers didn’t freeze the era department. They then skilled the mannequin for 40,000 iterations.

In Stage 2, the mannequin was fine-tuned for an extra 10,000 iterations, on the multiple-object coaching break up of SynMirrorV2, so as to educate the system to deal with occlusions, and the extra advanced spatial preparations present in lifelike scenes.

Lastly, In Stage 3, an extra 10,000 iterations of finetuning have been performed utilizing real-world information from the MSD dataset, utilizing depth maps generated by the Matterport3D monocular depth estimator.

Examples from the MSD dataset, with real-world scenes analyzed into depth and segmentation maps. Supply: https://arxiv.org/pdf/1908.09101

Throughout coaching, textual content prompts have been omitted for 20 p.c of the coaching time so as to encourage the mannequin to make optimum use of the out there depth info (i.e., a ‘masked’ strategy).

Coaching befell on 4 NVIDIA A100 GPUs for all levels (the VRAM spec shouldn’t be equipped, although it might have been 40GB or 80GB per card). A studying price of 1e-5 was used on a batch measurement of 4 per GPU, underneath the AdamW optimizer.

This coaching scheme progressively elevated the problem of duties introduced to the mannequin, starting with easier artificial scenes and advancing towards more difficult compositions, with the intention of growing strong real-world transferability.

Testing

The authors evaluated MirrorFusion 2.0 towards the earlier state-of-the-art, MirrorFusion, which served because the baseline, and performed experiments on the MirrorBenchV2 dataset, protecting each single and multi-object scenes.

Further qualitative assessments have been performed on samples from the MSD dataset, and the Google Scanned Objects (GSO) dataset.

The analysis used 2,991 single-object pictures from seen and unseen classes, and 300 two-object scenes from ABO. Efficiency was measured utilizing Peak Sign-to-Noise Ratio (PSNR); Structural Similarity Index (SSIM); and Realized Perceptual Picture Patch Similarity (LPIPS) scores, to evaluate reflection high quality on the masked mirror area. CLIP similarity was used to guage textual alignment with the enter prompts.

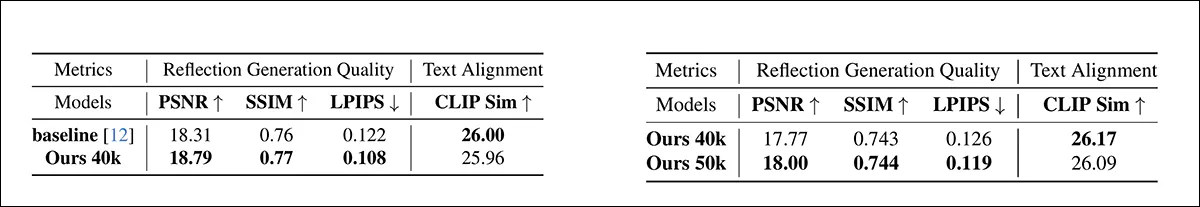

In quantitative assessments, the authors generated pictures utilizing 4 seeds for a selected immediate, and choosing the ensuing picture with one of the best SSIM rating. The 2 reported tables of outcomes for the quantitative assessments are proven under.

Left, Quantitative outcomes for single object reflection era high quality on the MirrorBenchV2 single object break up. MirrorFusion 2.0 outperformed the baseline, with one of the best outcomes proven in daring. Proper, quantitative outcomes for a number of object reflection era high quality on the MirrorBenchV2 a number of object break up. MirrorFusion 2.0 skilled with a number of objects outperformed the model skilled with out them, with one of the best outcomes proven in daring.

The authors remark:

‘[The results] present that our methodology outperforms the baseline methodology and finetuning on a number of objects improves the outcomes on advanced scenes.’

The majority of outcomes, and people emphasised by the authors, regard qualitative testing. As a result of dimensions of those illustrations, we will solely partially reproduce the paper’s examples.

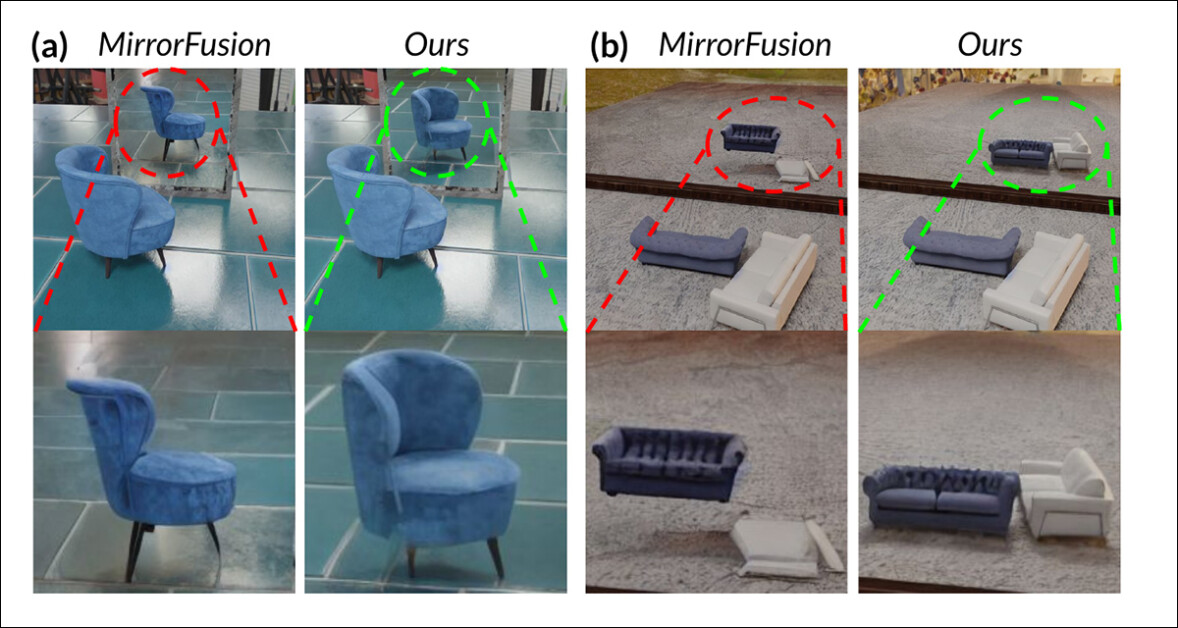

Comparability on MirrorBenchV2: the baseline failed to keep up correct reflections and spatial consistency, exhibiting incorrect chair orientation and distorted reflections of a number of objects, whereas (the authors contend) MirrorFusion 2.0 appropriately renders the chair and the sofas, with correct place, orientation, and construction.

Of those subjective outcomes, the researchers opine that the baseline mannequin did not precisely render object orientation and spatial relationships in reflections, typically producing artifacts corresponding to incorrect rotation and floating objects. MirrorFusion 2.0, skilled on SynMirrorV2, the authors contend, preserves right object orientation and positioning in each single-object and multi-object scenes, leading to extra lifelike and coherent reflections.

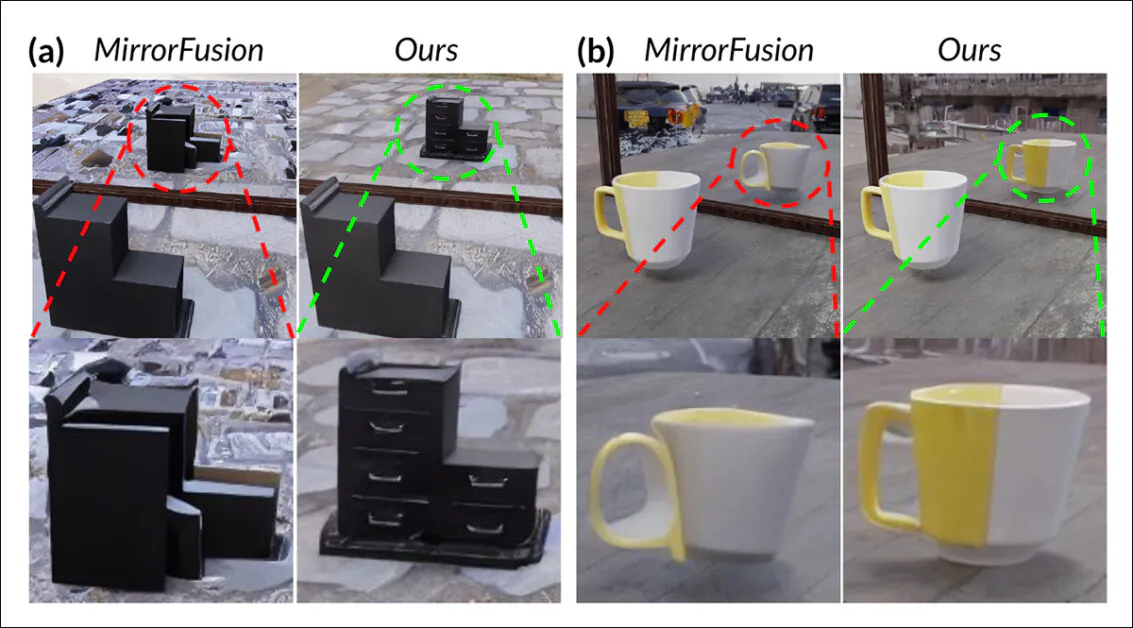

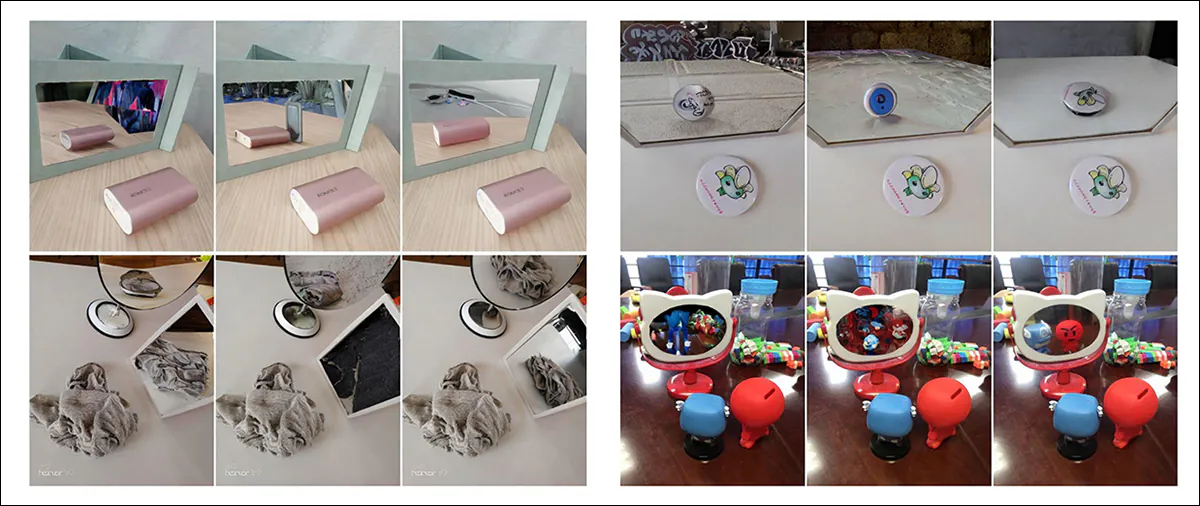

Beneath we see qualitative outcomes on the aforementioned GSO dataset:

Comparability on the GSO dataset. The baseline misrepresents object construction and produced incomplete, distorted reflections, whereas MirrorFusion 2.0, the authors contend, preserves spatial integrity and generates correct geometry, colour, and element, even on out-of-distribution objects.

Right here the authors remark:

‘MirrorFusion 2.0 generates considerably extra correct and lifelike reflections. For example, in Fig. 5 (a – above), MirrorFusion 2.0 appropriately displays the drawer handles (highlighted in inexperienced), whereas the baseline mannequin produces an implausible reflection (highlighted in purple).

‘Likewise, for the “White-Yellow mug” in Fig. 5 (b), MirrorFusion 2.0 delivers a convincing geometry with minimal artifacts, not like the baseline, which fails to precisely seize the thing’s geometry and look.’

The ultimate qualitative take a look at was towards the aforementioned real-world MSD dataset (partial outcomes proven under):

Actual-world scene outcomes evaluating MirrorFusion, MirrorFusion 2.0, and MirrorFusion 2.0, fine-tuned on the MSD dataset. MirrorFusion 2.0, the authors contend, captures advanced scene particulars extra precisely, together with cluttered objects on a desk, and the presence of a number of mirrors inside a three-dimensional setting. Solely partial outcomes are proven right here, because of the dimensions of the leads to the unique paper, to which we refer the reader for full outcomes and higher decision.

Right here the authors observe that whereas MirrorFusion 2.0 carried out effectively on MirrorBenchV2 and GSO information, it initially struggled with advanced real-world scenes within the MSD dataset. Wonderful-tuning the mannequin on a subset of MSD improved its potential to deal with cluttered environments and a number of mirrors, leading to extra coherent and detailed reflections on the held-out take a look at break up.

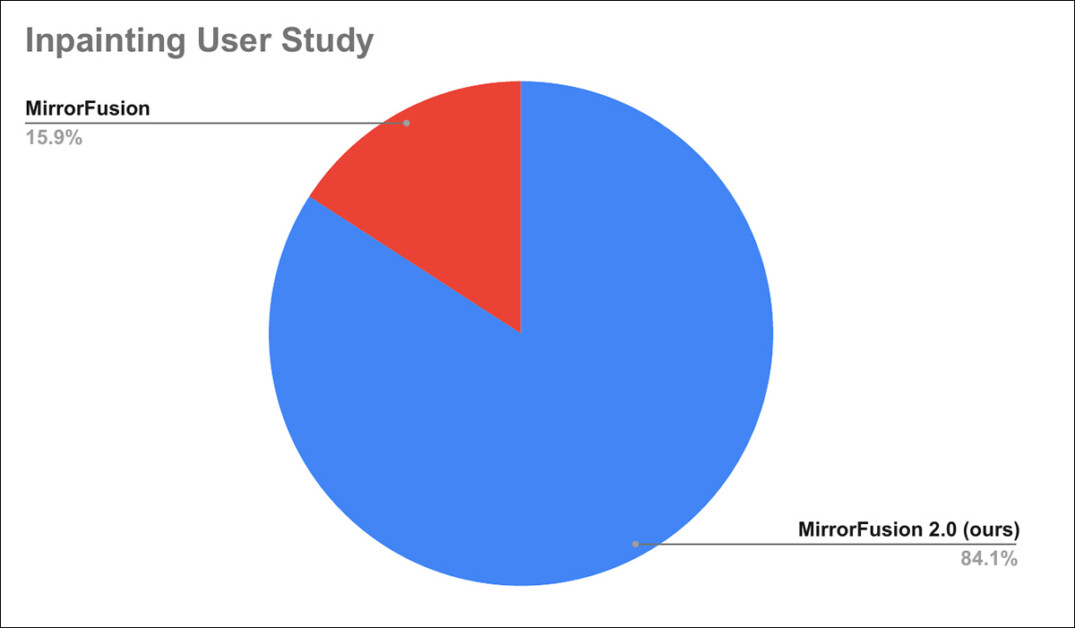

Moreover, a consumer research was performed, the place 84% of customers are reported to have most well-liked generations from MirrorFusion 2.0 over the baseline methodology.

Outcomes of the consumer research.

Since particulars of the consumer research have been relegated to the appendix of the paper, we refer the reader to that for the specifics of the research.

Conclusion

Though a number of of the outcomes proven within the paper are spectacular enhancements on the state-of-the-art, the state-of-the-art for this explicit pursuit is so abysmal that even an unconvincing combination resolution can win out with a modicum of effort. The basic structure of a diffusion mannequin is so inimical to the dependable studying and demonstration of constant physics, that the issue itself is really posed, and never apparently not disposed towards a chic resolution.

Additional, including information to current fashions is already the usual methodology of remedying shortfalls in LDM efficiency, with all of the disadvantages listed earlier. It’s affordable to imagine that if future high-scale datasets have been to pay extra consideration to the distribution (and annotation) of reflection-related information factors, we might count on that the ensuing fashions would deal with this state of affairs higher.

But the identical is true of a number of different bugbears in LDM output – who can say which ones most deserves the trouble and cash concerned within the form of resolution that the authors of the brand new paper suggest right here?

First revealed Monday, April 28, 2025