Introducing Hunyuan3D-1.0, a game-changer in the world of 3D asset creation. Imagine generating high-quality 3D models in about 10 seconds, with no more long waits or cumbersome processes. This innovative tool combines cutting-edge AI and a two-stage framework to create realistic, multi-view images before transforming them into precise, high-fidelity 3D assets. Whether you’re a game developer, product designer, or digital artist, Hunyuan3D-1.0 empowers you to speed up your workflow without compromising on quality. Explore how this technology can reshape your creative process and take your projects to the next level. The future of 3D asset generation is here, and it’s faster, smarter, and more efficient than ever before.

Learning Objectives

- Learn how Hunyuan3D-1.0 simplifies 3D modeling by generating high-quality assets in about 10 seconds.

- Explore the two-stage approach of Hunyuan3D-1.0.

- Discover how advanced AI-driven processes like adaptive guidance and super-resolution improve both speed and quality in 3D modeling.

- Discover the diverse use cases of this technology, including gaming, e-commerce, healthcare, and more.

- Understand how Hunyuan3D-1.0 opens up 3D asset creation to a broader audience, making it faster, cost-effective, and scalable for businesses.

This article was published as a part of the Data Science Blogathon.

Features of Hunyuan3D-1.0

The uniqueness of Hunyuan3D-1.0 lies in its approach to creating 3D models, combining advanced AI technology with a streamlined, two-stage process. Unlike traditional methods, which require hours of manual work and complex modeling software, this system automates the creation of high-quality 3D assets from scratch in about 10 seconds. It achieves this by first generating multi-view 2D images of a product or object using a diffusion model. These images are then transformed into detailed, realistic 3D models with an impressive level of fidelity.

What makes this approach truly innovative is its ability to significantly reduce the time and skill required for 3D modeling, which is typically a labor-intensive and technical process. By simplifying this into an easy-to-use system, it opens up 3D asset creation to a broader audience, including game developers, digital artists, and designers who may not have specialized expertise in 3D modeling. The system’s capacity to generate models quickly, efficiently, and accurately not only accelerates the creative process but also allows businesses to scale their projects and reduce costs.

In addition, it doesn’t just save time; it also delivers high-quality outputs. The AI-driven technology ensures that each 3D model retains important visual and structural details, making the models well suited to real-time applications like gaming or virtual simulations. This approach represents a leap forward in the integration of AI and 3D modeling, providing a solution that is fast, reliable, and accessible to a wide range of industries.

How Hunyuan3D-1.0 Works

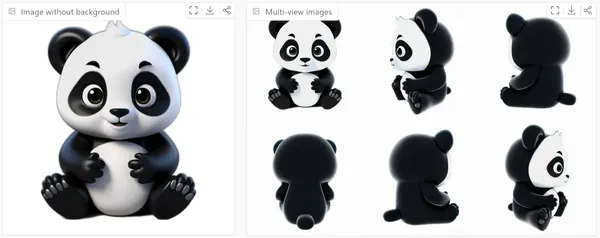

In this section, we discuss the two main stages of Hunyuan3D-1.0: a multi-view diffusion model for 2D-to-3D lifting and a sparse-view reconstruction model.

Let’s break down these methods to understand how they work together to create high-quality 3D models from 2D images.

Multi-view Diffusion Model

This method builds on the success of diffusion models in generating 2D images and extends it to generate multi-view images of a 3D object.

- The multi-view images are generated simultaneously by organizing them in a grid.

- By scaling up the Zero-1-to-3++ model, this approach produces a 3× larger model.

- The model uses a technique called “reference attention.” This technique guides the diffusion model to produce images with textures similar to a reference image.

- This involves adding an extra condition image during the denoising process to ensure consistency across generated images.

- The model renders the images at specific angles (elevation of 0° and multiple azimuths) with a white background.
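The grid layout mentioned in the first bullet can be illustrated with a small sketch. It assumes six views tiled in a 3-row by 2-column grid and represents images as nested lists of pixel values; the layout, the tile size, and the function names `make_grid` and `split_grid` are illustrative assumptions, not details taken from the Hunyuan3D-1.0 code.

```python
def make_grid(tiles, rows, cols):
    """Tile equally sized images (lists of pixel rows) in row-major order."""
    if len(tiles) != rows * cols:
        raise ValueError("number of tiles must equal rows * cols")
    grid = []
    for r in range(rows):
        row_tiles = tiles[r * cols:(r + 1) * cols]
        for y in range(len(row_tiles[0])):
            line = []
            for tile in row_tiles:
                line.extend(tile[y])
            grid.append(line)
    return grid


def split_grid(grid, rows, cols):
    """Inverse of make_grid: cut one grid image back into rows * cols views."""
    height, width = len(grid), len(grid[0])
    if height % rows or width % cols:
        raise ValueError("grid size must be divisible by the tile layout")
    tile_h, tile_w = height // rows, width // cols
    tiles = []
    for r in range(rows):
        for c in range(cols):
            tiles.append([row[c * tile_w:(c + 1) * tile_w]
                          for row in grid[r * tile_h:(r + 1) * tile_h]])
    return tiles


# Toy example: six 2x2 "views", each filled with its own view index.
views = [[[i, i], [i, i]] for i in range(6)]
grid = make_grid(views, rows=3, cols=2)
recovered = split_grid(grid, rows=3, cols=2)
```

Because all views live in one image, a single denoising pass produces them together, and the individual views can be cut back out afterwards for the reconstruction stage.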

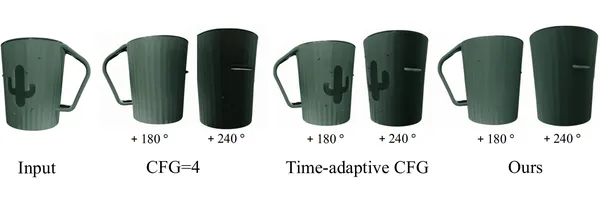

Adaptive Classifier-free Guidance (CFG)

- In multi-view generation, a small CFG scale enhances texture detail but introduces unacceptable artifacts, while a large CFG scale improves object geometry at the cost of texture quality.

- The effect of the CFG scale varies by view; higher scales preserve more details for front views but may lead to darker back views.

- To address this, the model uses an adaptive CFG that adjusts the CFG scale for different views and time steps.

- Intuitively, a higher CFG scale is set for front views and at early denoising time steps; it is then decreased as the denoising process progresses and as the view of the generated image diverges from the condition image.

- This dynamic adjustment improves both the texture quality and the geometry of the generated models.

- Thus, a more balanced and high-quality multi-view generation is achieved.
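The idea behind the adaptive scale can be sketched in a few lines. The article does not give the actual schedule, so the linear decay, the `max_scale` and `min_scale` values, and the function name `adaptive_cfg_scale` below are hypothetical; only the guidance combination in `apply_cfg` is the standard classifier-free guidance formula.

```python
def adaptive_cfg_scale(step, num_steps, azimuth_deg, max_scale=7.0, min_scale=2.0):
    """Hypothetical schedule: largest for the front view at the first step,
    shrinking with denoising progress and with distance from the front view."""
    progress = step / max(num_steps - 1, 1)                # 0.0 at start, 1.0 at end
    angle = abs((azimuth_deg + 180.0) % 360.0 - 180.0)     # 0..180 degrees from front
    view_distance = angle / 180.0                          # 0.0 front, 1.0 back
    weight = (1.0 - progress) * (1.0 - view_distance)
    return min_scale + (max_scale - min_scale) * weight


def apply_cfg(eps_uncond, eps_cond, scale):
    """Standard classifier-free guidance on per-element noise predictions."""
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Scales at the first denoising step for six assumed azimuths (60-degree spacing).
first_step_scales = {az: adaptive_cfg_scale(0, 50, az) for az in range(0, 360, 60)}
```

With this toy schedule the front view starts at the maximum scale, the back view stays at the minimum, and every view converges to the minimum by the final step, which mirrors the qualitative behaviour described in the bullets above.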

Sparse-view Reconstruction Model

This model turns the generated multi-view images into detailed 3D reconstructions using a transformer-based approach. The key to this method is speed and quality, allowing the reconstruction process to happen in less than 2 seconds.

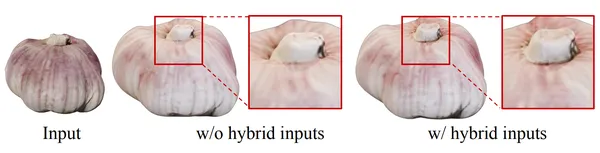

Hybrid Inputs

- The reconstruction model uses both calibrated and uncalibrated (user-provided) images for accurate 3D reconstruction.

- Calibrated images guide the model’s understanding of the object’s structure, while uncalibrated images fill in gaps, especially for views that are hard to capture with standard camera angles (like top or bottom views).

Super-resolution

- One challenge with 3D reconstruction is that low-resolution images often result in poor-quality models.

- To solve this, the model uses a “super-resolution module”.

- This module increases the resolution of triplanes (3D data planes), improving the detail in the final 3D model.

- By avoiding complex self-attention on high-resolution data, the model maintains efficiency while achieving clearer details.
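One way to raise triplane resolution without attention is to expand every low-resolution token independently through a shared linear layer. The sketch below illustrates that idea on a single plane; the per-token linear expansion, the weight layout, and the name `upsample_plane` are assumptions made for illustration and are not taken from the Hunyuan3D-1.0 code.

```python
def upsample_plane(plane, weight, factor):
    """Expand each token of an H x W plane into a factor x factor block.

    plane:  H x W grid of feature vectors (each a list of C floats)
    weight: (factor * factor * C_out) x C matrix shared by every token
    Returns an (H * factor) x (W * factor) grid of C_out-dimensional vectors.
    """
    h, w = len(plane), len(plane[0])
    c_out = len(weight) // (factor * factor)
    out = [[None] * (w * factor) for _ in range(h * factor)]
    for y in range(h):
        for x in range(w):
            token = plane[y][x]
            # One matrix-vector product per token; tokens never interact.
            expanded = [sum(wi * ti for wi, ti in zip(row, token)) for row in weight]
            for dy in range(factor):
                for dx in range(factor):
                    start = (dy * factor + dx) * c_out
                    out[y * factor + dy][x * factor + dx] = expanded[start:start + c_out]
    return out


# Toy example: a 2x2 plane with 1-channel tokens, upsampled 2x to 4x4.
low_res = [[[1.0], [2.0]], [[3.0], [4.0]]]
shared_weight = [[1.0], [2.0], [3.0], [4.0]]
high_res = upsample_plane(low_res, shared_weight, factor=2)
```

Since each token is processed on its own, the cost grows linearly with the number of tokens, whereas self-attention over the high-resolution tokens would grow quadratically.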

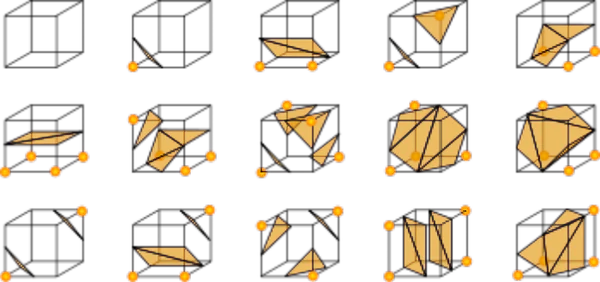

3D Representation

- Instead of relying solely on implicit 3D representations (e.g., NeRF or Gaussian Splatting), this model uses a combination of implicit and explicit representations.

- It uses NeuS with a Signed Distance Function (SDF) to model the shape and then converts it into explicit meshes with the Marching Cubes algorithm.

- These meshes can be used directly for texture mapping, preparing the final 3D outputs for artistic refinements and real-world applications.
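To make the SDF-to-mesh step more concrete, the sketch below shows the core operation that Marching Cubes performs on every grid edge: detecting a sign change in the SDF and linearly interpolating where the surface crosses. An analytic sphere stands in for the learned SDF, only x-aligned edges are scanned, and the triangle lookup table of the full algorithm is omitted, so this is an illustration rather than a mesh extractor. In practice a library routine such as scikit-image's `marching_cubes` would normally be used.

```python
import math


def sphere_sdf(x, y, z, radius=1.0):
    """Signed distance to a sphere: negative inside, positive outside."""
    return math.sqrt(x * x + y * y + z * z) - radius


def edge_crossing(p0, p1, d0, d1):
    """Linearly interpolate the zero crossing between two grid points."""
    t = d0 / (d0 - d1)
    return tuple(a + t * (b - a) for a, b in zip(p0, p1))


def surface_points_along_x(sdf, n=8, lo=-1.5, hi=1.5):
    """Sample the SDF on an n^3 grid and return crossings on x-aligned edges."""
    step = (hi - lo) / (n - 1)
    coords = [lo + i * step for i in range(n)]
    points = []
    for z in coords:
        for y in coords:
            for i in range(n - 1):
                p0, p1 = (coords[i], y, z), (coords[i + 1], y, z)
                d0, d1 = sdf(*p0), sdf(*p1)
                if (d0 < 0) != (d1 < 0):      # surface passes through this edge
                    points.append(edge_crossing(p0, p1, d0, d1))
    return points


points = surface_points_along_x(sphere_sdf)
```

Every returned point lies close to the unit sphere; the full algorithm connects such crossings into triangles cell by cell, which yields the explicit mesh used for texture mapping.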

Getting Began with Hunyuan3D-1.0

Clone the repository.

git clone https://github.com/tencent/Hunyuan3D-1

cd Hunyuan3D-1

Installation Guide for Linux

The ‘env_install.sh’ script file is used for setting up the environment.

# step 1. create conda env

conda create -n hunyuan3d-1 python=3.9  # or 3.10, 3.11, 3.12

conda activate hunyuan3d-1

# step 2. install torch related packages

which pip # check that pip corresponds to python

# modify the cuda version according to your machine (recommended)

pip install torch torchvision --index-url https://download.pytorch.org/whl/cu121

# step 3. install other packages

bash env_install.sh

Optionally, ‘xformers’ or ‘flash_attn’ can be installed to accelerate computation.

pip install xformers --index-url https://download.pytorch.org/whl/cu121

pip install flash_attn

Most environment errors are caused by a mismatch between machine and packages. The version can be manually specified, as shown in the following successful case:

# python3.9

pip install torch==2.0.1 torchvision==0.15.2 --index-url https://download.pytorch.org/whl/cu118

When installing pytorch3d, the gcc version should preferably be greater than 9, and the GPU driver should not be too old.
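Since version mismatches are the usual source of trouble, a small pre-flight check can help. The sketch below derives the PyTorch wheel index URL from a CUDA version string, following the `cu118` and `cu121` pattern used in the commands above, and checks the Python version against the ones listed in step 1. The helper names are hypothetical, and PyTorch only publishes wheels for specific CUDA versions, so the generated URL is not guaranteed to exist for every input.

```python
import sys

# Python versions listed in the installation steps above.
SUPPORTED_PYTHON = {(3, 9), (3, 10), (3, 11), (3, 12)}


def torch_index_url(cuda_version):
    """Map a CUDA version such as '12.1' to the matching wheel index URL."""
    major, minor = cuda_version.split(".")[:2]
    return f"https://download.pytorch.org/whl/cu{major}{minor}"


def python_supported(version_info=sys.version_info):
    """Return True if the interpreter version is one of the listed ones."""
    return (version_info[0], version_info[1]) in SUPPORTED_PYTHON


if not python_supported():
    print("Warning: this Python version is not in the list from step 1.")
print("Example index URL:", torch_index_url("12.1"))
```

The resulting URL can be passed to `pip install torch torchvision --index-url ...` in place of the hard-coded one.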

Download Pretrained Models

The models are available at https://huggingface.co/tencent/Hunyuan3D-1:

- Hunyuan3D-1/lite: lite model for multi-view generation.

- Hunyuan3D-1/std: standard model for multi-view generation.

- Hunyuan3D-1/svrm: sparse-view reconstruction model.

To download the models, first install ‘huggingface-cli’. (Detailed instructions are available in the Hugging Face Hub documentation.)

python3 -m pip install "huggingface_hub[cli]"

Then download the models using the following commands:

mkdir weights

huggingface-cli download tencent/Hunyuan3D-1 --local-dir ./weights

mkdir weights/hunyuanDiT

huggingface-cli download Tencent-Hunyuan/HunyuanDiT-v1.1-Diffusers-Distilled --local-dir ./weights/hunyuanDiT

Inference

For text-to-3D generation, both Chinese and English prompts are supported:

python3 main.py \
    --text_prompt "a lovely rabbit" \
    --save_folder ./outputs/test/ \
    --max_faces_num 90000 \
    --do_texture_mapping \
    --do_render

For image-to-3D generation:

python3 main.py \
    --image_prompt "/path/to/your/image" \
    --save_folder ./outputs/test/ \
    --max_faces_num 90000 \
    --do_texture_mapping \
    --do_render

Using Gradio

The two versions of multi-view generation, std and lite, can be run as follows:

# std

python3 app.py

python3 app.py --save_memory

# lite

python3 app.py --use_lite

python3 app.py --use_lite --save_memory

The demo can then be accessed at http://0.0.0.0:8080. Note that 0.0.0.0 should be replaced with your server’s IP address.
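For scripted or batch runs without the Gradio UI, the `main.py` invocations from the Inference section can be assembled programmatically. The sketch below only uses flags that appear in this article; the helper name `build_main_args` and its defaults are illustrative assumptions, and the command is printed rather than executed.

```python
import shlex


def build_main_args(save_folder, text_prompt=None, image_prompt=None,
                    max_faces_num=90000, texture_mapping=True, render=True):
    """Build the argument list for main.py from either a text or an image prompt."""
    if (text_prompt is None) == (image_prompt is None):
        raise ValueError("provide exactly one of text_prompt or image_prompt")
    args = ["python3", "main.py"]
    if text_prompt is not None:
        args += ["--text_prompt", text_prompt]
    else:
        args += ["--image_prompt", image_prompt]
    args += ["--save_folder", save_folder, "--max_faces_num", str(max_faces_num)]
    if texture_mapping:
        args.append("--do_texture_mapping")
    if render:
        args.append("--do_render")
    return args


cmd = build_main_args("./outputs/test/", text_prompt="a lovely rabbit")
print(shlex.join(cmd))  # shell-quoted form, ready to copy into a terminal
```

To actually launch the job, the list can be handed to `subprocess.run(cmd, check=True)` from the repository root. Passing a list instead of a single string avoids shell-quoting problems with prompts that contain spaces.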

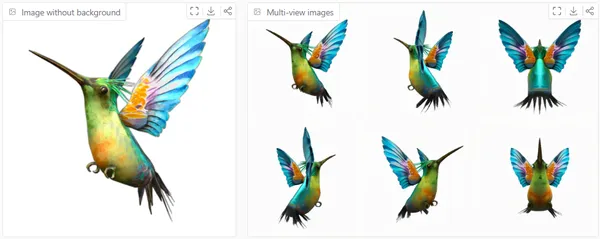

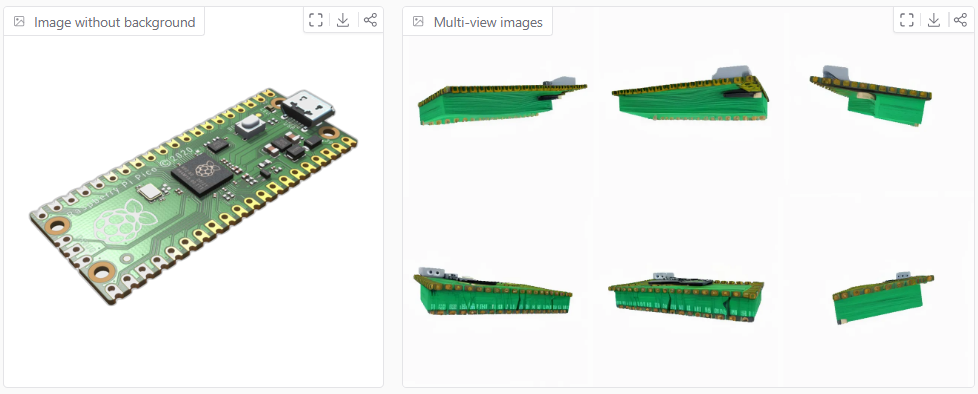

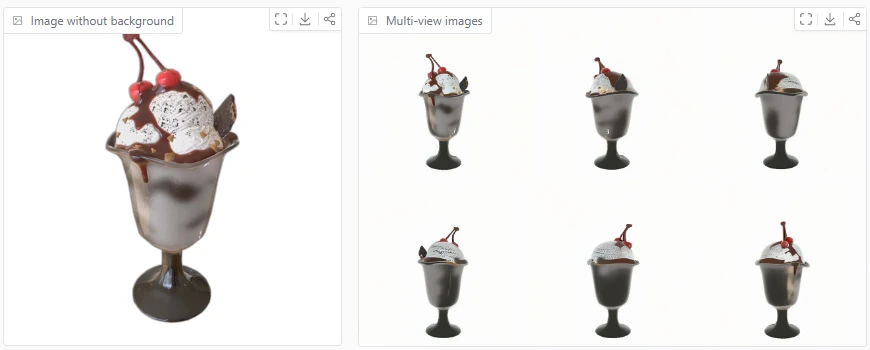

Examples of Generated Models

Generated using the Hugging Face Space: https://huggingface.co/spaces/tencent/Hunyuan3D-1

Example 1: Hummingbird

Example 2: Raspberry Pi Pico

Example 3: Sundae

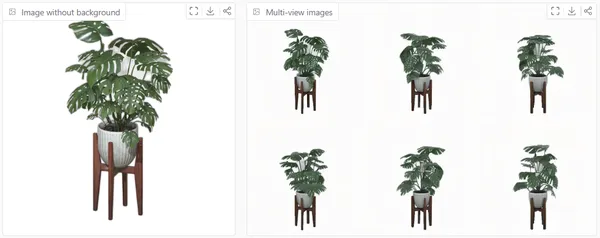

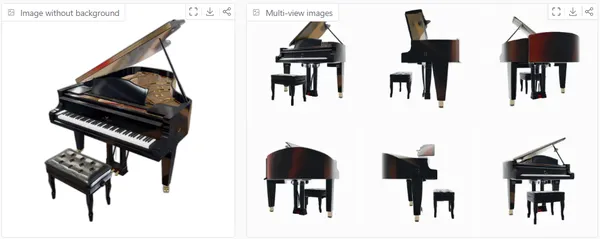

Example 4: Monstera deliciosa

Example 5: Grand Piano

Pros and Challenges of Hunyuan3D-1.0

Pros

- High-quality 3D Outputs: Generates detailed and accurate 3D models from minimal inputs.

- Speed: Delivers reconstructions in seconds.

- Versatility: Adapts to both calibrated and uncalibrated data for diverse applications.

Challenges

- Sparse-view Limitations: Struggles with uncertainties in the top and bottom views due to limited input views.

- Complexity in Resolution Scaling: Increasing triplane resolution adds computational challenges despite optimizations.

- Dependence on Large Datasets: Requires extensive data and training resources for high-quality outputs.

Real-World Applications

- Game Development: Create detailed 3D assets for immersive gaming environments.

- E-Commerce: Generate realistic 3D product previews for online shopping.

- Virtual Reality: Build accurate 3D scenes for VR experiences.

- Healthcare: Visualize 3D anatomical models for medical training and diagnostics.

- Architectural Design: Render lifelike 3D layouts for planning and presentations.

- Film and Animation: Produce hyper-realistic visuals and CGI for movies and animated productions.

- Personalized Avatars: Create custom, lifelike avatars for social media, virtual meetings, or the metaverse.

- Industrial Prototyping: Streamline product design and testing with accurate 3D prototypes.

- Education and Training: Provide immersive 3D learning experiences for subjects like biology, engineering, or geography.

- Virtual Home Tours: Enhance real estate listings with interactive 3D property walkthroughs for potential buyers.

Conclusion

Hunyuan3D-1.0 represents a significant leap forward in the realm of 3D reconstruction, offering a fast, efficient, and highly accurate solution for generating detailed 3D models from sparse inputs. By combining the power of multi-view diffusion, adaptive guidance, and sparse-view reconstruction, this innovative approach pushes the boundaries of what’s possible in near-real-time 3D generation. The ability to integrate both calibrated and uncalibrated images, coupled with super-resolution and explicit 3D representations, opens up exciting possibilities for a wide range of applications, from gaming and design to virtual reality. Hunyuan3D-1.0 balances geometric accuracy and texture detail, benefiting industries that rely on 3D modeling and enhancing user experiences across various domains.

Moreover, it allows for continuous improvement and customization, adapting to new trends in design and user needs. This level of flexibility helps it remain at the forefront of 3D modeling technology, offering businesses a competitive edge in an ever-evolving digital landscape. It’s more than just a tool; it’s a catalyst for innovation.

Key Takeaways

- The Hunyuan3D-1.0 method efficiently generates 3D models in about 10 seconds using multi-view images and sparse-view reconstruction, making it well suited to practical applications.

- The adaptive CFG scale improves both the geometry and texture of generated 3D models, ensuring high-quality results for different views.

- The combination of calibrated and uncalibrated inputs, along with a super-resolution approach, ensures more accurate and detailed 3D shapes, addressing challenges faced by previous methods.

- By converting implicit shapes into explicit meshes, the model delivers 3D models that are ready for real-world use, allowing for further artistic refinement.

- The two-stage approach of Hunyuan3D-1.0 ensures that complex 3D model creation is not only faster but also more accessible, making it a powerful tool for industries that rely on high-quality 3D assets.

Frequently Asked Questions

A. No, it cannot completely eliminate human intervention. However, it can significantly improve the development workflow by drastically reducing the time required to generate 3D models, providing nearly complete outputs. Users may still need to make final refinements or adjustments to ensure the models meet specific requirements, but the process is much faster and more efficient than traditional methods.

A. No, Hunyuan3D-1.0 simplifies the 3D modeling process, making it accessible even to those without specialized skills in 3D design. The system automates the creation of 3D models with minimal input, allowing anyone to generate high-quality assets quickly.

A. The lite model generates a 3D mesh from a single image in about 10 seconds on an NVIDIA A100 GPU, while the standard model takes about 25 seconds. These times exclude the UV map unwrapping and texture baking processes, which add 15 seconds.
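The timings in the last answer add up as shown in the short sketch below. The figures are the approximate A100 numbers quoted above, and the function name `total_seconds` is purely illustrative.

```python
# Approximate per-stage timings on an NVIDIA A100, as quoted in the answer above.
GENERATION_SECONDS = {"lite": 10, "std": 25}
TEXTURE_SECONDS = 15  # UV map unwrapping and texture baking


def total_seconds(model, with_texture=True):
    """Rough end-to-end time for one asset, in seconds."""
    base = GENERATION_SECONDS[model]
    return base + TEXTURE_SECONDS if with_texture else base


lite_total = total_seconds("lite")   # mesh plus texture with the lite model
std_total = total_seconds("std")     # mesh plus texture with the standard model
```

A textured asset therefore takes roughly 25 seconds with the lite model and roughly 40 seconds with the standard model.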

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.