Enhancing Japan’s AI sovereignty and strengthening its research and development capabilities, Japan’s National Institute of Advanced Industrial Science and Technology (AIST) will integrate thousands of NVIDIA H200 Tensor Core GPUs into its AI Bridging Cloud Infrastructure 3.0 supercomputer (ABCI 3.0). The Hewlett Packard Enterprise Cray XD system will feature NVIDIA Quantum-2 InfiniBand networking for superior performance and scalability.

ABCI 3.0 is the newest iteration of Japan’s large-scale Open AI Computing Infrastructure designed to advance AI R&D. This collaboration underlines Japan’s dedication to advancing its AI capabilities and fortifying its technological independence.

“In August 2018, we launched ABCI, the world’s first large-scale open AI computing infrastructure,” said AIST Executive Officer Yoshio Tanaka. “Building on our experience over the past several years managing ABCI, we’re now upgrading to ABCI 3.0. In collaboration with NVIDIA and HPE, we aim to develop ABCI 3.0 into a computing infrastructure that will further advance research and development capabilities for generative AI in Japan.”

“As generative AI prepares to catalyze global change, it’s crucial to rapidly cultivate research and development capabilities within Japan,” said AIST Solutions Co. Producer and Head of ABCI Operations Hirotaka Ogawa. “I’m confident that this major upgrade of ABCI in our collaboration with NVIDIA and HPE will enhance ABCI’s leadership in domestic industry and academia, propelling Japan toward global competitiveness in AI development and serving as the bedrock for future innovation.”

ABCI 3.0: A New Era for Japanese AI Research and Development

ABCI 3.0 is built and operated by AIST, its business subsidiary AIST Solutions, and its system integrator, Hewlett Packard Enterprise (HPE).

The ABCI 3.0 project follows support from Japan’s Ministry of Economy, Trade and Industry (METI) for strengthening the country’s computing resources through the Economic Security Fund, and it is part of a broader $1 billion METI initiative that includes both ABCI efforts and investments in cloud AI computing.

NVIDIA is closely collaborating with METI on research and education following a visit last year by company founder and CEO Jensen Huang, who met with political and business leaders, including Japanese Prime Minister Fumio Kishida, to discuss the future of AI.

NVIDIA’s Commitment to Japan’s Future

Huang pledged to collaborate on research, particularly in generative AI, robotics and quantum computing, to invest in AI startups, and to provide product support, training and education on AI.

During his visit, Huang emphasized that “AI factories” (next-generation data centers designed to handle the most computationally intensive AI tasks) are crucial for turning vast amounts of data into intelligence.

“The AI factory will become the bedrock of modern economies across the world,” Huang said during a meeting with the Japanese press in December.

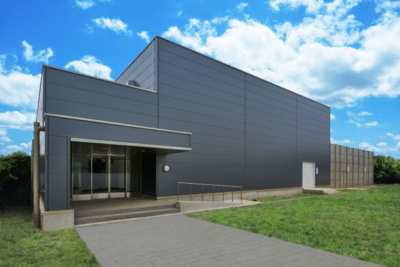

With its ultra-high-density data center and energy-efficient design, ABCI provides a robust infrastructure for developing AI and big data applications.

The system is expected to come online by the end of this year and offer state-of-the-art AI research and development resources. It will be housed in Kashiwa, near Tokyo.

Unmatched Computing Performance and Efficiency

The facility will offer:

- 6 AI exaflops of computing capacity, a measure of AI-specific performance without sparsity

- 410 double-precision petaflops, a measure of general computing capacity

- 200GB/s of bisectional bandwidth per node, with every node connected via the Quantum-2 InfiniBand platform

NVIDIA technology forms the backbone of this initiative, with hundreds of nodes, each equipped with 8 NVLink-connected H200 GPUs, providing unprecedented computational performance and efficiency.
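As a rough consistency check on these figures, the system peaks can be divided by a per-GPU peak to estimate how many GPUs they imply. The sketch below is back-of-the-envelope arithmetic, not an official configuration: the per-GPU values (about 989 dense BF16/FP16 teraflops and 67 FP64 Tensor Core teraflops) are assumptions supplied for illustration rather than numbers from this article, and `implied_gpus` is a hypothetical helper.

```python
# System-level peaks quoted in the article for ABCI 3.0.
SYSTEM_AI_FLOPS = 6e18        # 6 AI exaflops, without sparsity
SYSTEM_FP64_FLOPS = 410e15    # 410 double-precision petaflops
GPUS_PER_NODE = 8             # 8 NVLink-connected H200 GPUs per node

# Assumed per-GPU peaks (illustrative inputs, not taken from the article).
ASSUMED_GPU_DENSE_AI_FLOPS = 989e12   # dense BF16/FP16 Tensor Core peak
ASSUMED_GPU_FP64_FLOPS = 67e12        # FP64 Tensor Core peak


def implied_gpus(system_flops: float, per_gpu_flops: float) -> float:
    """How many GPUs at per_gpu_flops add up to system_flops."""
    return system_flops / per_gpu_flops


gpus_from_ai = implied_gpus(SYSTEM_AI_FLOPS, ASSUMED_GPU_DENSE_AI_FLOPS)
gpus_from_fp64 = implied_gpus(SYSTEM_FP64_FLOPS, ASSUMED_GPU_FP64_FLOPS)

for label, gpus in (("AI exaflops", gpus_from_ai), ("FP64 petaflops", gpus_from_fp64)):
    print(f"From {label}: ~{gpus:,.0f} GPUs, ~{gpus / GPUS_PER_NODE:,.0f} nodes")
```

Under these assumptions both figures point to a little over 6,000 GPUs, or roughly 760 eight-GPU nodes, which fits the description of hundreds of nodes with 8 GPUs each.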

NVIDIA H200 is the first GPU to offer over 140 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s). The H200’s larger and faster memory accelerates generative AI and LLMs, while advancing scientific computing for HPC workloads with better energy efficiency and lower total cost of ownership.
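To put the memory figures in perspective, the sketch below does two pieces of simplified arithmetic with the numbers quoted above: how long one full pass over 140 GB takes at 4.8 TB/s, and a crude upper bound on batch-1 LLM token generation, assuming each new token requires streaming every model weight from memory once. The 13-billion-parameter BF16 model is hypothetical, and the bound ignores KV-cache traffic, compute limits and software overheads, so real throughput would be lower.

```python
# Per-GPU figures quoted in the article for NVIDIA H200.
HBM_CAPACITY_BYTES = 140e9          # "over 140 GB" of HBM3e (lower bound used here)
HBM_BANDWIDTH_BYTES_PER_S = 4.8e12  # 4.8 TB/s


def full_memory_sweep_seconds(capacity_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to read every byte of GPU memory once at peak bandwidth."""
    return capacity_bytes / bandwidth_bytes_per_s


def decode_tokens_per_s_upper_bound(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Simplified bound for batch-1 decoding: if every generated token must stream
    all model weights from memory once, tokens/s cannot exceed bandwidth / weight size.
    Ignores KV-cache reads, compute limits and kernel overheads."""
    return bandwidth_bytes_per_s / weight_bytes


# Hypothetical model: 13 billion parameters stored in BF16 (2 bytes each) = 26 GB.
HYPOTHETICAL_WEIGHT_BYTES = 13e9 * 2
assert HYPOTHETICAL_WEIGHT_BYTES <= HBM_CAPACITY_BYTES  # the weights fit on one GPU

sweep_s = full_memory_sweep_seconds(HBM_CAPACITY_BYTES, HBM_BANDWIDTH_BYTES_PER_S)
bound = decode_tokens_per_s_upper_bound(HYPOTHETICAL_WEIGHT_BYTES, HBM_BANDWIDTH_BYTES_PER_S)
print(f"Full memory sweep: {sweep_s * 1e3:.1f} ms")
print(f"Batch-1 decode upper bound: {bound:.0f} tokens/s")
```

With these inputs the sweep takes roughly 29 ms and the bound is about 185 tokens per second. In this simplified model the bound scales linearly with memory bandwidth, which is one way to see why faster memory helps token generation.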

NVIDIA H200 GPUs are 15X more energy-efficient than ABCI’s previous-generation architecture for AI workloads such as LLM token generation.

The integration of advanced NVIDIA Quantum-2 InfiniBand with In-Network computing, in which networking devices perform computations on data and offload that work from the CPU, ensures efficient, high-speed, low-latency communication, which is crucial for handling intensive AI workloads and vast datasets.
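The idea behind In-Network computing can be illustrated with a toy model of an allreduce, the collective operation that sums data such as gradients across nodes. In the sketch below, a simulated switch performs the summation, so each node sends its buffer once and receives the result once; for comparison, a classic host-driven ring allreduce takes 2(N - 1) communication steps per node. This is a conceptual Python model only: the function names are hypothetical, and it does not use or describe any NVIDIA networking API.

```python
from typing import List

Vector = List[float]


def in_network_allreduce(node_buffers: List[Vector]) -> List[Vector]:
    """Toy model of switch-side reduction: each node sends its buffer up to
    the switch once, the switch sums the buffers element-wise, and the sum
    is sent back down to every node. No host handles partial sums."""
    if not node_buffers:
        raise ValueError("need at least one node")
    length = len(node_buffers[0])
    if any(len(buf) != length for buf in node_buffers):
        raise ValueError("all buffers must have the same length")
    reduced = [sum(column) for column in zip(*node_buffers)]  # done "in the switch"
    return [list(reduced) for _ in node_buffers]  # broadcast a copy to each node


def ring_allreduce_steps(num_nodes: int) -> int:
    """Communication steps per node for a host-driven ring allreduce:
    (N - 1) reduce-scatter steps followed by (N - 1) allgather steps."""
    return 2 * (num_nodes - 1)


def in_network_allreduce_steps(num_nodes: int) -> int:
    """In this toy model: one send to the switch and one receive from it,
    independent of num_nodes."""
    return 2


results = in_network_allreduce([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
print(results[0])  # [9.0, 12.0]
print(ring_allreduce_steps(8), in_network_allreduce_steps(8))  # 14 2
```

The step counts are the point of the comparison: in this model the number of hops each node makes stays constant as the cluster grows, because the aggregation work moves into the network.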

ABCI boasts world-class computing and data processing power, serving as a platform to accelerate joint AI R&D with industries, academia and governments.

METI’s substantial investment is a testament to Japan’s strategic vision to enhance AI development capabilities and accelerate the use of generative AI.

By subsidizing AI supercomputer development, Japan aims to reduce the time and costs of developing next-generation AI technologies, positioning itself as a leader in the global AI landscape.