Large Language Models (LLMs) are a fairly common term when discussing Artificial Intelligence (AI). With the advent of platforms like ChatGPT, the term has become a household word. Today, LLMs are built into search engines and social media apps such as WhatsApp and Instagram. LLMs have changed how we interact with the internet: finding relevant information or performing specific tasks has never been this easy.

What are Large Language Models (LLMs)?

In generative AI, human language is regarded as a difficult data type. A computer program trained on enough data that it can analyze, understand, and generate responses in natural language and other forms of content is called a Large Language Model (LLM). LLMs are trained on vast, curated training data with sizes ranging from thousands to millions of gigabytes.

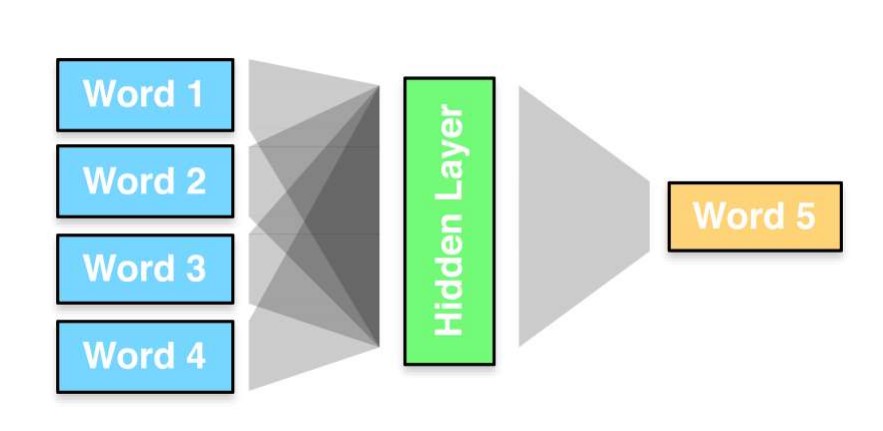

A simple way to describe an LLM is as an AI algorithm capable of understanding and generating human language. Machine learning, specifically deep learning, is the backbone of every LLM. It lets an LLM interpret language input based on the patterns and complexity of characters and words in natural language.

LLMs are pre-trained on extensive data from the web and produce results after learning the complexity, patterns, and relationships in the language.

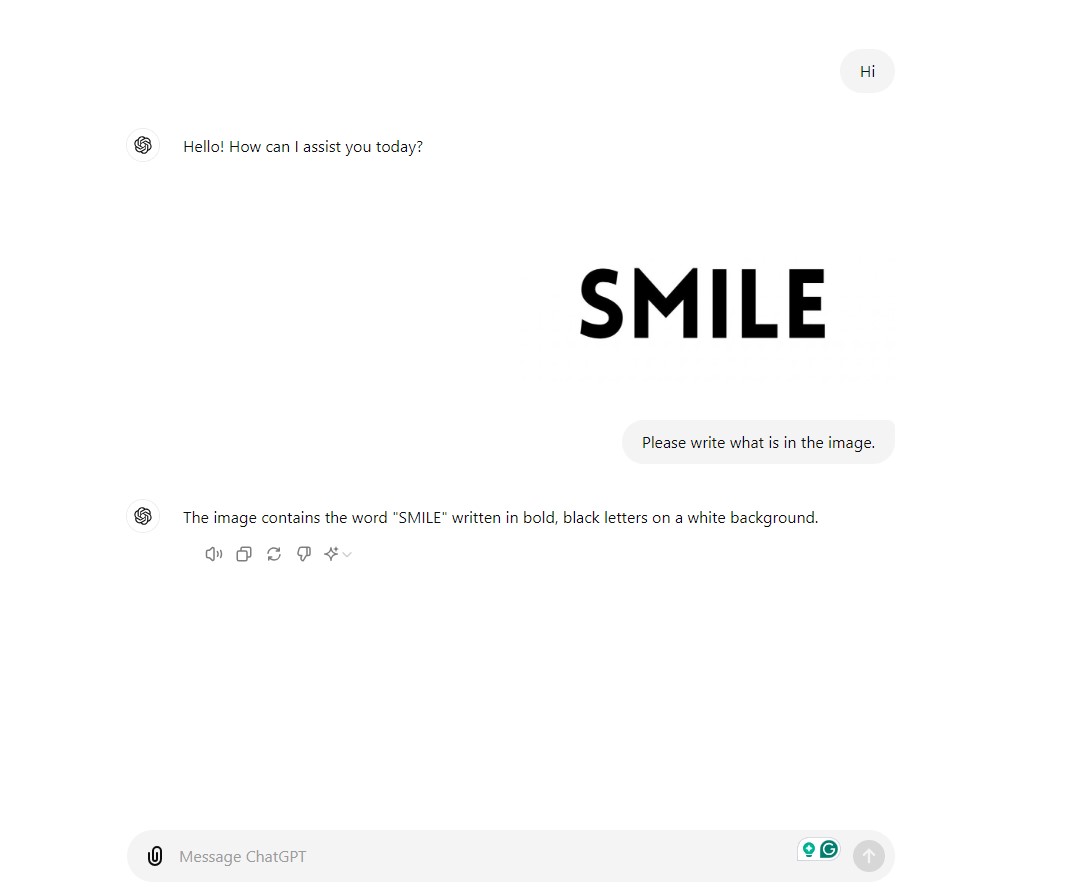

Currently, LLMs can comprehend and generate a range of content forms such as text, speech, pictures, and videos, to name a few. LLMs power applications in Natural Language Processing (NLP), machine translation, and Visual Question Answering (VQA).

One of the most common examples of an LLM is a virtual voice assistant such as Siri or Alexa. When you ask, “What’s the weather today?”, the assistant understands your question, finds out what the weather is like, and gives a logical answer. This smooth interaction between machine and human happens because of Large Language Models. Thanks to these models, the assistant can read user input in natural language and respond accordingly.

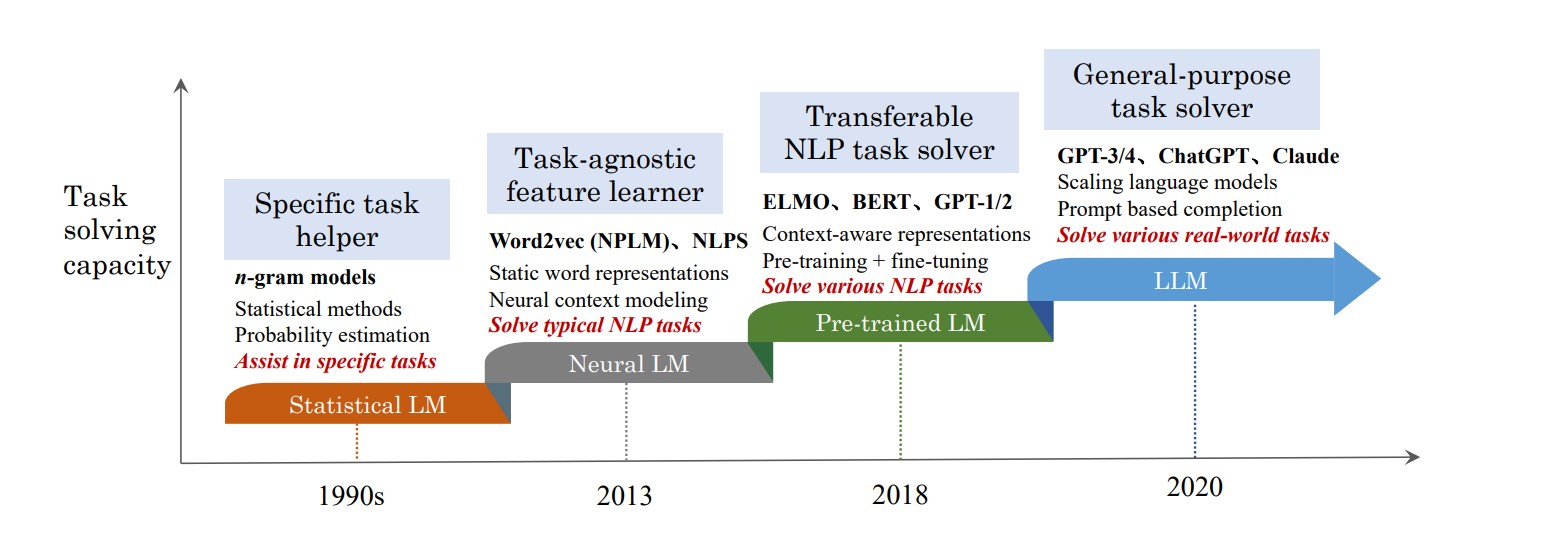

Emergence and History of LLMs

Artificial Neural Networks (ANNs) and Rule-based Models

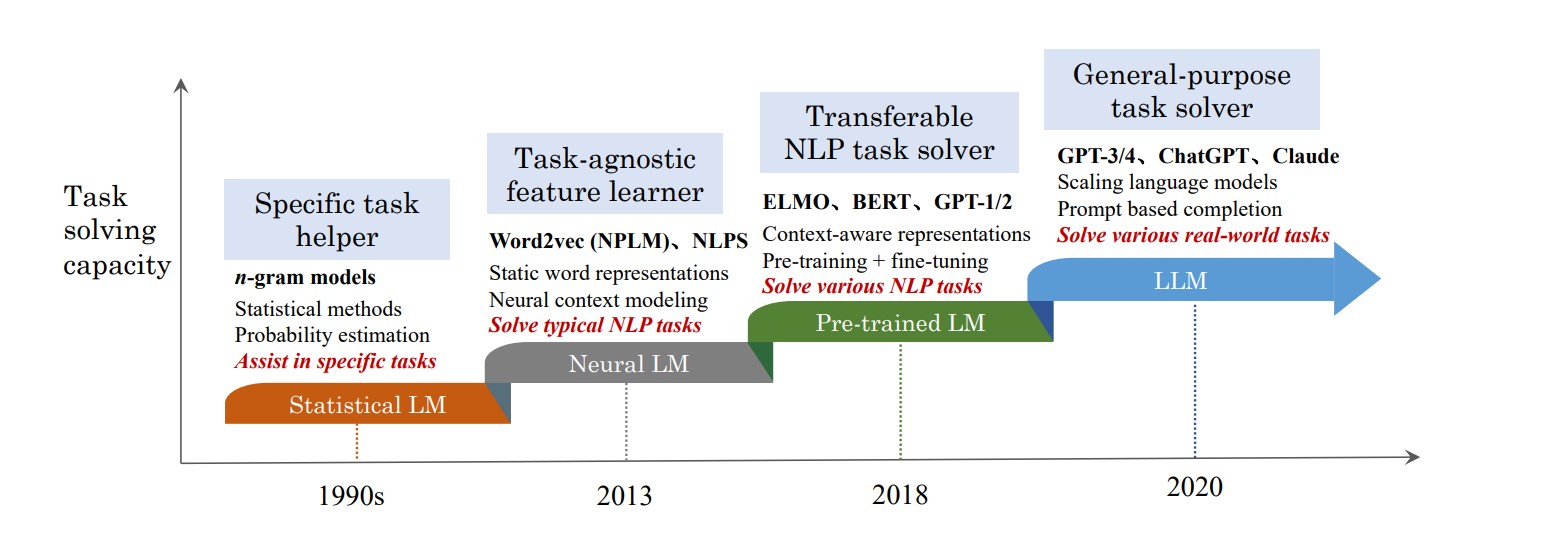

The foundation of these Computational Linguistics (CL) models dates back to the 1940s, when Warren McCulloch and Walter Pitts laid the groundwork for AI. This early research was not about designing a system but about exploring the fundamentals of Artificial Neural Networks. However, the very first language model was a rule-based model developed in the 1950s. These models could understand and produce natural language using predefined rules but could not comprehend complex language or maintain context.

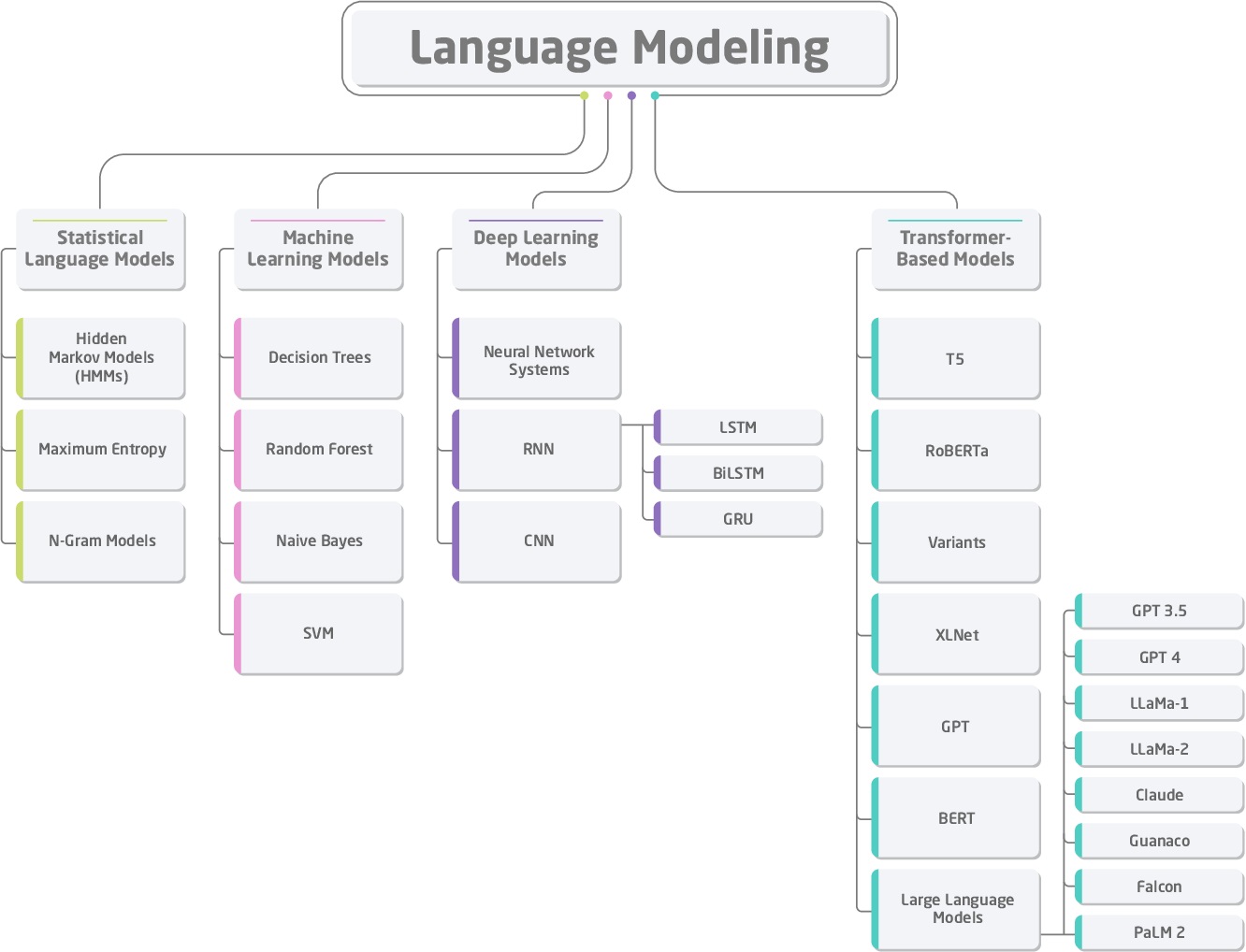

Statistics-based Models

With the rise of statistical models, the language models developed in the 1990s could predict and analyze language patterns. Because they work with probabilities, they are applicable to speech recognition and machine translation.

Introduction of Word Embeddings

The introduction of word embeddings initiated great progress in LLMs and NLP. These models, created in the mid-2000s, could capture semantic relationships accurately by representing words in a continuous vector space.

Recurrent Neural Network Language Models (RNNLM)

A decade later, Recurrent Neural Network Language Models (RNNLMs) were introduced to handle sequential data. These RNN language models were the first to keep context across different parts of the text, which improved both language understanding and output generation.

Google Neural Machine Translation (GNMT)

In 2016, Google introduced the revolutionary Google Neural Machine Translation (GNMT) system for machine translation. GNMT featured a deep neural network dedicated to sentence-level translation rather than translating individual words or phrases, and it was trained end to end.

It works on a shared encoder-decoder architecture with long short-term memory (LSTM) networks to capture context and generate the actual translations. Huge datasets were used to train these models. Before this model, covering some complex patterns in the language and adapting to possible language structures was not possible.

Recent Development

Recently, transformer-based deep learning language models like BERT (Bidirectional Encoder Representations from Transformers) and GPT-1 (Generative Pre-trained Transformer) were introduced by Google and OpenAI, respectively. BERT uses a bidirectional approach to understand context from both directions in a sentence, while GPT generates coherent text by predicting the next word in a sequence. Both improve tasks like question answering and sentiment analysis.

With the recent release of GPT-4 and GPT-4o, these models are getting more sophisticated by adding billions of parameters and setting new standards in NLP tasks.

Role of Large Language Models in Modern NLP

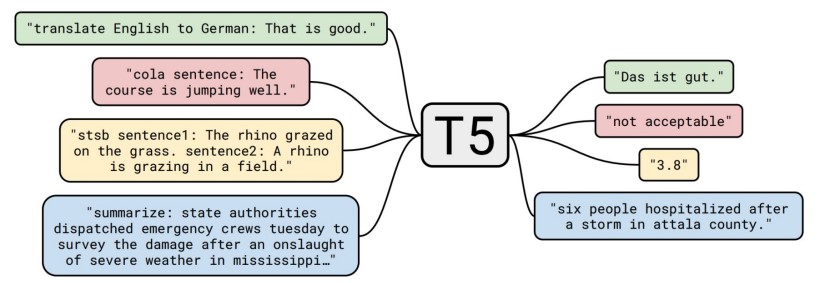

Large Language Models are considered a subset of Natural Language Processing (NLP), and their progress matters to NLP as a whole. Models such as BERT and GPT-3 (an improved version of GPT-1 and GPT-2) have made NLP tasks better and more polished.

These language generation models require large datasets to train, and they use architectures like transformers to maintain long-range dependencies in text. For example, BERT can use the context around a word like “bank” to determine whether it refers to a financial institution or the side of a river.

OpenAI’s GPT-3, with its 175 billion parameters, is another prominent example. It can generate coherent and contextually relevant text. One example of GPT-3’s capability is its ability to complete sentences and paragraphs fluently, given a prompt.

LLMs show excellent performance in data-to-text tasks such as making suggestions based on your preferences, translating between languages, and even creative writing. These models are trained on large datasets and then fine-tuned for the specific application.

While making great progress, LLMs give rise to challenges as well. These include biases in the training set and rising computation costs, since intensive training and deployment require a great many resources.

Understanding The Working of LLMs – Transformer Architecture

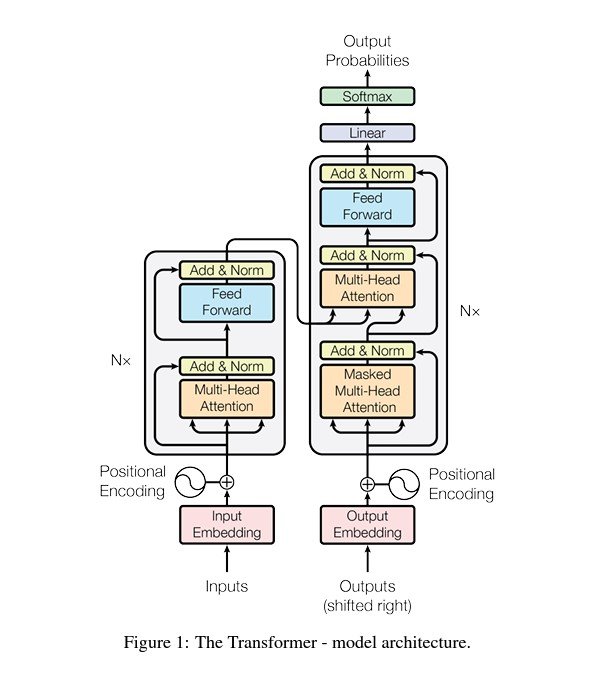

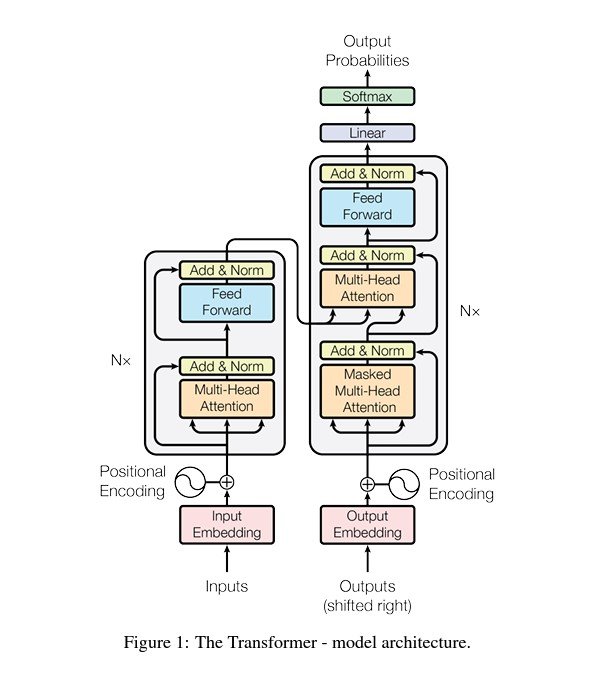

The Transformer, a deep learning architecture, serves as the cornerstone of modern LLMs and NLP. This is not merely because it is relatively efficient but because of its ability to handle sequential data and capture the long-range dependencies that Large Language Models have long needed. Introduced by Vaswani et al. in the seminal paper “Attention Is All You Need”, the Transformer model revolutionized how language models process and generate text.

Transformer Architecture

A transformer architecture primarily consists of an encoder and a decoder. Both contain self-attention mechanisms and feed-forward neural networks. Rather than processing the data one element at a time, transformers can process input data in parallel and maintain long-range dependencies.

1. Tokenization

Every text-based input is first split into smaller units called tokens. Tokenization converts each token into a number representing its position in a predefined vocabulary.
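To make this concrete, here is a minimal sketch of word-level tokenization in Python. It is an illustration only: production LLMs typically use subword tokenizers, and the names `build_vocab`, `tokenize`, and the `<unk>` placeholder are assumptions made for this example.

```python
def build_vocab(corpus):
    """Give every distinct lowercase word an integer ID; ID 0 is kept for unknown words."""
    vocab = {"<unk>": 0}
    for sentence in corpus:
        for word in sentence.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab


def tokenize(text, vocab):
    """Map each word to its ID, falling back to the unknown-word ID."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]


vocab = build_vocab(["the cat sat", "the dog ran"])
token_ids = tokenize("the cat ran fast", vocab)
print(token_ids)  # [1, 2, 5, 0]
```

The word "fast" never appeared in the toy corpus, so it maps to the unknown-word ID 0.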

2. Embedding Layer

Tokens are passed through an embedding layer, which maps them to high-dimensional vectors that capture their semantic meaning.
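An embedding layer is, at its core, a lookup table with one row per vocabulary entry. The sketch below assumes a tiny vocabulary of 6 tokens and 4-dimensional vectors filled with random values; in a real model these values are learned during training, and `make_embedding_table` and `embed` are hypothetical helper names.

```python
import random


def make_embedding_table(vocab_size, dim, seed=0):
    """Create one small random vector per token ID (a stand-in for learned weights)."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(vocab_size)]


def embed(token_ids, table):
    """Replace every token ID with its row in the embedding table."""
    return [table[token_id] for token_id in token_ids]


table = make_embedding_table(vocab_size=6, dim=4)
vectors = embed([1, 2, 5, 0], table)
print(len(vectors), len(vectors[0]))  # 4 4
```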

3. Positional Encoding

This step adds positional encodings to the token embeddings to help the model retain the order of tokens, since transformers process sequences in parallel.
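One common choice, and the one assumed in this sketch, is the sinusoidal encoding from “Attention Is All You Need”: even dimensions use a sine and odd dimensions a cosine of the position, each at a different frequency. Other models learn their positional encodings instead, so treat this as one possible scheme. The function names are illustrative.

```python
import math


def positional_encoding(seq_len, dim):
    """Build the sinusoidal position table: sin on even indices, cos on odd ones."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(dim):
            # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / dim).
            angle = pos / (10000 ** ((2 * (i // 2)) / dim))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(embeddings):
    """Add the position vector to each token embedding, element by element."""
    table = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, table)]


# Three all-zero "embeddings" make the added position signal easy to see.
encoded = add_positions([[0.0] * 4 for _ in range(3)])
print(encoded[0])  # [0.0, 1.0, 0.0, 1.0]
```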

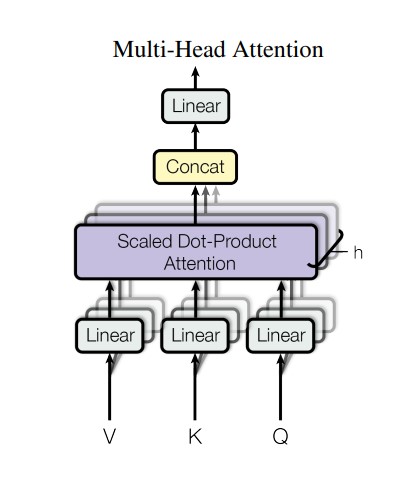

4. Self-Attention Mechanism

For each token, the self-attention mechanism computes three vectors:

- Query (Q): what the token is looking for in other tokens.

- Key (K): what the token offers for other tokens to match against.

- Value (V): the information the token passes on once it is matched.

The dot product of queries with keys determines token relevance. The results are normalized using softmax and then applied to the value vectors to get a context-aware word representation.
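The computation described here can be sketched in plain Python. This version follows the scaled dot-product form from the Vaswani et al. paper, where the scores are divided by the square root of the key dimension before the softmax. The identity weight matrices and the three toy token vectors are assumptions chosen to keep the numbers readable; a trained model learns its own weights.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(xs):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Return the context-aware vectors and the attention weights for input x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    scale = math.sqrt(len(k[0]))
    k_t = [list(col) for col in zip(*k)]
    scores = matmul(q, k_t)  # relevance of every token to every other token
    weights = [softmax([s / scale for s in row]) for row in scores]
    return matmul(weights, v), weights


identity = [[1.0, 0.0], [0.0, 1.0]]
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 tokens, 2 features each
context, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to all three tokens, and each row sums to 1.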

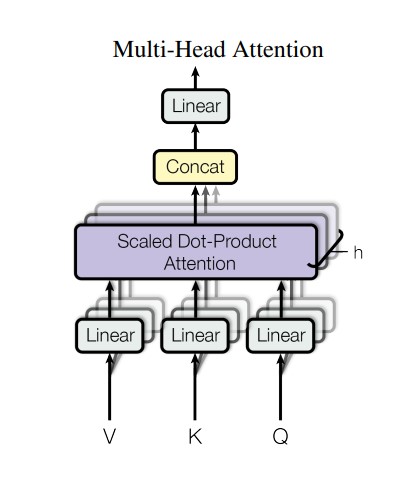

5. Multi-Head Attention

Each head focuses on different aspects of the input sequence. The outputs of all heads are concatenated and linearly transformed, resulting in a better understanding of complex language structures.
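As a rough sketch of the concatenate-then-transform step, the code below runs two attention heads and joins their outputs. The weight matrices are hypothetical and hand-picked so that head 1 reads the first two features and head 2 the last two; real models learn these projections.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(xs):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention for a single head."""
    scale = math.sqrt(len(k[0]))
    k_t = [list(col) for col in zip(*k)]
    weights = [softmax([s / scale for s in row]) for row in matmul(q, k_t)]
    return matmul(weights, v)


def multi_head_attention(x, heads, w_o):
    """heads holds one (w_q, w_k, w_v) triple per head; w_o mixes the concatenation."""
    per_head = [attention(matmul(x, w_q), matmul(x, w_k), matmul(x, w_v))
                for w_q, w_k, w_v in heads]
    # Concatenate the head outputs token by token, then apply the output projection.
    concatenated = [[value for head in per_head for value in head[i]]
                    for i in range(len(x))]
    return matmul(concatenated, w_o)


first_half = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0], [0.0, 0.0]]
second_half = [[0.0, 0.0], [0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
heads = [(first_half, first_half, first_half), (second_half, second_half, second_half)]
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

x = [[1.0, 0.0, 0.5, 0.5], [0.0, 1.0, 0.5, -0.5]]  # 2 tokens, 4 features each
output = multi_head_attention(x, heads, identity)
print(len(output), len(output[0]))  # 2 4
```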

6. Feed-Forward Neural Networks (FFNNs)

FFNNs process each token independently. Each one consists of two linear transformations with a ReLU activation between them that adds non-linearity.
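In code, that is two matrix multiplications with a ReLU in between, applied to one token vector at a time. The weights and biases below are made-up values for illustration, not parameters from any real model.

```python
def linear(vector, weights, bias):
    """Compute vector @ weights + bias, with weights stored as a list of rows."""
    return [sum(x * w for x, w in zip(vector, column)) + b
            for column, b in zip(zip(*weights), bias)]


def relu(vector):
    """Zero out negative values; this is the non-linearity."""
    return [max(0.0, value) for value in vector]


def feed_forward(token, w1, b1, w2, b2):
    """Expand to a hidden size, apply ReLU, then project back down."""
    return linear(relu(linear(token, w1, b1)), w2, b2)


w1 = [[1.0, 0.0, -1.0], [0.0, 1.0, 1.0]]    # 2 inputs -> 3 hidden units
b1 = [0.0, 0.0, 0.0]
w2 = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]   # 3 hidden units -> 2 outputs
b2 = [0.5, -0.5]

output = feed_forward([1.0, -2.0], w1, b1, w2, b2)
print(output)  # [1.5, 0.5]
```

The hidden layer produces `[1.0, -2.0, -3.0]`, ReLU keeps only the positive entry, and the second layer maps the result back to two dimensions.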

7. Encoder

The encoder processes the input sequence and produces a context-rich representation. It comprises multiple layers of multi-head attention and FFNNs.

8. Decoder

A decoder generates the output sequence. It processes the encoder’s output using an additional cross-attention mechanism that connects the input and output sequences.

9. Output Generation

The output is generated as a vector of logits for each token. A softmax layer is applied to the logits to convert them into probability scores. The token with the highest score is the next word in the sequence.
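Here is a small sketch of that last step. It assumes a four-word vocabulary and made-up logits, and it always picks the highest-probability token (often called greedy decoding); deployed systems frequently sample from the distribution instead.

```python
import math


def softmax(logits):
    """Convert raw logits into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


vocab = ["cat", "dog", "sat", "ran"]   # hypothetical vocabulary
logits = [1.0, 0.5, 3.0, -1.0]         # hypothetical model output for one position

probs = softmax(logits)
best_index = max(range(len(probs)), key=probs.__getitem__)
next_token = vocab[best_index]
print(next_token)  # sat
```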

Example

For a simple translation task, the encoder processes the input sentence in the source language to construct a context-rich representation. The decoder then generates the translated sentence in the target language, based on the encoder’s output and the tokens it has already generated.

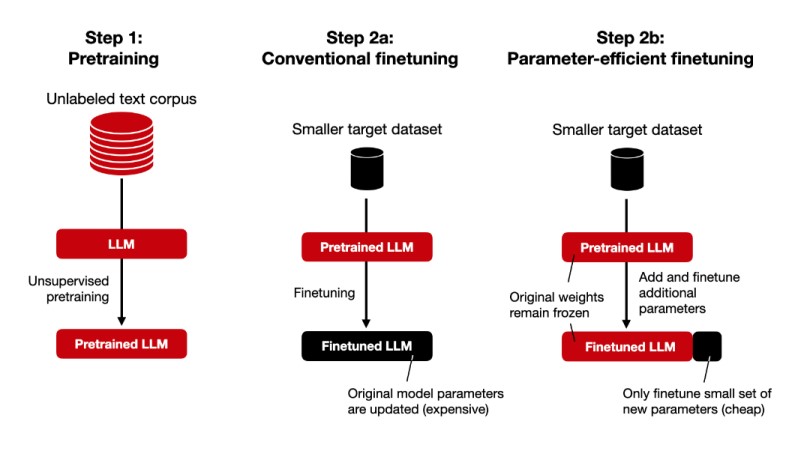

Customization and Fine-Tuning of LLMs For A Specific Task

The transformer’s self-attention mechanism makes it possible to process entire sentences simultaneously. This is the foundation of the transformer architecture. However, to further improve its efficiency and make it suitable for a particular application, a standard transformer model needs fine-tuning.

Steps For Fine-Tuning

- Data Collection: Collect only data relevant to your specific task to ensure the model achieves high accuracy.

- Data Preprocessing: Based on your dataset and its nature, normalize and tokenize text, remove stop words, and perform morphological analysis to prepare data for training.

- Selecting a Model: Choose an appropriate pre-trained model (e.g., GPT-4, BERT) based on your specific task requirements.

- Hyperparameter Tuning: To improve model performance, adjust the learning rate, batch size, number of epochs, and dropout rate.

- Fine-Tuning: Apply parameter-efficient fine-tuning (PEFT) techniques such as LoRA to fine-tune the model on domain-specific data.

- Evaluation and Deployment: Use metrics such as accuracy, precision, recall, and F1 score to evaluate the model, then deploy the fine-tuned model for your task.
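For the evaluation step, the four metrics can be computed by hand for a binary classification task. The labels and predictions below are invented for illustration, and `classification_metrics` is a hypothetical helper; in practice a library such as scikit-learn offers equivalent functions.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


y_true = [1, 0, 1, 1, 0, 1]   # hypothetical gold labels
y_pred = [1, 0, 0, 1, 1, 1]   # hypothetical model predictions
metrics = classification_metrics(y_true, y_pred)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

In this toy run there are 3 true positives, 1 false positive, and 1 false negative, so precision and recall are both 3/4.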

Large Language Models’ Use-Cases and Applications

Medicine

Large Language Models combined with Computer Vision have become a great tool for radiologists. They use LLMs to support radiologic decisions, analyzing images to obtain second opinions. General physicians and consultants also use LLMs like ChatGPT to get answers to genetics-related questions from verified sources.

LLMs also automate doctor-patient interaction, reducing the risk of infection and offering relief to those unable to move. This was a major breakthrough in the medical sector, especially during pandemics like COVID-19. Tools like XrayGPT automate the analysis of X-ray images.

Education

Large Language Models have made learning material more interactive and easily accessible. With search engines based on AI models, teachers can provide students with more personalized courses and learning resources. Moreover, AI tools can offer one-on-one engagement and customized learning plans. One example is Khanmigo, a virtual tutor by Khan Academy, which uses student performance data to make targeted recommendations.

Several studies show that ChatGPT’s performance on the United States Medical Licensing Examination (USMLE) met or exceeded the passing score.

Finance

Risk assessment, automated trading, business report analysis, and support reporting can be done using LLMs. Models like BloombergGPT achieve strong results on news classification, entity recognition, and question-answering tasks.

LLMs integrated with Customer Relationship Management (CRM) systems have become a crucial tool for many businesses, as they automate much of their business operations.

Other Applications

- Developers are using LLMs to write and debug their code.

- Content creation becomes much easier with LLMs. They can generate blogs or YouTube scripts in no time.

- LLMs can take details about agricultural land and its location as input and indicate whether the land is suitable for agriculture.

- Tools like PDFGPT help automate literature reviews and extract relevant data or summarize text from selected research papers.

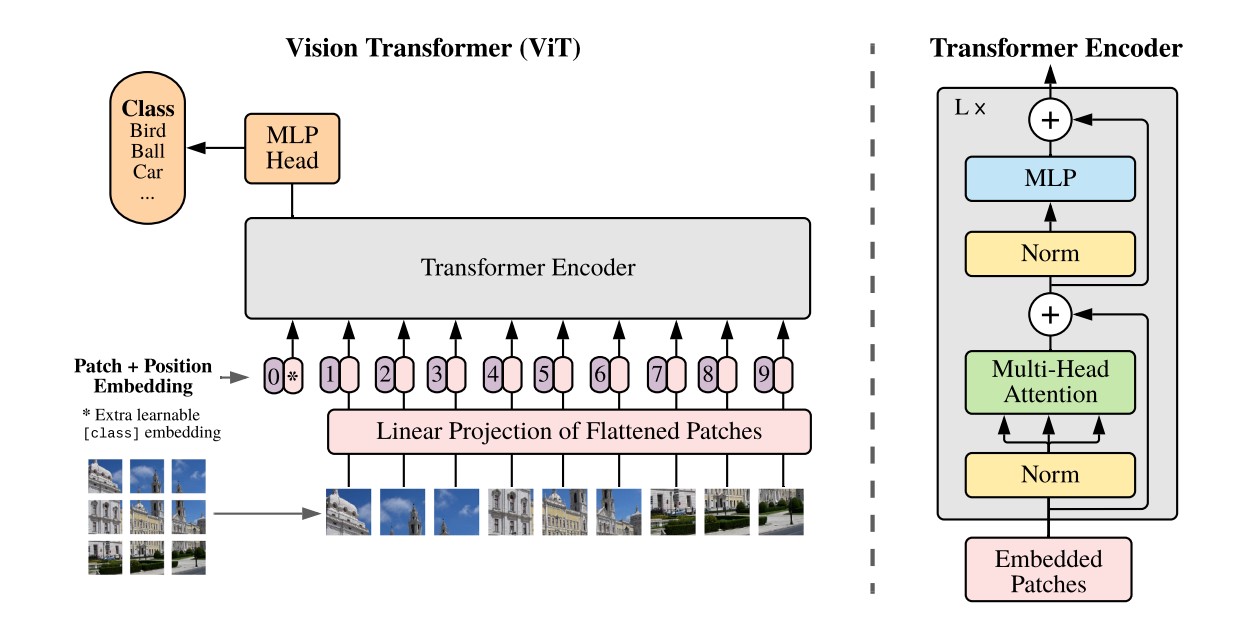

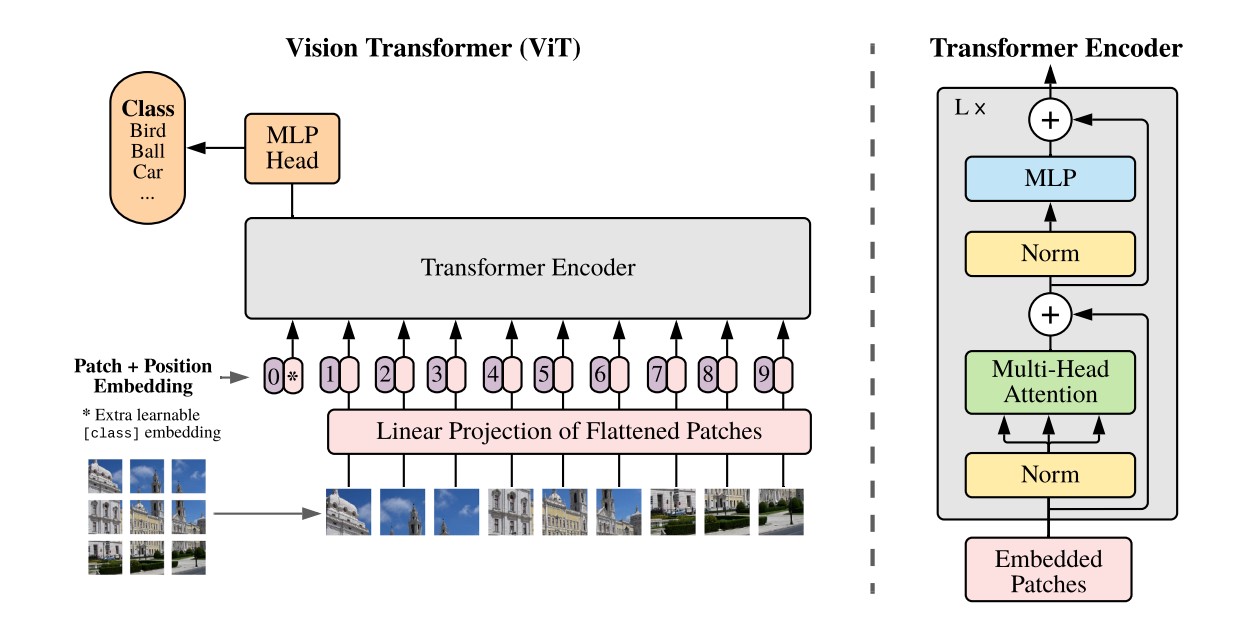

- Models like Vision Transformers (ViT) apply the transformer concepts behind LLMs to image recognition, which helps in medical imaging.

What’s Next?

Before LLMs, it wasn’t easy to understand and communicate in machine language. Now, Large Language Models are a part of our everyday life, and being able to talk to computers can feel too good to be true. We can get more personalized responses and understand them thanks to their text-generation ability.

LLMs fill the long-standing gap between machine and human communication. In the future, these models will need more task-specific modeling and more accurate results. As they grow more accurate and sophisticated with time, imagine what we can achieve with the convergence of LLMs, Computer Vision, and Robotics.
