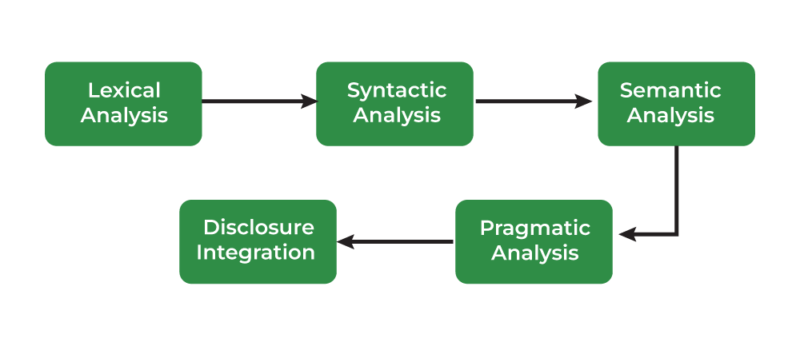

Pure Language Processing (NLP) is the sector of synthetic intelligence that focuses on the interplay between computer systems and human language. It entails a collection of levels or phases to course of and analyze language knowledge. The principle phases of NLP may be damaged down as follows.

We work together with language daily, effortlessly changing ideas into phrases. However for machines, understanding and manipulating human language is a posh problem. That is the place Pure Language Processing (NLP) is available in, a discipline of synthetic intelligence that empowers computer systems to know, interpret, and generate human language. However how precisely do machines obtain this feat? The reply lies in a collection of distinct phases that kind the spine of any NLP system.

Pure Language Processing (NLP) is a department of synthetic intelligence that focuses on enabling computer systems to know, interpret, and generate human language. NLP entails a number of levels or phases, every of which performs an important position in remodeling uncooked textual content knowledge into significant insights. Under are the standard phases of an NLP pipeline:

From Understanding to Motion

These phases aren’t all the time utterly separate, and so they usually overlap. Moreover, the precise methods used inside every part can fluctuate significantly relying on the duty and the chosen method. Nevertheless, understanding these core processes offers an important window into how machines are starting to “perceive” our language.

The ability of NLP lies not simply in understanding, but additionally in appearing upon what it understands. From voice assistants and chatbots to sentiment evaluation and machine translation, the purposes of NLP are huge and quickly increasing. As NLP know-how matures, it would proceed to revolutionize how we work together with machines and unlock new potentialities in almost each facet of our lives.

Phases of Pure Language Processing

1. Textual content Assortment

Instance: Gathering buyer evaluations, tweets, or information articles.

Description: This is step one, the place knowledge is collected for processing. It might embrace scraping textual content from web sites, utilizing obtainable datasets, or extracting textual content from paperwork (PDFs, Phrase recordsdata, and so forth.).

Lexical Evaluation, The Basis of Understanding: This primary part is all about breaking down the uncooked textual content into its primary constructing blocks, like phrases and punctuation marks. Think about it like sorting Lego bricks by shade and measurement.

- Stemming/Lemmatization: These methods scale back phrases to their root kinds, serving to to group comparable phrases collectively. “Operating” and “ran” would each be lowered to “run.” Stemming is a less complicated method that simply chops off endings, whereas lemmatization takes under consideration the context and produces dictionary-valid base kinds.The uncooked textual content knowledge is commonly noisy and unstructured, so preprocessing is step one to wash and format it for additional evaluation. Lemmatization: Much like stemming however extra subtle, it entails lowering phrases to their lemma (e.g., “higher” to “good”).

- Tokenization: This step entails splitting a textual content into particular person items referred to as “tokens.” These tokens could possibly be phrases, punctuation, numbers, and even particular person characters relying on the applying. For instance, the sentence “The cat sat on the mat.” can be tokenized into [“The”, “cat”, “sat”, “on”, “the”, “mat”, “.”].

- Cease Phrase Elimination: Frequent phrases (e.g., “the”, “is”, “and”) that don’t contribute a lot which means are sometimes eliminated.Many widespread phrases, like “the,” “a,” “is,” and “of,” don’t contribute a lot to the which means of a sentence. This step removes these “cease phrases” to cut back noise and enhance processing effectivity.

- Lowercasing: Changing all textual content to lowercase to keep away from distinguishing between “Apple” and “apple.”

- Eradicating Punctuation: Eliminating punctuation marks as they don’t sometimes add worth for a lot of NLP duties.

- Stemming: Lowering phrases to their base or root kind (e.g., “working” to “run”).

2. Textual content Illustration

After preprocessing, the subsequent step is to transform textual content right into a format that may be fed into machine studying algorithms. Frequent strategies embrace:

- Bag of Phrases (BoW): A easy mannequin the place every phrase is handled as a characteristic, and the textual content is represented by the frequency of phrases.

- TF-IDF (Time period Frequency-Inverse Doc Frequency): Weighs the significance of phrases by contemplating their frequency in a doc relative to their frequency in your entire corpus.

- Phrase Embeddings: Strategies like Word2Vec, GloVe, and FastText characterize phrases as dense vectors in a high-dimensional area, capturing semantic which means.

- Contextualized Embeddings: Fashions like BERT, GPT, and ELMo present dynamic embeddings primarily based on context, providing extra correct phrase representations.

- Description: Changing textual content right into a numerical format that machine studying fashions can perceive. Fashionable strategies embrace:

- Instance: The sentence “I really like pure language processing” is perhaps transformed right into a vector that represents its semantic which means.

3. Syntactic Evaluation: Understanding Sentence Construction

Phrase Sense Disambiguation: Analyzing the grammatical construction of sentences to know how phrases are associated. The result’s usually represented as a parse tree or a dependency tree.Many phrases have a number of meanings. This step goals to determine the proper which means of a phrase primarily based on its context. For instance, take into account the phrase “financial institution.” Is it a monetary establishment or the sting of a river? For the sentence “The cat sat on the mat,” syntactic evaluation would decide the relationships between “cat,” “sat,” and “mat.

Named Entity Recognition (NER): This entails figuring out and classifying named entities within the textual content, akin to folks, organizations, places, and dates. This permits the system to extract key components from a textual content and arrange info.

Semantic Relationship Extraction: This course of focuses on uncovering the relationships between these entities. For instance, understanding that “Apple” is a “firm” and that “Steve Jobs” was its “founder.” This helps perceive the connections throughout the textual content.

- Half-of-Speech (POS) Tagging: This entails figuring out the grammatical position of every phrase in a sentence, akin to noun, verb, adjective, and so forth. For instance, in “The cat sat”, “The” is a determiner, “cat” is a noun, and “sat” is a verb. Description: Figuring out the grammatical parts of a sentence, akin to nouns, verbs, adjectives, and so forth. This helps in understanding the syntactic construction of the sentence.

- Instance: Within the sentence “The cat runs quick,” “The” is a determiner, “cat” is a noun, and “runs” is a verb.

- Parsing: This deeper evaluation determines how phrases are grouped to kind phrases and sentences. It constructs a parse tree that highlights the relationships between phrases based on grammar guidelines. This helps the system perceive the underlying construction of the sentence.

- Dependency Parsing: This builds on parsing by figuring out how phrases rely upon one another. As an illustration, in “The cat ate the fish,” “ate” is the primary verb and “cat” is its topic, whereas “fish” is its object.

4. Semantic Evaluation

This part focuses on understanding the which means of phrases, phrases, and sentences.

- Named Entity Recognition (NER): Figuring out correct names, akin to folks, organizations, places, dates, and so forth. Figuring out entities within the textual content akin to names of individuals, locations, organizations, dates, and so forth. Within the sentence “Apple introduced a brand new product in New York on January 15,” “Apple” is a corporation, “New York” is a location, and “January 15” is a date.

- Phrase Sense Disambiguation: Figuring out the which means of a phrase primarily based on its context (e.g., distinguishing between “financial institution” as a monetary establishment and “financial institution” because the aspect of a river).

- Coreference Decision: Figuring out which phrases or phrases check with the identical entity in a textual content (e.g., “John” and “he”).

- Semantic Position Labeling: Assigning roles (e.g., agent, affected person, objective) to phrases in a sentence to know their relationships.

5. Pragmatic Evaluation

This part entails understanding the broader context of the textual content, together with implied which means, sentiment, and intent.

- Sentiment Evaluation: Figuring out whether or not the textual content expresses a constructive, unfavourable, or impartial sentiment.

- Intent Recognition: Figuring out the objective or goal behind a textual content, particularly in duties like chatbots and digital assistants (e.g., is the person asking a query or making a command?).

- Speech Acts: Recognizing the operate of a press release (e.g., is it an assertion, query, request?).

6. Discourse Evaluation: Past Single Sentences

Discourse evaluation entails understanding the connection between sentences or components of the textual content in bigger contexts, akin to paragraphs or conversations.

- Coherence and Cohesion: Guaranteeing that the textual content flows logically, with correct hyperlinks between concepts and sentences.

- Subject Modeling: Figuring out the primary themes or subjects inside a group of paperwork (e.g., Latent Dirichlet Allocation, or LDA).

- Summarization: Lowering a doc or textual content to its important content material, whereas sustaining its which means. This may be extractive (choosing components of the textual content) or abstractive (producing a brand new abstract).

- Description: Understanding the construction and coherence of longer items of textual content. This part entails analyzing how sentences join and circulate collectively to kind a coherent discourse.

- Instance: Understanding that in a narrative, “John was drained. He went to mattress early,” “He” refers to “John.”

- Within the sentences “John went to the shop. He purchased some milk,” the coreference decision identifies that “He” refers to “John.”

- This remaining part seems on the context surrounding a number of sentences and paragraphs to know the general circulate and which means of the textual content. It’s like analyzing the context across the Lego construction to know its position inside a bigger panorama.

- Anaphora Decision: This entails figuring out what a pronoun refers to. For instance, in “The canine chased the ball. It was quick,” “it” refers back to the “ball”.

- Coherence Evaluation: This step analyzes the logical construction and connections between completely different components of a textual content. It helps the system determine the general message, argument, and intent of the textual content.

7. Textual content Technology

This part entails producing human-like textual content from structured knowledge or primarily based on a given immediate.

- Language Modeling: Predicting the subsequent phrase or sequence of phrases given some context (e.g., GPT-3).

- Machine Translation: Translating textual content from one language to a different.

- Textual content-to-Speech (TTS) and Speech-to-Textual content (STT): Changing written textual content into spoken language or vice versa.

8. Submit-Processing and Analysis

After the primary NLP duties are carried out, outcomes should be refined and evaluated for high quality.

- Analysis Metrics: Measures like accuracy, precision, recall, F1-score, BLEU rating (for translation), ROUGE rating (for summarization), and so forth., are used to evaluate the efficiency of NLP fashions.

- Error Evaluation: Figuring out and understanding errors to enhance mannequin efficiency.

9. Utility/Deployment

Lastly, the NLP mannequin is built-in into real-world purposes. This might contain:

- Chatbots and Digital Assistants: Functions like Siri, Alexa, or customer support bots.

- Search Engines: Bettering search relevance by higher understanding queries.

- Machine Translation Techniques: Automated language translation instruments (e.g., Google Translate).

- Sentiment Evaluation Techniques: For analyzing public opinion in social media, evaluations, and so forth.

- Speech Recognition Techniques: For changing speech into textual content and vice versa.

10. Machine Studying/Deep Studying Fashions

Reinforcement Studying: Utilized in programs like chatbots the place actions are taken primarily based on person interplay.Key Concerns

Description: As soon as the textual content has been processed, varied machine studying or deep studying fashions are used to carry out duties akin to classification, translation, summarization, and query answering.

Supervised Studying: Algorithms are educated on labeled knowledge to carry out duties like sentiment evaluation, classification, or named entity recognition.

Unsupervised Studying: Algorithms are used to search out patterns in unlabeled knowledge, like matter modeling or clustering.

- Multilingual NLP: Dealing with textual content in a number of languages and addressing challenges like translation, tokenization, and phrase sense disambiguation.

- Bias in NLP: Addressing bias in knowledge and fashions to make sure equity and inclusivity.

- Area-Particular NLP: Customizing NLP for specialised fields like medication (bioNLP), legislation (authorized NLP), or finance.

These phases characterize a typical NLP pipeline, however relying on the applying and drawback at hand, not all phases could also be required or carried out in the identical order.

Conclusion

In conclusion, understanding the phases of NLP isn’t only a technical train; it’s a journey into the very coronary heart of how machines are studying to talk our language. As we progress on this discipline, we’ll proceed unlocking new methods for people and machines to speak and collaborate seamlessly.

Every of those phases performs an important position in enabling NLP programs to successfully interpret and generate human language. Relying on the duty (like machine translation, sentiment evaluation, and so forth.), some phases could also be emphasised greater than others.

Submit Views: 8