Stable Diffusion (SD) is a generative AI model that uses latent diffusion to generate images. This deep learning model can generate high-quality images from text descriptions, from other images, and more, changing the way artists and creators approach image creation. Despite its powerful capabilities, learning to use Stable Diffusion effectively can involve a steep learning curve.

In this comprehensive guide, we’ll break down the complexities. We’ll cover everything from the fundamentals of how it works to advanced techniques for fine-tuning the model to create unique and personalized images.

So, let’s dive in for a creative journey into Stable Diffusion!

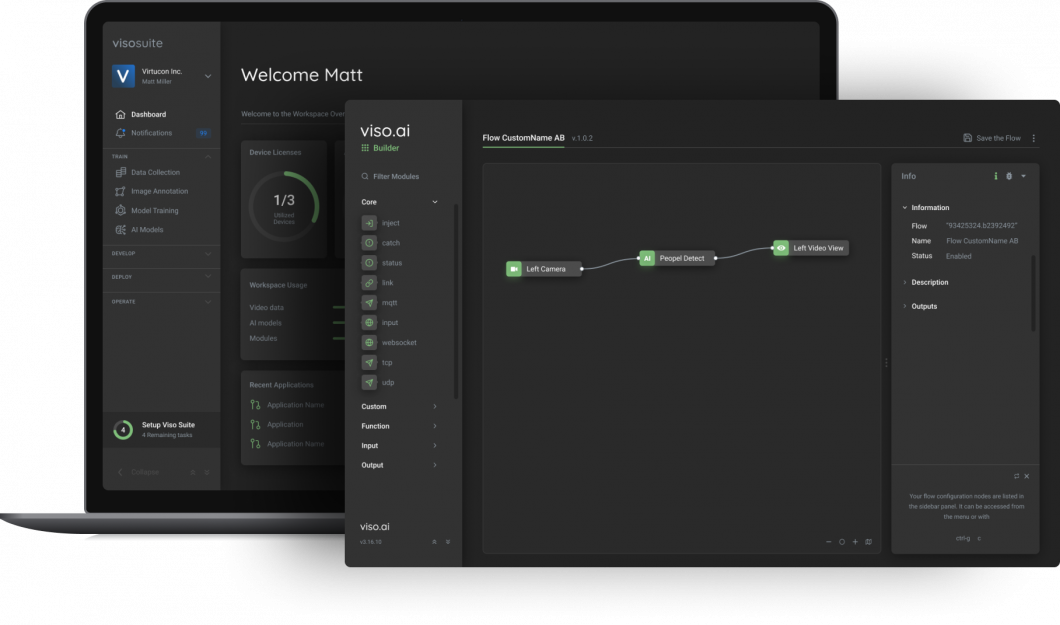

About us: Viso Suite is a flexible and scalable infrastructure developed for enterprises to integrate computer vision into their tech ecosystems seamlessly. Viso Suite enables enterprise ML teams to train, deploy, manage, and secure computer vision applications in a single interface. Book a demo with our team of experts to learn more.

Understanding Stable Diffusion

Before diving into the practical aspects of Stable Diffusion, it is important to understand the inner workings of this model. While it shares some core concepts with other generative AI models, there are also key differences. The concepts of latent spaces and diffusion processes are shared, but Stable Diffusion (SD) has its own architecture and training methodology.

By understanding how SD works, you’ll gain the knowledge needed to use this model, craft effective prompts, and even fine-tune it. So, let’s start by answering some fundamental questions.

What is Stable Diffusion?

Stable Diffusion is a latent diffusion generative model made by researchers at CompVis. Latent diffusion models grew out of probabilistic diffusion models, which built on earlier methods that use probability to sample images. After GANs and VAEs, latent diffusion arrived as a powerful development in image generation with many capabilities. These capabilities, listed below, result from integrating attention mechanisms from Transformers.

- Text-to-image: Conditioning generation on text prompts.

- Inpainting: Masking part of an image and generating new content in its place.

- Super Resolution: Increasing image resolution and quality.

- Semantic Synthesis: Generating images based on semantic masks.

- Image conditioning: Conditioning the generation on an image, creating image variations or upscaling the image.

These capabilities made latent diffusion a state-of-the-art method for image generation. Later, when the model checkpoints were released, researchers and developers built custom models, making Stable Diffusion models faster, more memory efficient, and more performant. Since its release, newer versions have followed, such as the ones below.

- SD v1.1-1.4: Released by CompVis at 256×256 and 512×512 resolutions, with almost 1,000,000 training steps for v1.4.

- SD 1.5: Released by RunwayML with different weights, resuming from earlier checkpoints.

- SD 2.0-2.1: Trained from scratch by Stability AI, with resolutions up to 768×768.

- SD XL 1.0/Turbo: Also from Stability AI, this pipeline uses an SD base model to deliver improved results and better image-to-image features.

- SD 3.0: An early preview of a family of models, also by Stability AI, with parameter counts ranging from 800M to 8B, aimed at a new level of realism in image generation.

Let’s now look at the basic architecture of Stable Diffusion models and their inner workings.

How Does Stable Diffusion Work?

Generally speaking, diffusion models are trained to remove random noise, called Gaussian noise, step by step until we reach the sample of interest, which is the image. Diffusion models are probability-based, modeling the likelihood of an image’s appearance.
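To make the noising idea concrete, here is a minimal sketch of the forward (noise-adding) process that a diffusion model learns to reverse. It assumes a DDPM-style linear beta schedule with 1,000 steps; the schedule values and the helper names `linear_alpha_bars` and `add_noise` are illustrative assumptions, not Stable Diffusion’s actual configuration.

```python
import math
import random

def linear_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule (assumed values)."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Sample x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * noise."""
    signal, noise = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [signal * v + noise * rng.gauss(0.0, 1.0) for v in x0]

alpha_bars = linear_alpha_bars()
rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]                         # a toy "image" with three values
x_early = add_noise(x0, alpha_bars[10], rng)   # early step: mostly signal
x_late = add_noise(x0, alpha_bars[-1], rng)    # last step: almost pure noise
```

The share of the original signal shrinks at every step, so by the final step the sample is close to pure Gaussian noise. Generation runs this in reverse, starting from noise and removing a little of it at each step.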

These models showed great results, but the downside was the speed and resource-intensive nature of the denoising process. Denoising is a sequential process that happens in pixel space, which can become huge for high-resolution images.

The latent diffusion architecture reduces memory usage and computational complexity by applying the diffusion process to a lower-dimensional latent space. This distinguishes latent diffusion models like Stable Diffusion from traditional ones: they operate on compressed image representations instead of in pixel space. To do this, latent diffusion has the components below.

- U-Net Backbone: Uses the same U-Net as earlier diffusion models, with the addition of cross-attention layers for the denoising process.

- VAE: An encoder encodes input images into latent representations for the U-Net, while a decoder transforms the output back into an image.

- Conditioning: Allows latent diffusion models to be conditioned in several ways; for example, text conditioning enables text-to-image generation.
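The memory saving from working in latent space can be estimated with simple arithmetic. The sketch below assumes the commonly used Stable Diffusion VAE configuration, which downsamples each spatial side by a factor of 8 and produces 4 latent channels. Treat those two numbers as assumptions and check the configuration of the checkpoint you use.

```python
def pixel_values(height, width, channels=3):
    """Number of values in an RGB image tensor."""
    return height * width * channels

def latent_values(height, width, downsample=8, latent_channels=4):
    """Number of values in the latent tensor (assumed factor 8, 4 channels)."""
    return (height // downsample) * (width // downsample) * latent_channels

for side in (512, 1024):
    px, lt = pixel_values(side, side), latent_values(side, side)
    print(f"{side}x{side}: pixels={px:,} latents={lt:,} ratio={px // lt}x")
```

Under these assumptions the U-Net processes 48 times fewer values per image than it would in pixel space, which is where most of the speed and memory benefit comes from.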

During inference, the Stable Diffusion model takes a latent seed and a condition. The seed is used to generate a random latent image representation, and the condition is encoded into embeddings.

For text-to-image models, the CLIP-ViT text encoder is used to generate text embeddings. The U-Net then denoises the generated noise while being conditioned on those embeddings. The output of the U-Net is then used to compute a denoised latent image representation via a scheduler algorithm.
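The role of the latent seed can be illustrated without any model: the same seed always produces the same starting noise, which is why fixing the seed makes a generation repeatable. This is a toy sketch using Python’s `random` module. Real pipelines draw the noise with PyTorch, and the latent shape used here (4 channels at one eighth of the image size) is an assumption.

```python
import random

def initial_latents(seed, height=1024, width=1024, channels=4, downsample=8):
    """Draw Gaussian noise shaped like a latent image (toy stand-in for torch.randn)."""
    rng = random.Random(seed)
    h, w = height // downsample, width // downsample
    return [[[rng.gauss(0.0, 1.0) for _ in range(w)] for _ in range(h)]
            for _ in range(channels)]

a = initial_latents(seed=42)
b = initial_latents(seed=42)
c = initial_latents(seed=7)
print(len(a), len(a[0]), len(a[0][0]))  # channels, latent height, latent width
print(a == b, a == c)                   # same seed matches, different seed does not
```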

Now that we have enough knowledge of Stable Diffusion and its inner workings, we can move on to the practical steps.

Getting Started With Stable Diffusion

Image generation models, especially Stable Diffusion, require a large amount of training data, so training from scratch is usually not the best path with these models. However, inference and fine-tuning are great ways to use Stable Diffusion models.

In this section, we’ll delve into the practical side of using Stable Diffusion. We will set up our environment on Kaggle notebooks, which provide free access to GPUs to run the model. We’ll leverage the Diffusers library to streamline the process, and for this guide, we’ll focus on Stable Diffusion XL 1.0 for different types of inference and parameter tuning. We’ll then look at fine-tuning and the process it involves.

Setup on Kaggle Notebooks

Kaggle notebooks provide good GPU options and an easy setup to work with. Stable Diffusion XL (SDXL) can be heavy to run locally, so using a hosted notebook is helpful. Other options like Google Colab are available, but Colab has placed restrictions on Stable Diffusion usage in its free tier.

To get started, log in or sign up to Kaggle and create a new notebook. Once it is open, you will see the default notebook view.

You can rename the notebook in the top left corner. Next, delete the default cell, as we won’t need it, by right-clicking it and choosing delete. Before starting with the code, let’s also set up the GPU for a smooth run.

Go to the three vertical dots, choose accelerator, and then the P100 GPU. The P100 is a good GPU option that will allow us to run SDXL. Now that we have that set up, press the power button to get the notebook running. To start with our code, let’s install the needed libraries.

pip install diffusers invisible_watermark transformers accelerate safetensors xformers --upgrade

After installing the libraries, we can load Stable Diffusion XL.

Generating Your First Image

Add a code block and then use the following code to import the libraries and load the Stable Diffusion XL pipeline.

from diffusers import DiffusionPipeline
import torch

# Load the SDXL base pipeline in half precision and move it to the GPU
pipe = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0",
    torch_dtype=torch.float16,
    use_safetensors=True,
    variant="fp16",
).to("cuda")

This code may take some time to run, so let’s break it down. We import DiffusionPipeline from the diffusers library, and torch is PyTorch, which lets us work with tensors.

Next, we create the variable pipe, which holds our model. To load the model, we call DiffusionPipeline.from_pretrained and pass it the model repository identifier from the Hugging Face Hub, “stabilityai/stable-diffusion-xl-base-1.0”, as the first parameter. The torch_dtype=torch.float16 parameter sets the data type to 16-bit floating point (FP16), which gives faster computation and reduced memory usage.

The variant parameter selects the FP16 weight files in the repository, and the use_safetensors parameter tells the loader to read the weights from the safetensors format. The last part, .to("cuda"), moves the pipeline to the GPU.
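The saving from half precision is easy to estimate: FP16 stores each weight in 2 bytes instead of the 4 bytes used by FP32. The sketch below uses a round figure of 2.6 billion parameters purely as an illustrative assumption. Substitute the real parameter count of the model you load.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2}

def weight_memory_gib(num_params, dtype):
    """Approximate memory for the weights alone (no activations), in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3

num_params = 2_600_000_000  # illustrative assumption, not a measured value
for dtype in ("float32", "float16"):
    print(f"{dtype}: {weight_memory_gib(num_params, dtype):.1f} GiB")
```

Whatever the exact parameter count, the FP16 weights take half the memory of the FP32 weights, which matters on a 16 GB GPU such as the P100.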

The last step before we run inference is to make the generation process faster and more memory efficient.

pipe.enable_xformers_memory_efficient_attention()

Next, let’s create an image!

prompt = "A cat riding a horse and holding a sword"
images = pipe(prompt=prompt).images[0]

The prompt is adjustable, so modify it to whatever you want. When you run it, inference should start, and the generated image will be stored in the images variable. Let’s look at the generated image.

from PIL import Image
import matplotlib.pyplot as plt

# Save the generated PIL image, then display it without axes
images.save("knight_cat.png")
plt.imshow(images)
plt.axis('off')
plt.show()

This code saves your output image as “knight_cat.png” in the output folder on the right side of the Kaggle interface. We also display the image using the Matplotlib library. Here is what the output looked like.

Advanced Text-To-Image Generation

That output looked cool, but what if we want more control over the image generation process? We can get it using some advanced features. To do so, we need to load an additional pipeline that gives us more options over the generation process: the refiner pipeline. Assuming you still have your notebook running and the Stable Diffusion XL pipeline loaded as pipe, we can use the code below to load the refiner.

# Load the SDXL refiner, reusing the second text encoder and VAE from the base pipeline
refiner = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-refiner-1.0",
    text_encoder_2=pipe.text_encoder_2,
    vae=pipe.vae,
    torch_dtype=torch.float16,
    use_safetensors=True,
    variant="fp16",
).to("cuda")

The refiner takes parameters similar to the SDXL pipeline, with a few additions such as the vae parameter, which reuses the VAE from the pipe we loaded, and text_encoder_2, which does the same for the text encoder. Now that we have loaded the refiner, we can define the options that control the generation.

n_steps = 60
high_noise_frac = 0.75
prompt = "Neon-lit cyberpunk city, rain-slicked streets reflecting the vibrant signs, flying vehicles, lone figure in a trench coat disappearing into an alley."

These options strongly affect the generation process. n_steps determines the number of denoising steps the model will take. high_noise_frac is a fraction that determines how the work is split between the base model (pipe) and the refiner. In our case, we used 0.75, which means the base model does 75% of the work (45 steps) and the refiner does the remaining 25% (15 steps).
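The split described above is a simple multiplication, sketched here so you can see how other values of high_noise_frac divide the work. The helper name `split_steps` is hypothetical. Diffusers applies the fraction to the scheduler’s timesteps internally, so the exact step counts in a real run may differ slightly.

```python
def split_steps(n_steps, high_noise_frac):
    """Return (base_steps, refiner_steps) for a given split fraction."""
    base_steps = round(n_steps * high_noise_frac)
    return base_steps, n_steps - base_steps

for frac in (0.6, 0.75, 0.9):
    base, refine = split_steps(60, frac)
    print(f"high_noise_frac={frac}: base={base} steps, refiner={refine} steps")
```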

Before generating an image with our settings, we can take an additional step that helps reduce GPU memory usage.

pipe.enable_model_cpu_offload()

Now, to run inference on both pipelines, we can do the following.

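# The base model handles the first, high-noise part of the schedule and returns latents
image = pipe(
    prompt=prompt,
    num_inference_steps=n_steps,
    denoising_end=high_noise_frac,
    output_type="latent",
).images

# The refiner continues from those latents and finishes the remaining steps
image = refiner(
    prompt=prompt,
    num_inference_steps=n_steps,
    denoising_start=high_noise_frac,
    image=image,
).images[0]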

Running this will run both the Stable Diffusion XL pipeline and the refiner with the settings we defined. Then we can display and save the generated image just like before.

import matplotlib.pyplot as plt

# Save the refined image (stored in image, not images), then display it
image.save("cyberpunk-city.png")
plt.imshow(image)
plt.axis('off')
plt.show()

Here is what the output looks like.

Trying different values for n_steps and high_noise_frac will let you explore how they change the generated image. A quick tip: try using different prompts for the refiner and the base.

Exploring Other Features

We previously mentioned the capabilities of Stable Diffusion in other tasks like image-to-image generation and inpainting. We can use almost the same code for these features, and reading the documentation is helpful as well. Here is a quick example of the image-to-image feature, assuming you have run the previous code.

from diffusers import AutoPipelineForImage2Image
from diffusers.utils import load_image, make_image_grid

# Reuse the components of the already loaded base pipeline to save memory
pipeline = AutoPipelineForImage2Image.from_pipe(pipe).to("cuda")

url = "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/sdxl-text2img.png"
init_image = load_image(url)
prompt = "a cat wearing sunglasses in the jungle"

image = pipeline(prompt, image=init_image, strength=0.8, guidance_scale=10.5).images[0]
make_image_grid([init_image, image], rows=1, cols=2)

This code uses an example image from the Hugging Face datasets as the condition and loads it through the URL. You can use your own image there. We load the image-to-image pipeline, but to save memory we build it from our already loaded pipe.

The strength parameter controls how far the result may depart from the initial image: higher values add more noise and so preserve less of it. The guidance scale determines how closely the model follows the text prompt. Below is what the output looks like.

We can see how the generated image (on the right) followed the style of the condition image on the left. Image-to-image generation is a cool feature of Stable Diffusion that shows the power of the latent diffusion architecture and the different conditions we can use. Our advice is to explore the documentation and try different tasks, parameters, and even other Stable Diffusion versions. The code is similar, so go out there and explore.
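The strength and guidance scale parameters used in the image-to-image call can be sketched in a few lines. The first helper follows the way Diffusers image-to-image pipelines have derived the number of steps actually run from strength, as far as we know. The second is the standard classifier-free guidance formula. Both function names are hypothetical, and the two-element lists stand in for full noise tensors.

```python
def effective_steps(num_inference_steps, strength):
    """Steps actually run in image-to-image: only the last `strength` share of the schedule."""
    return min(int(num_inference_steps * strength), num_inference_steps)

def apply_guidance(noise_uncond, noise_text, guidance_scale):
    """Classifier-free guidance: push the prediction away from the unconditional one."""
    return [u + guidance_scale * (t - u) for u, t in zip(noise_uncond, noise_text)]

print(effective_steps(50, 0.8))   # part of the schedule is skipped, keeping some of the input
print(effective_steps(50, 1.0))   # the whole schedule runs, so the input is fully noised
print(apply_guidance([0.0, 1.0], [1.0, 1.0], guidance_scale=10.5))
```

A higher guidance scale amplifies the difference between the text-conditioned and unconditional predictions, which is why large values follow the prompt more closely, sometimes at the cost of image quality.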

Older versions like SD 1.5 may allow more complex parameter tuning, and perhaps even a wider range of tasks. These models can perform well while using fewer computational resources, potentially making experimentation easier. To take the next step towards mastering Stable Diffusion, let us explore fine-tuning.

Fine-Tuning Stable Diffusion

Fine-tuning, a form of transfer learning, is a technique used in deep learning to further train a pre-trained model on a smaller, targeted dataset. This allows the model to keep its capabilities while gaining new, specialized knowledge. So, we can take a model like Stable Diffusion, which has been trained on a massive dataset of images, and refine it further on a smaller, more focused dataset.

Let’s explore how this works, its uses, and popular methods for fine-tuning Stable Diffusion.

What is Fine-tuning and Why Do It?

Generalization is a big problem for computer vision and image generation models. You might have a specific niche use case that was not represented well in the model’s training data, on top of the inevitable bias in computer vision datasets. Fine-tuning addresses this by adapting the model to your data.

This approach usually involves several steps, such as collecting the dataset, then preprocessing and cleaning it to match the input Stable Diffusion expects. The dataset will usually contain hundreds or thousands of images, which is still much smaller than the original training data.

The main concept in fine-tuning is freezing some layers: the initial layers of the model, which usually capture basic features and textures, are kept unchanged (frozen), while later layers are adjusted and continue training on the new data.

Another important hyperparameter is the learning rate, which determines how much a model’s weights are adjusted during training. Like any technique, fine-tuning has advantages and drawbacks.
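Freezing and the learning rate can both be seen in a single gradient step. The following is a toy sketch with one scalar weight per layer and made-up gradients. The layer names and the `sgd_step` helper are hypothetical and stand in for what a training framework such as PyTorch does for you.

```python
def sgd_step(params, grads, trainable, learning_rate):
    """Update only the layers marked trainable; frozen layers keep their weights."""
    return {
        name: (w - learning_rate * grads[name]) if trainable[name] else w
        for name, w in params.items()
    }

# Hypothetical two-layer model with one scalar weight per layer
params = {"early_layer": 1.0, "late_layer": 1.0}
grads = {"early_layer": 0.5, "late_layer": 0.5}          # made-up gradients
trainable = {"early_layer": False, "late_layer": True}   # freeze the early layer

small_lr = sgd_step(params, grads, trainable, learning_rate=0.01)
large_lr = sgd_step(params, grads, trainable, learning_rate=0.5)
print(small_lr)
print(large_lr)
```

The frozen layer is identical in both results, while the trainable layer moves a little with the small learning rate and a lot with the large one. Fine-tuning usually favors small learning rates so the model does not drift far from what it already knows.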

Advantages:

- Performance: Allows Stable Diffusion to perform better on a specific niche.

- Efficiency: Fine-tuning a pre-trained model is much faster and cheaper than training from scratch.

- Democratization: Makes models more accessible across different niches.

Drawbacks:

- Overfitting: Fine-tuning with the wrong parameters can lead the model to overfit and forget what it learned from its general training data.

- Reliance: When fine-tuning a pre-trained model, we rely on its earlier training being adequate to build on. Also, if the original model had biases or security issues, we can expect those to persist.

Types of Fine-tuning for Stable Diffusion

Fine-tuning Stable Diffusion has been a popular pursuit for many developers. Several methods have been developed to fine-tune these models easily, even without code.

- DreamBooth: A fine-tuning technique that can teach Stable Diffusion new concepts using only 3 to 5 images, allowing anyone to personalize their model with a few images of the subject. (Applied to Stable Diffusion 1.4)

- Textual Inversion: This approach learns new concepts from just a few example images. It does so by creating new “words” within the embedding space of the text encoder used in the image generation pipeline. These special words can then be used in text prompts to give very granular control over the generated images. (Applied to Stable Diffusion 1.5)

- Text-To-Image Fine-Tuning: This is the classical way of fine-tuning, where you prepare a dataset in the expected format and train some layers of the model on it. This method allows for greater control over the process, but at the same time, it is easy to overfit or run into issues like catastrophic forgetting.
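The idea behind Textual Inversion in the list above can be sketched with a toy embedding table: the existing vocabulary stays frozen, one new placeholder token is added, and only its vector is trained. Everything here is an illustrative assumption, including the token name `<my-cat>`, the 4-dimensional embeddings, and the made-up gradients. A real run would backpropagate the diffusion loss through the text encoder instead.

```python
import random

rng = random.Random(0)
EMBED_DIM = 4  # tiny dimension for illustration; real text encoders use hundreds

# Hypothetical frozen vocabulary of a text encoder
embeddings = {word: [rng.gauss(0, 1) for _ in range(EMBED_DIM)]
              for word in ("a", "photo", "of", "cat")}
frozen = set(embeddings)

# Add one new placeholder token, initialised from a related word
embeddings["<my-cat>"] = list(embeddings["cat"])

def train_step(embeddings, grads, learning_rate=0.1):
    """Update only tokens that are not frozen (here: the new placeholder)."""
    for token, grad in grads.items():
        if token not in frozen:
            embeddings[token] = [v - learning_rate * g
                                 for v, g in zip(embeddings[token], grad)]

before_cat = list(embeddings["cat"])
fake_grads = {"cat": [1.0] * EMBED_DIM, "<my-cat>": [1.0] * EMBED_DIM}  # made up
train_step(embeddings, fake_grads)

# The learned token is then used in prompts like any other word
prompt_tokens = "a photo of <my-cat>".split()
prompt_vectors = [embeddings[t] for t in prompt_tokens]
```

Because only one small vector changes, the rest of the model is untouched, which is why the learned concept can be shared as a tiny file and dropped into prompts.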

What’s Next for Stable Diffusion?

Stable Diffusion has changed the world of image generation for good. Whether it is producing photorealistic landscapes, creating characters, or even making social media posts, the only limit is our imagination. Researchers are also using Stable Diffusion and related diffusion models for tasks other than image generation, like Natural Language Processing (NLP) and audio tasks.

When it comes to real-world impact, we are already seeing it in many industries. Artists and designers are creating graphics, artwork, and logos. Marketing teams are making engaging campaigns, and educators are exploring personalized learning experiences using this technology. We can go even beyond that with video creation and image editing.

Using Stable Diffusion is fairly easy through platforms like Hugging Face or libraries like Diffusers, and newer tools like ComfyUI are making it even more accessible with no-code interfaces. This means more people can experiment with it. However, as with any powerful tool, we must consider the ethical implications. Issues like deepfakes, copyright infringement, and biases in the training data are real concerns and raise important questions about responsible AI use.

Where will Stable Diffusion and generative AI take us next? The future of AI-generated content is exciting, and it is up to us to take a responsible path, ensuring this technology enhances creativity, drives innovation, and respects ethical boundaries.

If you enjoyed reading this blog, we recommend our other blogs: