In our previous post, we discussed considerations around choosing a vector database for our hypothetical retrieval augmented generation (RAG) use case. But when building a RAG application we often need to make another important decision: choosing a vector embedding model, a critical component of many generative AI applications.

A vector embedding model is responsible for transforming unstructured data (text, images, audio, video) into a vector of numbers that captures semantic similarity between data objects. Embedding models are widely used beyond RAG applications, including recommendation systems, search engines, databases, and other data processing systems.

Understanding their purpose, internals, advantages, and disadvantages is crucial, and that’s what we’ll cover today. While we’ll be discussing text embedding models only, models for other types of unstructured data work similarly.

What Is an Embedding Model?

Machine learning models don’t work with text directly; they require numbers as input. Since text is ubiquitous, the ML community has developed many solutions over time for converting text to numbers. There are many approaches of varying complexity, but we’ll review just a few of them.

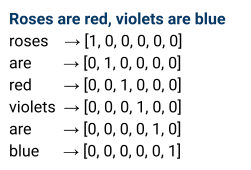

A simple example is one-hot encoding: treat the words of a text as categorical variables and map each word to a vector of 0s with a single 1.

Unfortunately, this embedding approach is not very practical: it leads to a large number of unique categories and results in unmanageable dimensionality of output vectors in most practical cases. Also, one-hot encoding doesn’t place similar words closer to one another in a vector space.
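To make both drawbacks concrete, here is a minimal sketch of one-hot encoding in plain Python. The helper names (`one_hot_encode`, `dot`) and the three-word vocabulary are illustrative assumptions, not part of any library.

```python
def one_hot_encode(words):
    """Map each distinct word to a vector of 0s with a single 1."""
    vocabulary = sorted(set(words))
    vectors = {}
    for position, word in enumerate(vocabulary):
        vector = [0] * len(vocabulary)  # one dimension per distinct word
        vector[position] = 1
        vectors[word] = vector
    return vectors


def dot(a, b):
    """Dot product, used here as a simple similarity measure."""
    return sum(x * y for x, y in zip(a, b))


vectors = one_hot_encode(["cat", "kitten", "car"])

# The vector length equals the vocabulary size, so it grows with every new word.
print(len(vectors["cat"]))

# Every pair of distinct words has a dot product of 0, so "cat" is
# exactly as far from "kitten" as it is from "car".
print(dot(vectors["cat"], vectors["kitten"]), dot(vectors["cat"], vectors["car"]))
```

With a realistic vocabulary of tens of thousands of words, each vector would have tens of thousands of dimensions and still carry no information about which words are related.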

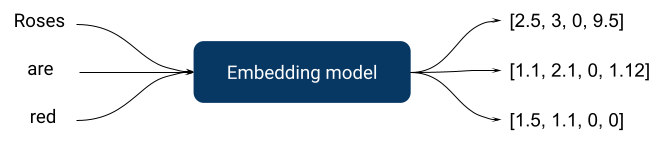

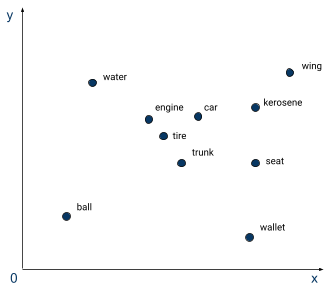

Embedding models were invented to tackle these issues. Just like one-hot encoding, they take text as input and return vectors of numbers as output, but they are more complex because they are trained on supervised tasks, often using a neural network. A supervised task can be, for example, predicting a product review sentiment score. In this case, the resulting embedding model would place reviews of similar sentiment closer to each other in a vector space. The choice of supervised task is critical to producing relevant embeddings when building an embedding model.

The diagram above shows word embeddings only, but we often need more than that, since human language is more complex than just many words put together. Semantics, word order, and other linguistic parameters should all be taken into account, which means we need to take it to the next level: sentence embedding models.

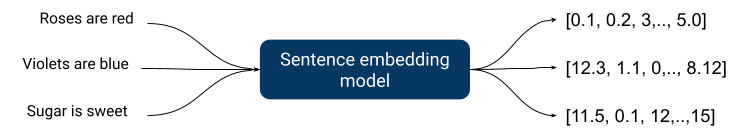

Sentence embeddings associate an input sentence with a vector of numbers and, as expected, are much more complex internally since they have to capture more complex relationships.

Thanks to progress in deep learning, all state-of-the-art embedding models are created with deep neural nets, since they better capture the complex relationships inherent in human language.

A good embedding model should:

- Be fast, since often it is just a preprocessing step in a larger application

- Return vectors of manageable dimensions

- Return vectors that capture enough information about similarity to be practical

Let’s now quickly look into how most embedding models are organized internally.

Modern Neural Networks Architecture

As we just mentioned, all well-performing state-of-the-art embedding models are deep neural networks.

This is an actively developing field, and most top-performing models are associated with some novel architecture improvement. Let’s briefly cover two important architectures: BERT and GPT.

BERT (Bidirectional Encoder Representations from Transformers) was published in 2018 by researchers at Google and described the application of bidirectional training of the “transformer”, a popular attention model, to language modeling. Standard transformers include two separate mechanisms: an encoder for reading text input and a decoder that makes a prediction.

BERT uses an encoder that reads the entire sentence of words at once, which allows the model to learn the context of a word based on all of its surroundings, left and right, unlike legacy approaches that looked at a text sequence from left to right or right to left. Before feeding word sequences into BERT, some words are replaced with [MASK] tokens, and then the model attempts to predict the original value of the masked words based on the context provided by the other, non-masked words in the sequence.
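The masking step can be illustrated with a simplified sketch. The function name `mask_tokens`, whitespace tokenization, and the default 15% mask rate are assumptions made for illustration; the real BERT preprocessing works on subword tokens and treats the selected positions in a more nuanced way.

```python
import random


def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Replace a random subset of tokens with [MASK].

    Returns the masked sequence (the model input) and a dict mapping each
    masked position to its original token (the prediction targets).
    """
    rng = random.Random(seed)  # seeded so the example is reproducible
    masked = []
    targets = {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_rate:
            masked.append("[MASK]")
            targets[position] = token
        else:
            masked.append(token)
    return masked, targets


tokens = "the quick brown fox jumps over the lazy dog".split()
masked, targets = mask_tokens(tokens, mask_rate=0.3, seed=1)
print(masked)   # input the model sees
print(targets)  # what it is trained to recover at the masked positions
```

During training, the model sees the whole masked sequence at once, so it can use the words on both sides of each [MASK] to predict the original token.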

Standard BERT doesn’t perform very well in most benchmarks, and BERT models require task-specific fine-tuning. But it is open source, has been around since 2018, and has relatively modest system requirements (it can be trained on a single medium-range GPU). As a result, it became very popular for many text-related tasks. It is fast, customizable, and small. For example, the very popular all-MiniLM model is a modified version of BERT.

GPT (Generative Pre-Trained Transformer) by OpenAI is different. Unlike BERT, it is unidirectional, i.e. text is processed in one direction, and it uses a decoder from the transformer architecture that is suitable for predicting the next word in a sequence. These models are slower and produce very high-dimensional embeddings, but they usually have many more parameters, don’t require fine-tuning, and are more applicable to many tasks out of the box. GPT is not open source and is available as a paid API.

Context Length and Training Data

Another important parameter of an embedding model is context length. Context length is the number of tokens a model can remember when working with a text. A longer context window allows the model to understand more complex relationships within a wider body of text. As a result, models can provide outputs of higher quality, e.g. capture semantic similarity better.

To leverage a longer context, training data should include longer pieces of coherent text: books, articles, and so on. However, increasing context window length increases the complexity of a model and increases compute and memory requirements for training.

There are methods that help manage resource requirements, e.g. approximate attention, but they do this at a cost to quality. That’s another trade-off that affects quality and costs: larger context lengths capture more complex relationships of a human language, but require more resources.

Also, as always, the quality of training data is very important for all models. Embedding models are no exception.

Semantic Search and Information Retrieval

Using embedding models for semantic search is a relatively new approach. For decades, people used other technologies: boolean models, latent semantic indexing (LSI), and various probabilistic models.

Some of these approaches work reasonably well for many existing use cases and are still widely used in the industry.

One of the most popular traditional probabilistic models is BM25 (BM is “best matching”), a search relevance ranking function. It is used to estimate the relevance of a document to a search query and ranks documents based on the query terms appearing in each indexed document. Only recently have embedding models started consistently outperforming it, but BM25 is still used a lot since it is simpler than using embedding models, has lower compute requirements, and its results are explainable.
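To show why BM25 is considered simple and explainable, here is a minimal sketch of one common variant of the scoring function. The function name `bm25_scores`, whitespace tokenization, the parameter defaults `k1=1.5` and `b=0.75`, and the smoothed IDF formula are assumptions for illustration; production search engines differ in the details.

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Score every document against the query with a basic BM25 variant."""
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    avg_len = sum(len(doc) for doc in tokenized) / n_docs

    # Document frequency: in how many documents each term appears.
    doc_freq = Counter()
    for doc in tokenized:
        doc_freq.update(set(doc))

    scores = []
    for doc in tokenized:
        term_freq = Counter(doc)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue  # terms absent from the document contribute nothing
            # Rare terms get a higher weight (smoothed, always positive).
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1)
            # Term frequency saturates via k1; b normalizes for document length.
            tf = term_freq[term]
            length_norm = 1 - b + b * len(doc) / avg_len
            score += idf * tf * (k1 + 1) / (tf + k1 * length_norm)
        scores.append(score)
    return scores


documents = [
    "the cat sat on the mat",
    "dogs chase cats",
    "vector databases store embeddings",
]
print(bm25_scores("vector embeddings", documents))
```

Each document's score is a sum of per-term contributions, so it is easy to explain why a document ranked where it did. The flip side is that only exact term matches count: in this sketch, "cat" in a query would not match "cats" in a document.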

Benchmarks

Not every model type has a comprehensive evaluation approach that helps to choose an existing model.

Fortunately, text embedding models have common benchmark suites such as:

The article “BEIR: A Heterogeneous Benchmark for Zero-shot Evaluation of Information Retrieval Models” proposed a reference set of benchmarks and datasets for information retrieval tasks. The original BEIR benchmark consists of a set of 19 datasets and methods for search quality evaluation. Methods include question-answering, fact-checking, and entity retrieval. Now anyone who releases a text embedding model for information retrieval tasks can run the benchmark and see how their model ranks against the competition.

The Massive Text Embedding Benchmark (MTEB) includes BEIR and other components that cover 58 datasets and 112 languages. A public leaderboard for MTEB results is available.

These benchmarks have been run on a lot of existing models, and their leaderboards are very useful for making an informed choice about model selection.

Using Embedding Models in a Production Environment

Benchmark scores on standard tasks are very important, but they represent only one dimension.

When we use an embedding model for search, we run it twice:

- When doing offline indexing of available data

- When embedding a user query for a search request

There are two important consequences of this.

The first is that we have to reindex all existing data when we change or upgrade an embedding model. All systems built using embedding models should be designed with upgradability in mind because newer and better models are released all the time and, most of the time, upgrading a model is the simplest way to improve overall system performance. An embedding model is a less stable component of the system infrastructure in this case.
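The two runs, and the reason a model change forces a reindex, can be sketched as follows. Everything here is hypothetical: `toy_embed` is a hashed bag-of-words stand-in for a real embedding model, and `VectorIndex` is an in-memory list, not a vector database.

```python
import math
import zlib


def toy_embed(text, dim=256):
    """Placeholder for a real embedding model: hashed bag of words, L2-normalized."""
    vector = [0.0] * dim
    for token in text.lower().split():
        vector[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vector)) or 1.0
    return [x / norm for x in vector]


class VectorIndex:
    def __init__(self, embed, model_version):
        self.embed = embed
        self.model_version = model_version  # stored so stale vectors are detectable
        self.items = []  # list of (document, vector) pairs

    def index(self, documents):
        """Run 1 (offline): embed every document once and store the vectors."""
        self.items = [(doc, self.embed(doc)) for doc in documents]

    def search(self, query, top_k=1):
        """Run 2 (per request): embed the query with the SAME model and rank."""
        query_vector = self.embed(query)
        scored = [
            (sum(q * d for q, d in zip(query_vector, vector)), doc)
            for doc, vector in self.items
        ]
        scored.sort(reverse=True)
        return [doc for _, doc in scored[:top_k]]

    def upgrade(self, embed, model_version):
        """Switching models invalidates stored vectors, so re-embed everything."""
        documents = [doc for doc, _ in self.items]
        self.embed = embed
        self.model_version = model_version
        self.index(documents)


index = VectorIndex(toy_embed, "toy-v1")
index.index(["how to reset a password", "quarterly sales report", "office holiday schedule"])
print(index.search("reset password"))

# A new model may even change the vector length; old vectors cannot be reused.
index.upgrade(lambda text: toy_embed(text, dim=32), "toy-v2")
print(len(index.items[0][1]))
```

Query vectors and stored vectors are only comparable when they come from the same model, which is why `upgrade` re-embeds the whole corpus rather than swapping the function in place. Keeping the original documents available, and recording the model version next to the vectors, is one way to design for upgradability.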

The second consequence of using an embedding model for user queries is that inference latency becomes very important when the number of users goes up. Model inference takes more time for better-performing models, especially if they require a GPU to run: latency higher than 100 ms for a small query is not unheard of for models that have more than 1B parameters. It turns out that smaller, leaner models are still very important in a higher-load production scenario.

The trade-off between quality and latency is real, and we should always keep it in mind when choosing an embedding model.

As we have mentioned above, embedding models help manage output vector dimensionality, which affects the performance of many algorithms downstream. Generally, the smaller the model, the shorter the output vector length, but often it is still too large even with smaller models. That’s when we need to use dimensionality reduction algorithms such as PCA (principal component analysis), SNE / t-SNE (stochastic neighbor embedding), and UMAP (uniform manifold approximation and projection).

Another place we can use dimensionality reduction is before storing embeddings in a database. The resulting vector embeddings will occupy less space and retrieval will be faster, but this comes at a price in downstream quality. Vector databases are often not the primary storage, so embeddings can be regenerated with better precision from the original source data. Using dimensionality reduction helps to reduce the output vector length and, as a result, makes the system faster and leaner.
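As an illustration of dimensionality reduction, here is a small dependency-free sketch of PCA that finds the top components with power iteration. The function name `pca_reduce` and its parameters are assumptions for this example; in practice you would more likely use an established numerical library than hand-written code like this.

```python
import math
import random


def pca_reduce(vectors, k, iterations=200, seed=0):
    """Project vectors onto their top-k principal components."""
    n, d = len(vectors), len(vectors[0])

    # Center the data so components describe variance around the mean.
    mean = [sum(v[j] for v in vectors) / n for j in range(d)]
    centered = [[v[j] - mean[j] for j in range(d)] for v in vectors]

    # Sample covariance matrix (d x d).
    cov = [
        [sum(row[a] * row[b] for row in centered) / (n - 1) for b in range(d)]
        for a in range(d)
    ]

    rng = random.Random(seed)
    components = []
    for _ in range(k):
        # Power iteration converges to the dominant eigenvector of cov.
        w = [rng.gauss(0, 1) for _ in range(d)]
        for _ in range(iterations):
            w = [sum(cov[a][b] * w[b] for b in range(d)) for a in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0:
                break  # no variance left to explain
            w = [x / norm for x in w]
        eigenvalue = sum(
            w[a] * sum(cov[a][b] * w[b] for b in range(d)) for a in range(d)
        )
        components.append(w)
        # Deflation: remove the found component before searching for the next.
        cov = [
            [cov[a][b] - eigenvalue * w[a] * w[b] for b in range(d)]
            for a in range(d)
        ]

    # Each output vector has k coordinates instead of d.
    return [[sum(row[j] * c[j] for j in range(d)) for c in components] for row in centered]


embeddings = [[0.0, 0.0, 0.0], [1.0, 2.0, 0.0], [2.0, 4.0, 0.0], [3.0, 6.0, 0.0]]
reduced = pca_reduce(embeddings, k=1)
print(reduced)
```

The toy vectors above all lie on a single line, so one component preserves the distances between them. Real embeddings spread their variance over many directions, and dropping components loses some of it, which is the quality cost described above.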

Making the Right Choice

There’s an abundance of factors and trade-offs that should be considered when choosing an embedding model for a use case. The score of a potential model in common benchmarks is important, but we should not forget that it’s the larger models that have better scores. Larger models have higher inference time, which can severely limit their use in low-latency scenarios, as often an embedding model is a pre-processing step in a larger pipeline. Also, larger models require GPUs to run.

If you intend to use a model in a low-latency scenario, it’s better to focus on latency first and then see which models with acceptable latency have best-in-class performance. Also, when building a system with an embedding model, you should plan for changes, since better models are released all the time and often upgrading is the simplest way to improve the performance of your system.
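The latency-first selection rule can be written down in a few lines. The `Candidate` type, the model names, and every number below are invented placeholders, not measurements of real models; in practice you would fill them in from a benchmark leaderboard and from latency measured on your own hardware.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    benchmark_score: float  # higher is better
    latency_ms: float       # measured per query on your own hardware


def pick_model(candidates, latency_budget_ms):
    """Filter by latency first, then take the best benchmark score."""
    eligible = [c for c in candidates if c.latency_ms <= latency_budget_ms]
    if not eligible:
        return None  # nothing fits the budget; relax it or change hardware
    return max(eligible, key=lambda c: c.benchmark_score)


# Hypothetical candidates with made-up numbers, for illustration only.
candidates = [
    Candidate("model-small", benchmark_score=56.0, latency_ms=8.0),
    Candidate("model-medium", benchmark_score=61.0, latency_ms=35.0),
    Candidate("model-large", benchmark_score=65.0, latency_ms=140.0),
]

choice = pick_model(candidates, latency_budget_ms=50.0)
print(choice.name if choice else "no model fits the budget")
```

Treating latency as a hard constraint and quality as the objective keeps the highest-scoring model from being chosen when it cannot meet the budget.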

About the author

Nick Volynets is a senior data engineer working with the office of the CTO, where he enjoys being at the heart of DataRobot innovation. He is interested in large scale machine learning and passionate about AI and its impact.