Editor’s note: This article, originally published on March 13, 2023, has been updated.

The mics were live and tape was rolling in the studio where the Miles Davis Quintet was recording dozens of tunes in 1956 for Prestige Records.

When an engineer asked for the next song’s title, Davis shot back, “I’ll play it, and tell you what it is later.”

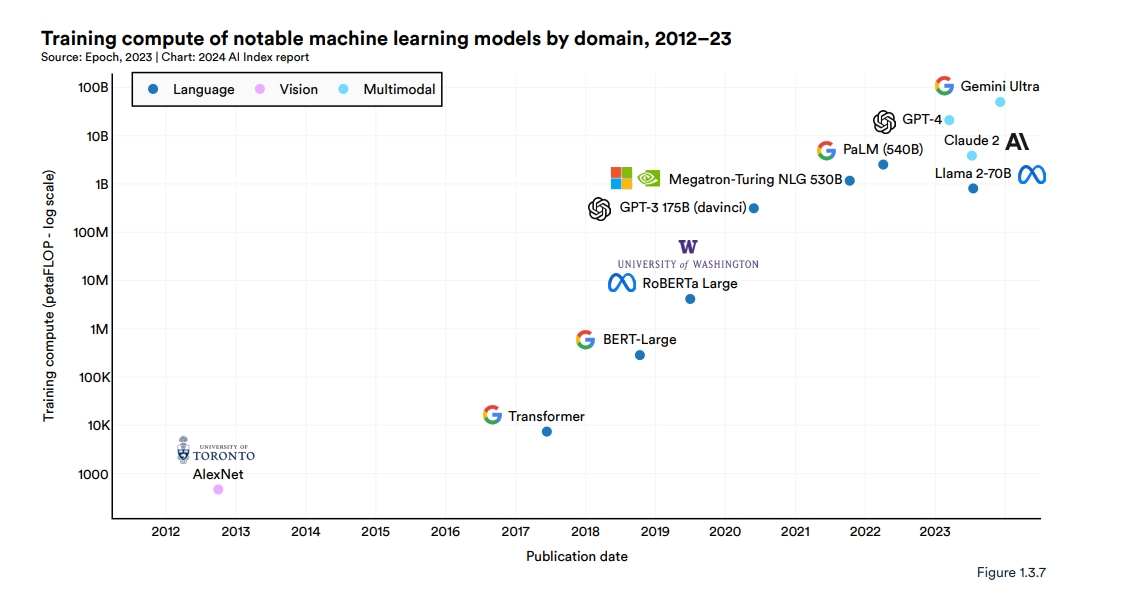

Like the prolific jazz trumpeter and composer, researchers have been generating AI models at a feverish pace, exploring new architectures and use cases. According to the 2024 AI Index report from the Stanford Institute for Human-Centered Artificial Intelligence, 149 foundation models were published in 2023, more than double the number released in 2022.

They said transformer models, large language models (LLMs), vision language models (VLMs) and other neural networks still being built are part of an important new category they dubbed foundation models.

Foundation Models Defined

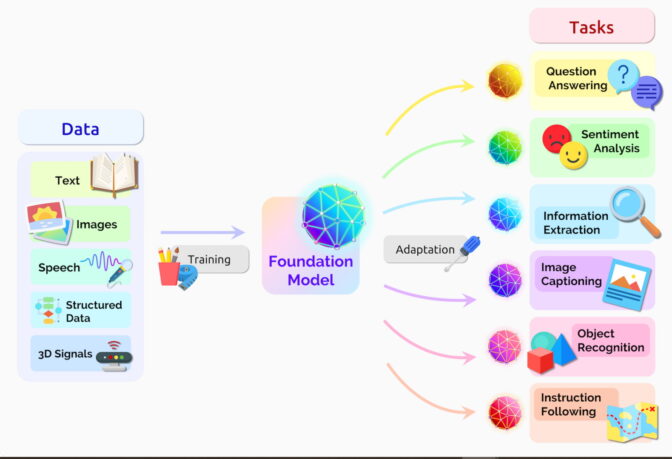

A foundation model is an AI neural network, trained on mountains of raw data, generally with unsupervised learning, that can be adapted to accomplish a broad range of tasks.

Two important concepts help define this umbrella category: Data gathering is easier, and opportunities are as wide as the horizon.

No Labels, Lots of Opportunity

Foundation models generally learn from unlabeled datasets, saving the time and expense of manually describing each item in massive collections.

Earlier neural networks were narrowly tuned for specific tasks. With a little fine-tuning, foundation models can handle jobs from translating text to analyzing medical images to performing agent-based behaviors.

“I think we’ve uncovered a very small fraction of the capabilities of existing foundation models, let alone future ones,” said Percy Liang, the center’s director, in the opening talk of the first workshop on foundation models.

AI’s Emergence and Homogenization

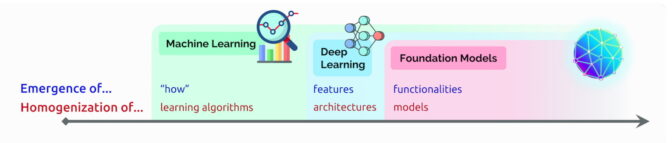

In that talk, Liang coined two terms to describe foundation models:

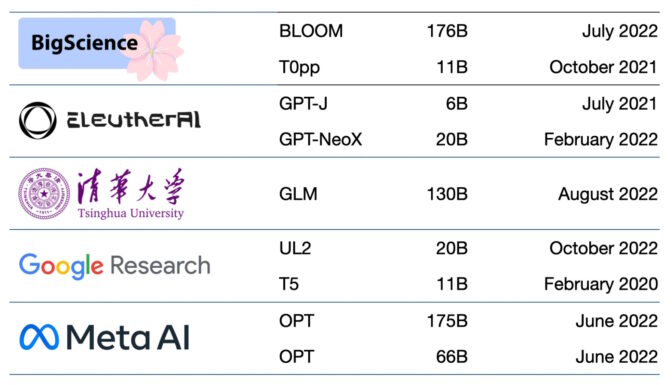

Emergence refers to AI features still being discovered, such as the many nascent skills in foundation models. He calls the blending of AI algorithms and model architectures homogenization, a trend that helped form foundation models. (See chart below.)

The field continues to move fast.


A year after the group defined foundation models, other tech watchers coined a related term: generative AI. It’s an umbrella term for transformers, large language models, diffusion models and other neural networks capturing people’s imaginations because they can create text, images, music, software, videos and more.

Generative AI has the potential to yield trillions of dollars of economic value, said executives from the venture firm Sequoia Capital who shared their views in a recent AI Podcast.

A Brief History of Foundation Models

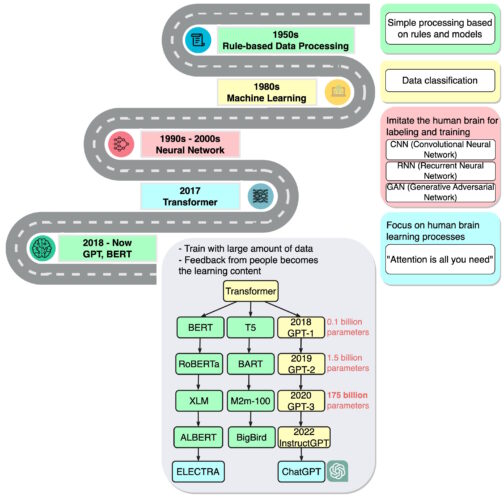

“We are in a time where simple methods like neural networks are giving us an explosion of new capabilities,” said Ashish Vaswani, an entrepreneur and former senior staff research scientist at Google Brain who led work on the seminal 2017 paper on transformers.

That work inspired researchers who created BERT and other large language models, making 2018 “a watershed moment” for natural language processing, a report on AI said at the end of that year.

Google released BERT as open-source software, spawning a family of follow-ons and setting off a race to build ever larger, more powerful LLMs. Then it applied the technology to its search engine so users could ask questions in simple sentences.

In 2020, researchers at OpenAI announced another landmark transformer, GPT-3. Within weeks, people were using it to create poems, programs, songs, websites and more.

“Language models have a wide range of beneficial applications for society,” the researchers wrote.

Their work also showed how large and compute-intensive these models can be. GPT-3 was trained on a dataset with nearly a trillion words, and it sports a whopping 175 billion parameters, a key measure of the power and complexity of neural networks. In 2024, Google released Gemini Ultra, a state-of-the-art foundation model that requires 50 billion petaflops.
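To get a rough feel for what 175 billion parameters means in practice, the short Python sketch below estimates the memory needed just to hold the weights. The bytes-per-parameter figures (2 for 16-bit, 4 for 32-bit floating point) are assumptions for illustration and are not stated in the article; the function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_parameters: int, bytes_per_parameter: int = 2) -> float:
    """Memory needed to store the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Parameter count quoted in the article for GPT-3.
GPT3_PARAMETERS = 175_000_000_000

# Assumption: 2 bytes per parameter (16-bit floats) or 4 bytes (32-bit floats).
fp16_gb = weight_memory_gb(GPT3_PARAMETERS, bytes_per_parameter=2)
fp32_gb = weight_memory_gb(GPT3_PARAMETERS, bytes_per_parameter=4)

print(f"16-bit weights: {fp16_gb:.0f} GB")
print(f"32-bit weights: {fp32_gb:.0f} GB")
```

Under those assumptions the weights alone come to hundreds of gigabytes, before counting activations or optimizer state, which is one reason models of this size are spread across many GPUs.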

“I just remember being kind of blown away by the things that it could do,” said Liang, speaking of GPT-3 in a podcast.

The latest iteration, ChatGPT, trained on 10,000 NVIDIA GPUs, is even more engaging, attracting over 100 million users in just two months. Its release has been called the iPhone moment for AI because it helped so many people see how they could use the technology.

Going Multimodal

Foundation models have also expanded to process and generate multiple data types, or modalities, such as text, images, audio and video. VLMs are one type of multimodal model that can understand video, image and text inputs while producing text or visual output.

Trained on 355,000 videos and 2.8 million images, Cosmos Nemotron 34B is a leading VLM that enables the ability to query and summarize images and video from the physical or virtual world.

From Text to Images

About the same time ChatGPT debuted, another class of neural networks, called diffusion models, made a splash. Their ability to turn text descriptions into artistic images attracted casual users to create amazing images that went viral on social media.

The first paper to describe a diffusion model arrived with little fanfare in 2015. But like transformers, the new technique soon caught fire.

In a tweet, Midjourney CEO David Holz revealed that his diffusion-based, text-to-image service has more than 4.4 million users. Serving them requires more than 10,000 NVIDIA GPUs mainly for AI inference, he said in an interview (subscription required).

Toward Models That Understand the Physical World

The next frontier of artificial intelligence is physical AI, which enables autonomous machines like robots and self-driving cars to interact with the real world.

AI performance for autonomous vehicles or robots requires extensive training and testing. To ensure physical AI systems are safe, developers need to train and test their systems on massive amounts of data, which can be costly and time-consuming.

World foundation models, which can simulate real-world environments and predict accurate outcomes based on text, image, or video input, offer a promising solution.

Physical AI development teams are using NVIDIA Cosmos world foundation models, a suite of pre-trained autoregressive and diffusion models trained on 20 million hours of driving and robotics data, with the NVIDIA Omniverse platform to generate massive amounts of controllable, physics-based synthetic data for physical AI. Awarded the Best AI and Best Overall awards at CES 2025, Cosmos world foundation models are open models that can be customized for downstream use cases or tuned with use case-specific data to improve precision on a specific task.

Dozens of Models in Use

Hundreds of foundation models are now available. One paper catalogs and classifies more than 50 major transformer models alone (see chart below).

The Stanford group benchmarked 30 foundation models, noting the field is moving so fast they did not review some new and prominent ones.

Startup NLP Cloud, a member of the NVIDIA Inception program that nurtures cutting-edge startups, says it uses about 25 large language models in a commercial offering that serves airlines, pharmacies and other users. Experts expect that a growing share of the models will be made open source on sites like Hugging Face’s model hub.

Foundation models keep getting larger and more complex, too.

That’s why, rather than building new models from scratch, many businesses are already customizing pretrained foundation models to turbocharge their journeys into AI, using online services like NVIDIA AI Foundation Models.

The accuracy and reliability of generative AI is increasing thanks to techniques like retrieval-augmented generation, aka RAG, which lets foundation models tap into external resources like a corporate knowledge base.
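The retrieval step in RAG can be illustrated with a toy Python sketch. Everything in it is an assumption for illustration: the three-sentence `KNOWLEDGE_BASE`, the word-count cosine similarity standing in for a real embedding model, and the prompt template. A production system would send the resulting prompt to a foundation model; this sketch stops at building it.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k documents most similar to the question."""
    query = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, passages: list[str]) -> str:
    """Place the retrieved passages ahead of the question (hypothetical template)."""
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Hypothetical stand-in for a corporate knowledge base.
KNOWLEDGE_BASE = [
    "Expense reports are due on the fifth business day of each month.",
    "The VPN client must be updated before connecting from a new laptop.",
    "Vacation requests need manager approval two weeks in advance.",
]

question = "When are expense reports due?"
passages = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, passages)
print(prompt)
```

The design point is that the model answers from text fetched at query time, so the knowledge base can be updated without retraining the model.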

AI Foundations for Business

Another new framework, the NVIDIA NeMo framework, aims to let any business create its own billion- or trillion-parameter transformers to power custom chatbots, personal assistants and other AI applications.

It created the 530-billion parameter Megatron-Turing Natural Language Generation model (MT-NLG) that powers TJ, the Toy Jensen avatar that gave part of the keynote at NVIDIA GTC last year.

Foundation models, connected to 3D platforms like NVIDIA Omniverse, will be key to simplifying development of the metaverse, the 3D evolution of the internet. These models will power applications and assets for entertainment and industrial users.

Factories and warehouses are already applying foundation models inside digital twins, realistic simulations that help find more efficient ways to work.

Foundation models can ease the job of training autonomous vehicles and robots that assist humans on factory floors and logistics centers. They also help train autonomous vehicles by creating realistic environments like the one below.

New uses for foundation models are emerging daily, as are challenges in applying them.

Several papers on foundation and generative AI models describe risks such as:

- amplifying bias implicit in the massive datasets used to train models,

- introducing inaccurate or misleading information in images or videos, and

- violating intellectual property rights of existing works.

“Given that future AI systems will likely rely heavily on foundation models, it is imperative that we, as a community, come together to develop more rigorous principles for foundation models and guidance for their responsible development and deployment,” said the Stanford paper on foundation models.

Current ideas for safeguards include filtering prompts and their outputs, recalibrating models on the fly and scrubbing massive datasets.
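The first of those safeguards, filtering prompts and outputs, can be sketched in a few lines of Python. This is a deliberately simple illustration, not how any particular product works: the blocked patterns, the refusal message, the `guarded_generate` wrapper and the `echo_model` stand-in are all hypothetical, and real systems typically rely on trained classifiers instead of regular expressions.

```python
import re
from typing import Callable

# Hypothetical patterns: a U.S. Social Security number format and the word "password".
BLOCKED_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    re.compile(r"password", re.IGNORECASE),
]

REFUSAL = "Sorry, I can't help with that request."


def is_allowed(text: str) -> bool:
    """Return True when no blocked pattern appears in the text."""
    return not any(pattern.search(text) for pattern in BLOCKED_PATTERNS)


def guarded_generate(prompt: str, generate: Callable[[str], str]) -> str:
    """Filter the prompt, call the model, then filter the model's output."""
    if not is_allowed(prompt):
        return REFUSAL
    output = generate(prompt)
    if not is_allowed(output):
        return REFUSAL
    return output


def echo_model(prompt: str) -> str:
    """Stand-in for a foundation model: it simply repeats the prompt."""
    return f"You asked: {prompt}"


safe_reply = guarded_generate("What is a foundation model?", echo_model)
blocked_reply = guarded_generate("Tell me the admin password", echo_model)
print(safe_reply)
print(blocked_reply)
```

Checking both sides of the model call matters because a harmless-looking prompt can still produce an output that should not be shown.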

“These are issues we’re working on as a research community,” said Bryan Catanzaro, vice president of applied deep learning research at NVIDIA. “For these models to be really widely deployed, we have to invest a lot in safety.”

It’s one more field AI researchers and developers are plowing as they create the future.