Introduction

Artificial intelligence has been transformed by Stable Diffusion, which makes it possible to generate high-quality images from noise or text descriptions. Several key components come together in this powerful generative model to produce impressive visual results. This article covers the five essential components of diffusion models: the forward and reverse processes, the noise schedule, positional encoding, and the neural network architecture.

We will implement the components of a diffusion model as we go through the article, using the Fashion MNIST dataset.

Overview

- Discover how Stable Diffusion transforms AI image generation, producing high-quality visuals from pure noise or text descriptions.

- Learn how images are degraded to noise, which trains AI models to master visual reconstruction.

- Explore how AI reconstructs high-quality images from noise, reversing the degradation process step by step.

- Understand the role of unique vector representations in guiding AI through different noise levels during image generation.

- Delve into the symmetric encoder-decoder structure of UNet, which excels at producing fine details and global structures.

- Examine the critical noise schedule in diffusion models, which balances generation quality and computational efficiency for high-fidelity outputs.

Forward Diffusion Process

The forward process is the first stage of Stable Diffusion, in which an image is gradually transformed into noise. This process is essential for training the model to understand how images degrade over time.

Important aspects of the forward process include:

- Gradual noising: Gaussian noise is added to the image in small increments over many timesteps.

- Markov property: Each step of the forward process depends only on the step before it, so the steps form a Markov chain.

- Gaussian convergence: After a sufficient number of steps, the data distribution converges to a Gaussian distribution.
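The three properties above can be checked with a few lines of arithmetic. The sketch below is not part of the original notebook: it assumes the standard DDPM one-step update, x_t = sqrt(1 - beta_t) * x_{t-1} + sqrt(beta_t) * eps_t, with a linear range of beta values from 1e-4 to 0.02, and the helper names `linear_betas` and `run_forward_chain` are hypothetical. It tracks only two numbers, the weight left on the original image and the variance of the accumulated noise.

```python
import math

def linear_betas(n_steps, min_beta=1e-4, max_beta=0.02):
    """Evenly spaced noise variances beta_1..beta_T (assumed linear schedule)."""
    step = (max_beta - min_beta) / (n_steps - 1)
    return [min_beta + i * step for i in range(n_steps)]

def run_forward_chain(betas):
    """Track how repeated one-step Gaussian noising transforms x_0.

    After the loop, x_T is distributed as signal * x_0 + N(0, noise_var).
    Each update uses only the previous state (Markov property).
    """
    signal, noise_var = 1.0, 0.0
    for beta in betas:
        signal *= math.sqrt(1.0 - beta)               # the image is scaled down a little
        noise_var = (1.0 - beta) * noise_var + beta   # old noise shrinks, fresh noise is added
    return signal, noise_var

signal, noise_var = run_forward_chain(linear_betas(1000))
print(f"weight on the original image after 1000 steps: {signal:.4f}")
print(f"noise variance after 1000 steps: {noise_var:.4f}")
```

Under these assumptions the image weight shrinks toward zero while the noise variance approaches one, which is the Gaussian convergence described in the last bullet.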


Implementation of the Forward Diffusion Process

The code in this notebook is adapted from Brian Pulfer's DDPM implementation in his GitHub repo.

Importing necessary libraries

# Import of libraries
import random
import imageio
import numpy as np
from argparse import ArgumentParser

from tqdm.auto import tqdm
import matplotlib.pyplot as plt

import einops
import torch
import torch.nn as nn
from torch.optim import Adam
from torch.utils.data import DataLoader

from torchvision.transforms import Compose, ToTensor, Lambda
from torchvision.datasets.mnist import MNIST, FashionMNIST

# Setting reproducibility
SEED = 0
random.seed(SEED)
np.random.seed(SEED)
torch.manual_seed(SEED)

# Definitions
STORE_PATH_MNIST = "ddpm_model_mnist.pt"
STORE_PATH_FASHION = "ddpm_model_fashion.pt"

We set SEED so that the results are reproducible.

no_train = False
fashion = True
batch_size = 128
n_epochs = 20
lr = 0.001
store_path = "ddpm_fashion.pt" if fashion else "ddpm_mnist.pt"

Here we set some parameters. no_train is set to False, which means we will train the model rather than use a pretrained one. batch_size, n_epochs, and lr are the usual deep-learning hyperparameters. We will be using the Fashion MNIST dataset here.

Loading Data

# Loading the data (converting each image into a tensor and normalizing between [-1, 1])
transform = Compose([
    ToTensor(),
    Lambda(lambda x: (x - 0.5) * 2)]
)
ds_fn = FashionMNIST if fashion else MNIST
dataset = ds_fn("./datasets", download=True, train=True, transform=transform)
loader = DataLoader(dataset, batch_size, shuffle=True)

We will use the PyTorch DataLoader to load our Fashion MNIST dataset.

Forward Diffusion Process function

def forward(self, x0, t, eta=None):
    n, c, h, w = x0.shape
    a_bar = self.alpha_bars[t]

    if eta is None:
        eta = torch.randn(n, c, h, w).to(self.device)

    noisy = a_bar.sqrt().reshape(n, 1, 1, 1) * x0 + (1 - a_bar).sqrt().reshape(n, 1, 1, 1) * eta
    return noisy

The function above implements the forward diffusion equation directly for the desired step. Note: here we do not add noise one timestep at a time; instead, we compute the noisy image at timestep t directly.
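For reference, the direct jump to step t follows from composing the one-step Gaussian updates. The block below is a sketch of the standard DDPM relations, written to match the names in the code (`alphas`, `alpha_bars`, `eta`); the symbols $\beta_t$, $\alpha_t$, and $\bar\alpha_t$ are the usual notation and are an assumption about how the article would typeset them.

```latex
\begin{aligned}
q(x_t \mid x_{t-1}) &= \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right),
\qquad \alpha_t = 1-\beta_t, \quad \bar\alpha_t = \prod_{s=1}^{t} \alpha_s, \\
q(x_t \mid x_0) &= \mathcal{N}\!\left(x_t;\ \sqrt{\bar\alpha_t}\,x_0,\ (1-\bar\alpha_t)\, I\right), \\
x_t &= \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\eta, \qquad \eta \sim \mathcal{N}(0, I).
\end{aligned}
```

The last line corresponds term by term to the `noisy = ...` expression in the function: `a_bar.sqrt()` is $\sqrt{\bar\alpha_t}$ and `(1 - a_bar).sqrt()` is $\sqrt{1-\bar\alpha_t}$.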

def show_forward(ddpm, loader, gadget):

# Displaying the ahead course of

for batch in loader:

imgs = batch[0]

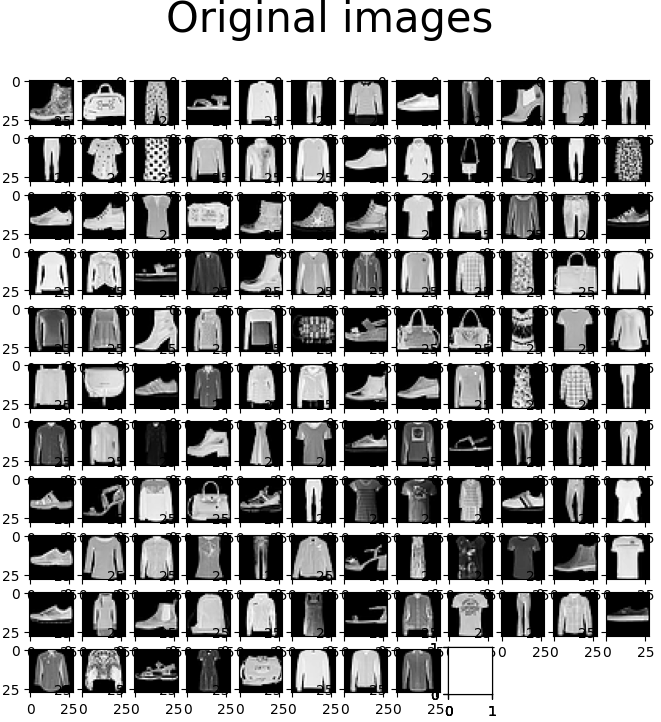

show_images(imgs, "Authentic pictures")

for % in [0.25, 0.5, 0.75, 1]:

show_images(

ddpm(imgs.to(gadget),

[int(percent * ddpm.n_steps) - 1 for _ in range(len(imgs))]),

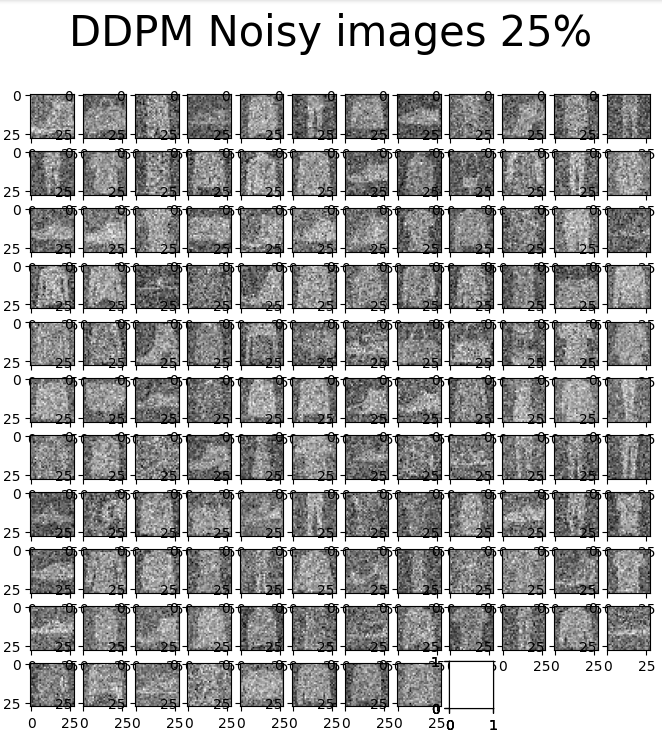

f"DDPM Noisy pictures {int(% * 100)}%"

)

breakThe above code will assist us visualize the picture noise at completely different ranges: 25%, 50%, 75%, and 100%.

Reverse Diffusion Process

The core of Stable Diffusion is the reverse process, which teaches the model to turn noisy images back into high-quality ones. This process, used for both training and image generation, is the opposite of the forward process.

Important aspects of the reverse process:

- Iterative denoising: The original image is progressively revealed as the model gradually removes the noise.

- Noise prediction: The model predicts the noise present in the current image at each step.

- Controlled generation: The reverse process allows interventions at specific timesteps, which gives more control over image generation.


Implementation of Reverse Diffusion Process

def backward(self, x, t):
    # Run each image through the network for each timestep t in the vector t.
    # The network returns its estimation of the noise that was added.
    return self.network(x, t)

The reverse diffusion function looks very simple. That is because, in reverse diffusion, the network (which we will look at shortly) predicts the noise in the image, and the loss is computed against the noise that was actually added. Below is the code for the entire DDPM (Denoising Diffusion Probabilistic Model).

The code below defines the MyDDPM PyTorch module, a Denoising Diffusion Probabilistic Model (DDPM). The forward method implements the forward diffusion process, adding noise to an input image according to a given timestep. The backward method, which is essential for the reverse diffusion process, uses a neural network to estimate the noise in a noisy image at a specific timestep. The class also initializes the diffusion parameters, such as the beta and alpha schedules.

# DDPM class
class MyDDPM(nn.Module):
    def __init__(self, network, n_steps=200, min_beta=10 ** -4, max_beta=0.02, device=None, image_chw=(1, 28, 28)):
        super(MyDDPM, self).__init__()
        self.n_steps = n_steps
        self.device = device
        self.image_chw = image_chw
        self.network = network.to(device)
        self.betas = torch.linspace(min_beta, max_beta, n_steps).to(
            device)  # Number of steps is typically in the order of thousands
        self.alphas = 1 - self.betas
        self.alpha_bars = torch.tensor([torch.prod(self.alphas[:i + 1]) for i in range(len(self.alphas))]).to(device)

    def forward(self, x0, t, eta=None):
        # Make input image more noisy (we can directly skip to the desired step)
        n, c, h, w = x0.shape
        a_bar = self.alpha_bars[t]

        if eta is None:
            eta = torch.randn(n, c, h, w).to(self.device)

        noisy = a_bar.sqrt().reshape(n, 1, 1, 1) * x0 + (1 - a_bar).sqrt().reshape(n, 1, 1, 1) * eta
        return noisy

    def backward(self, x, t):
        # Run each image through the network for each timestep t in the vector t.
        # The network returns its estimation of the noise that was added.
        return self.network(x, t)

The parameters are n_steps, the number of diffusion timesteps, and min_beta and max_beta, which define the noise schedule that we will discuss shortly.
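To make the roles of n_steps, min_beta, and max_beta concrete, here is a small NumPy sketch of the same schedule arithmetic that the class constructor performs. It is an illustration, not the notebook's code: NumPy is used instead of PyTorch to keep it dependency-light, `np.cumprod` stands in for the list comprehension over `torch.prod`, and the helper name `linear_schedule` is hypothetical.

```python
import numpy as np

def linear_schedule(n_steps, min_beta=1e-4, max_beta=0.02):
    """Return (betas, alphas, alpha_bars) for a linear beta schedule."""
    betas = np.linspace(min_beta, max_beta, n_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)  # alpha_bars[t] = alphas[0] * ... * alphas[t]
    return betas, alphas, alpha_bars

for n_steps in (200, 1000):
    _, _, alpha_bars = linear_schedule(n_steps)
    print(f"n_steps={n_steps}: final alpha_bar = {alpha_bars[-1]:.6f}")
```

Because alpha_bar at a given step is the fraction of the original image's variance that survives, the final value shows how close the last step is to pure noise. With the same beta range, fewer steps leave the final alpha_bar well above zero, which is one reason the number of steps is usually in the thousands.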

def generate_new_images(ddpm, n_samples=16, device=None, frames_per_gif=100, gif_name="sampling.gif", c=1, h=28, w=28):
    """Given a DDPM model, a number of samples to be generated and a device, returns some newly generated samples"""
    frame_idxs = np.linspace(0, ddpm.n_steps, frames_per_gif).astype(np.uint)
    frames = []

    with torch.no_grad():
        if device is None:
            device = ddpm.device

        # Starting from random noise
        x = torch.randn(n_samples, c, h, w).to(device)

        for idx, t in enumerate(list(range(ddpm.n_steps))[::-1]):
            # Estimating noise to be removed
            time_tensor = (torch.ones(n_samples, 1) * t).to(device).long()
            eta_theta = ddpm.backward(x, time_tensor)

            alpha_t = ddpm.alphas[t]
            alpha_t_bar = ddpm.alpha_bars[t]

            # Partially denoising the image
            x = (1 / alpha_t.sqrt()) * (x - (1 - alpha_t) / (1 - alpha_t_bar).sqrt() * eta_theta)

            if t > 0:
                z = torch.randn(n_samples, c, h, w).to(device)

                # Option 1: sigma_t squared = beta_t
                beta_t = ddpm.betas[t]
                sigma_t = beta_t.sqrt()

                # Option 2: sigma_t squared = beta_tilda_t
                # prev_alpha_t_bar = ddpm.alpha_bars[t-1] if t > 0 else ddpm.alphas[0]
                # beta_tilda_t = ((1 - prev_alpha_t_bar)/(1 - alpha_t_bar)) * beta_t
                # sigma_t = beta_tilda_t.sqrt()

                # Adding some more noise like in Langevin Dynamics fashion
                x = x + sigma_t * z

            # Adding frames to the GIF
            if idx in frame_idxs or t == 0:
                # Putting digits in range [0, 255]
                normalized = x.clone()
                for i in range(len(normalized)):
                    normalized[i] -= torch.min(normalized[i])
                    normalized[i] *= 255 / torch.max(normalized[i])

                # Reshaping batch (n, c, h, w) to be a (as much as it gets) square frame
                frame = einops.rearrange(normalized, "(b1 b2) c h w -> (b1 h) (b2 w) c", b1=int(n_samples ** 0.5))
                frame = frame.cpu().numpy().astype(np.uint8)

                # Rendering frame
                frames.append(frame)

    # Storing the gif
    with imageio.get_writer(gif_name, mode="I") as writer:
        for idx, frame in enumerate(frames):
            # Convert grayscale frame to RGB
            rgb_frame = np.repeat(frame, 3, axis=-1)
            writer.append_data(rgb_frame)
            if idx == len(frames) - 1:
                for _ in range(frames_per_gif // 3):
                    writer.append_data(rgb_frame)
    return x

The code above is our function for generating new images. By default it creates 16 new images. The reverse process runs over every timestep, but only about frames_per_gif (100 by default) evenly spaced steps are captured as frames. These frames are then turned into a GIF that visualizes the model generating images.
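The "partially denoising" line is the heart of the sampler, so it can help to test it in isolation. The sketch below is an illustration in NumPy rather than the notebook's PyTorch code; the helper name `reverse_step_mean` and the random stand-in image are assumptions. It noises an image to the first timestep with the closed-form forward formula, then applies the same update as the sampling loop using the true noise in place of the network's prediction.

```python
import numpy as np

def reverse_step_mean(x_t, eps_pred, alpha_t, alpha_bar_t):
    """Mean of one reverse step: subtract the scaled noise estimate, then rescale."""
    return (x_t - (1.0 - alpha_t) / np.sqrt(1.0 - alpha_bar_t) * eps_pred) / np.sqrt(alpha_t)

rng = np.random.default_rng(0)
betas = np.linspace(1e-4, 0.02, 1000)
alphas = 1.0 - betas
alpha_bars = np.cumprod(alphas)

# A stand-in "image" in [-1, 1] and the noise added by the forward process at t = 0.
x0 = rng.uniform(-1.0, 1.0, size=(1, 28, 28))
eps = rng.standard_normal(x0.shape)
t = 0
x_t = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps

# With a perfect noise estimate, the last reverse step (no extra noise is added
# when t == 0) returns the clean image up to floating-point error.
x_prev = reverse_step_mean(x_t, eps, alphas[t], alpha_bars[t])
print("max reconstruction error:", np.max(np.abs(x_prev - x0)))
```

In practice the network's estimate is imperfect and, for t > 0, fresh noise scaled by sigma_t is added after each step, so the sampler approaches a clean image gradually rather than in one jump.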

The code above can be run once the network is defined. Now, let's look at the neural network.


Neural Network Architecture

Before we look at the architecture of the neural network that we will use to generate images, we should know that the diffusion model's parameters are shared across timesteps. The same network must remove noise from images with widely different noise levels. To handle this, we use positional encoding, which encodes the timestep with sinusoidal functions.

Implementation of Positional Encoding

Key aspects of positional encoding:

- Distinct representation: Each timestep is given a unique vector representation.

- Noise level awareness: Helps the model understand the current noise level, allowing for appropriate denoising decisions.

- Process guidance: Guides the model through the different stages of the diffusion process.
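The first two properties can be checked numerically. The sketch below uses the textbook Transformer-style sinusoidal formula in NumPy; it is not a copy of the article's `sinusoidal_embedding` function, which spaces its frequencies slightly differently, and the helper name `sinusoidal_table` is hypothetical.

```python
import numpy as np

def sinusoidal_table(n_steps, dim):
    """Textbook sinusoidal encoding: sin on even columns, cos on odd columns."""
    positions = np.arange(n_steps)[:, None]                # (n_steps, 1)
    freqs = 1.0 / 10_000 ** (np.arange(0, dim, 2) / dim)    # (dim / 2,)
    table = np.zeros((n_steps, dim))
    table[:, 0::2] = np.sin(positions * freqs)
    table[:, 1::2] = np.cos(positions * freqs)
    return table

table = sinusoidal_table(n_steps=1000, dim=100)

# Distinct representation: no two timesteps share a vector.
n_unique = len(np.unique(table.round(6), axis=0))
print("unique rows:", n_unique)

# Noise-level awareness: neighbouring timesteps get more similar vectors than distant ones.
near = table[10] @ table[11]
far = table[10] @ table[500]
print(f"similarity t=10 vs t=11: {near:.2f}, t=10 vs t=500: {far:.2f}")
```

Because every row has the same norm, the dot product acts as a similarity score: timesteps with similar noise levels receive similar vectors, which gives the network a smooth signal to condition on.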

def sinusoidal_embedding(n, d):
    # Returns the standard positional embedding
    embedding = torch.zeros(n, d)
    wk = torch.tensor([1 / 10_000 ** (2 * j / d) for j in range(d)])
    wk = wk.reshape((1, d))
    t = torch.arange(n).reshape((n, 1))
    embedding[:,::2] = torch.sin(t * wk[:,::2])
    embedding[:,1::2] = torch.cos(t * wk[:,::2])

    return embedding

Now that we have seen how positional encoding distinguishes between timesteps, we will look at our neural network architecture. UNet is the most common architecture used in diffusion models because it works at the image's pixel level. It features a symmetric encoder-decoder structure with skip connections between corresponding layers. In Stable Diffusion, the U-Net predicts the noise at each denoising step. Its ability to capture and combine features at different scales makes it particularly effective for image generation tasks, allowing the model to maintain both fine details and global structure in the generated images.
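The symmetry of the encoder and decoder can be verified with the standard output-size formulas for convolutions and transposed convolutions. The sketch below plugs in the (kernel, stride, padding) settings that the `MyUNet` class in this article passes to `nn.Conv2d` and `nn.ConvTranspose2d`; the helper names `conv_out` and `conv_transpose_out` are hypothetical, and dilation 1 with no output padding is assumed.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a 2D convolution (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1

def conv_transpose_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a 2D transposed convolution (dilation 1, no output padding)."""
    return (size - 1) * stride - 2 * padding + kernel

# Encoder: down1, down2, then the two convolutions inside down3.
size = 28
encoder_sizes = [size]
for kernel, stride, padding in [(4, 2, 1), (4, 2, 1), (2, 1, 0), (4, 2, 1)]:
    size = conv_out(size, kernel, stride, padding)
    encoder_sizes.append(size)

# Decoder: the two transposed convolutions inside up1, then up2 and up3.
decoder_sizes = [size]
for kernel, stride, padding in [(4, 2, 1), (2, 1, 0), (4, 2, 1), (4, 2, 1)]:
    size = conv_transpose_out(size, kernel, stride, padding)
    decoder_sizes.append(size)

print("encoder:", encoder_sizes)  # [28, 14, 7, 6, 3]
print("decoder:", decoder_sizes)  # [3, 6, 7, 14, 28]
```

The decoder retraces the encoder's resolutions in reverse, which is what allows each upsampled feature map to be concatenated with the encoder output of the same size through a skip connection.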

Let's declare the UNet for our diffusion process.

class MyUNet(nn.Module):
    '''
    Vanilla UNet implementation with timestep positional encoding added in every block, in addition to the usual input from the previous block
    '''
    def __init__(self, n_steps=1000, time_emb_dim=100):
        super(MyUNet, self).__init__()

        # Sinusoidal embedding
        self.time_embed = nn.Embedding(n_steps, time_emb_dim)
        self.time_embed.weight.data = sinusoidal_embedding(n_steps, time_emb_dim)
        self.time_embed.requires_grad_(False)

        # First half
        self.te1 = self._make_te(time_emb_dim, 1)
        self.b1 = nn.Sequential(
            MyBlock((1, 28, 28), 1, 10),
            MyBlock((10, 28, 28), 10, 10),
            MyBlock((10, 28, 28), 10, 10)
        )
        self.down1 = nn.Conv2d(10, 10, 4, 2, 1)

        self.te2 = self._make_te(time_emb_dim, 10)
        self.b2 = nn.Sequential(
            MyBlock((10, 14, 14), 10, 20),
            MyBlock((20, 14, 14), 20, 20),
            MyBlock((20, 14, 14), 20, 20)
        )
        self.down2 = nn.Conv2d(20, 20, 4, 2, 1)

        self.te3 = self._make_te(time_emb_dim, 20)
        self.b3 = nn.Sequential(
            MyBlock((20, 7, 7), 20, 40),
            MyBlock((40, 7, 7), 40, 40),
            MyBlock((40, 7, 7), 40, 40)
        )
        self.down3 = nn.Sequential(
            nn.Conv2d(40, 40, 2, 1),
            nn.SiLU(),
            nn.Conv2d(40, 40, 4, 2, 1)
        )

        # Bottleneck
        self.te_mid = self._make_te(time_emb_dim, 40)
        self.b_mid = nn.Sequential(
            MyBlock((40, 3, 3), 40, 20),
            MyBlock((20, 3, 3), 20, 20),
            MyBlock((20, 3, 3), 20, 40)
        )

        # Second half
        self.up1 = nn.Sequential(
            nn.ConvTranspose2d(40, 40, 4, 2, 1),
            nn.SiLU(),
            nn.ConvTranspose2d(40, 40, 2, 1)
        )

        self.te4 = self._make_te(time_emb_dim, 80)
        self.b4 = nn.Sequential(
            MyBlock((80, 7, 7), 80, 40),
            MyBlock((40, 7, 7), 40, 20),
            MyBlock((20, 7, 7), 20, 20)
        )

        self.up2 = nn.ConvTranspose2d(20, 20, 4, 2, 1)
        self.te5 = self._make_te(time_emb_dim, 40)
        self.b5 = nn.Sequential(
            MyBlock((40, 14, 14), 40, 20),
            MyBlock((20, 14, 14), 20, 10),
            MyBlock((10, 14, 14), 10, 10)
        )

        self.up3 = nn.ConvTranspose2d(10, 10, 4, 2, 1)
        self.te_out = self._make_te(time_emb_dim, 20)
        self.b_out = nn.Sequential(
            MyBlock((20, 28, 28), 20, 10),
            MyBlock((10, 28, 28), 10, 10),
            MyBlock((10, 28, 28), 10, 10, normalize=False)
        )

        self.conv_out = nn.Conv2d(10, 1, 3, 1, 1)

    def forward(self, x, t):
        # x is (N, 1, 28, 28); t is an (N, 1) tensor of timestep indices.
        # The time embedding is added to the input of every block.
        t = self.time_embed(t)
        n = len(x)
        out1 = self.b1(x + self.te1(t).reshape(n, -1, 1, 1))  # (N, 10, 28, 28)
        out2 = self.b2(self.down1(out1) + self.te2(t).reshape(n, -1, 1, 1))  # (N, 20, 14, 14)
        out3 = self.b3(self.down2(out2) + self.te3(t).reshape(n, -1, 1, 1))  # (N, 40, 7, 7)

        out_mid = self.b_mid(self.down3(out3) + self.te_mid(t).reshape(n, -1, 1, 1))  # (N, 40, 3, 3)

        out4 = torch.cat((out3, self.up1(out_mid)), dim=1)  # (N, 80, 7, 7)
        out4 = self.b4(out4 + self.te4(t).reshape(n, -1, 1, 1))  # (N, 20, 7, 7)

        out5 = torch.cat((out2, self.up2(out4)), dim=1)  # (N, 40, 14, 14)
        out5 = self.b5(out5 + self.te5(t).reshape(n, -1, 1, 1))  # (N, 10, 14, 14)

        out = torch.cat((out1, self.up3(out5)), dim=1)  # (N, 20, 28, 28)
        out = self.b_out(out + self.te_out(t).reshape(n, -1, 1, 1))  # (N, 10, 28, 28)

        out = self.conv_out(out)  # (N, 1, 28, 28)

        return out

    def _make_te(self, dim_in, dim_out):
        return nn.Sequential(
            nn.Linear(dim_in, dim_out),
            nn.SiLU(),
            nn.Linear(dim_out, dim_out)
        )

Instantiating the model

# device is not defined earlier in the article; here we assume a GPU is used when available
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Defining model
n_steps, min_beta, max_beta = 1000, 10 ** -4, 0.02  # Originally used by the authors
ddpm = MyDDPM(MyUNet(n_steps), n_steps=n_steps, min_beta=min_beta, max_beta=max_beta, device=device)

# Number of parameters in the model to be learned.
sum([p.numel() for p in ddpm.parameters()])

Visualization of Forward diffusion

show_forward(ddpm, loader, device)

The images above are original Fashion MNIST images without any noise. We will take these images and gradually add noise to them.

In the images above, we can see noise, but the items are still easy to recognize. We add noise according to our noise schedule; these images are 25% of the way through the linear noise schedule.

The noise is added gradually until the image is 100% noise. The image above is 50% of the way through the schedule, and at that point we can no longer recognize the items. This is considered a drawback of the linear noise schedule, and more recent diffusion models use more advanced schedules to add noise.
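A short calculation shows why the items are already unrecognizable at the halfway point. The sketch below recomputes the linear schedule in NumPy (an illustration, not the notebook's code) and prints the weights that the forward formula places on the clean image and on the noise at the four percentages used by show_forward. The values of n_steps, min_beta, and max_beta match the ones used when the model is instantiated in this article.

```python
import numpy as np

n_steps = 1000
betas = np.linspace(1e-4, 0.02, n_steps)
alpha_bars = np.cumprod(1.0 - betas)

weights = {}
for percent in (0.25, 0.5, 0.75, 1.0):
    t = int(percent * n_steps) - 1          # same indexing as show_forward
    signal = np.sqrt(alpha_bars[t])          # weight on the clean image
    noise = np.sqrt(1.0 - alpha_bars[t])     # weight on the Gaussian noise
    weights[percent] = (signal, noise)
    print(f"{int(percent * 100):>3}%: image weight {signal:.3f}, noise weight {noise:.3f}")
```

Under this schedule the image weight drops below one third by the 50% mark while the noise weight is already close to one, so most of the remaining timesteps operate on images that are nearly pure noise.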

Generating Images Before Training

generated = generate_new_images(ddpm, gif_name="before_training.gif")
show_images(generated, "Images generated before training")

We can see that the model knows nothing about the dataset and can generate only noise. Before we start training our model, we will discuss the noise schedule.

Noise Schedule

The noise schedule is a critical component of diffusion models. It determines how noise is added during the forward process and removed during the reverse process. It also defines the rate at which information is destroyed and reconstructed, which significantly affects the model's performance and the quality of generated samples.

A well-designed noise schedule balances the trade-off between generation quality and computational efficiency. Adding noise too quickly can lead to information loss and poor reconstruction, while a schedule that is too slow results in unnecessarily long computation times. More advanced techniques, such as cosine schedules, can improve this trade-off, allowing faster sampling without sacrificing output quality. The noise schedule also influences the model's ability to capture different levels of detail, from coarse structures to fine textures, making it a key factor in achieving high-fidelity generations.
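To compare schedules concretely, the sketch below computes alpha_bar, the fraction of the original image's variance that survives at a given step, for a linear schedule and for a cosine schedule. The cosine formula follows the one proposed in the Improved DDPM work, with its small offset s = 0.008; the function names, and the choice of 1000 steps with betas from 1e-4 to 0.02 for the linear case, are assumptions made for illustration.

```python
import numpy as np

def linear_alpha_bars(n_steps, min_beta=1e-4, max_beta=0.02):
    """Surviving signal fraction under a linear beta schedule."""
    return np.cumprod(1.0 - np.linspace(min_beta, max_beta, n_steps))

def cosine_alpha_bars(n_steps, s=0.008):
    """Surviving signal fraction under a cosine schedule (s keeps the first betas small)."""
    t = np.arange(1, n_steps + 1) / n_steps
    f = np.cos((t + s) / (1.0 + s) * np.pi / 2.0) ** 2
    f0 = np.cos(s / (1.0 + s) * np.pi / 2.0) ** 2
    return f / f0

n_steps = 1000
linear = linear_alpha_bars(n_steps)
cosine = cosine_alpha_bars(n_steps)
for percent in (0.25, 0.5, 0.75):
    t = int(percent * n_steps) - 1
    print(f"{int(percent * 100)}%: linear {linear[t]:.3f}, cosine {cosine[t]:.3f}")
```

The cosine schedule keeps more of the image through the middle of the process, so fewer timesteps are spent on inputs that are already almost pure noise.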

In our DDPM model, we will use a linear schedule, in which the noise variance (beta) increases linearly across timesteps, although more recent diffusion models use other schedules. Now that we understand the noise schedule, let's train our model.

Model Training

In model training, we take our neural network and train it on the noisy images produced by forward diffusion. The function below takes our model, the data loader, the number of epochs, and the optimizer. eta is the noise actually added to the image, and eta_theta is the noise predicted by the model. By minimizing the MSE loss between eta and eta_theta, the model learns to predict the noise present in an image.
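Written as a formula, the objective described above is the simplified DDPM noise-prediction loss. This is a sketch in standard notation, which is an assumption about typesetting rather than something the article states: $\eta$ is the sampled noise (`eta`), $\eta_\theta$ is the network's prediction (`eta_theta`), $\bar\alpha_t$ is the cumulative product stored in `alpha_bars`, and $t$ is drawn uniformly from the available timesteps.

```latex
\mathcal{L}(\theta) =
\mathbb{E}_{x_0,\ t,\ \eta \sim \mathcal{N}(0, I)}
\left[\,
\left\lVert \eta - \eta_\theta\!\left(\sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\eta,\ t\right) \right\rVert^2
\,\right]
```

The argument of $\eta_\theta$ is the noisy image produced by the forward process, so each training step needs only one randomly chosen timestep per image rather than a full pass through the chain.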

def training_loop(ddpm, loader, n_epochs, optim, device, display=False, store_path="ddpm_model.pt"):
    mse = nn.MSELoss()
    best_loss = float("inf")
    n_steps = ddpm.n_steps

    for epoch in tqdm(range(n_epochs), desc="Training progress", colour="#00ff00"):
        epoch_loss = 0.0
        for step, batch in enumerate(tqdm(loader, leave=False, desc=f"Epoch {epoch + 1}/{n_epochs}", colour="#005500")):
            # Loading data
            x0 = batch[0].to(device)
            n = len(x0)

            # Picking some noise for each of the images in the batch, a timestep and the respective alpha_bars
            eta = torch.randn_like(x0).to(device)
            t = torch.randint(0, n_steps, (n,)).to(device)

            # Computing the noisy image based on x0 and the time-step (forward process)
            noisy_imgs = ddpm(x0, t, eta)

            # Getting model estimation of noise based on the images and the time-step
            eta_theta = ddpm.backward(noisy_imgs, t.reshape(n, -1))

            # Optimizing the MSE between the noise plugged and the predicted noise
            loss = mse(eta_theta, eta)
            optim.zero_grad()
            loss.backward()
            optim.step()

            epoch_loss += loss.item() * len(x0) / len(loader.dataset)

        # Display images generated at this epoch
        if display:
            show_images(generate_new_images(ddpm, device=device), f"Images generated at epoch {epoch + 1}")

        log_string = f"Loss at epoch {epoch + 1}: {epoch_loss:.3f}"

        # Storing the model
        if best_loss > epoch_loss:
            best_loss = epoch_loss
            torch.save(ddpm.state_dict(), store_path)
            log_string += " --> Best model ever (stored)"

        print(log_string)

# Training
# Estimate - on T4 it takes around 9 minutes to do 20 epochs
store_path = "ddpm_fashion.pt" if fashion else "ddpm_mnist.pt"

if not no_train:
    training_loop(ddpm, loader, n_epochs, optim=Adam(ddpm.parameters(), lr), device=device, store_path=store_path)

Anyone with basic PyTorch and deep learning knowledge will recognize this as an ordinary training loop, and it is. We have the noise predicted by our model and the true noise from forward diffusion. Using these two, we compute the MSE loss and update the network's weights so that it learns to predict, and therefore remove, noise.

Model Testing

# Loading the trained model
best_model = MyDDPM(MyUNet(), n_steps=n_steps, device=device)
best_model.load_state_dict(torch.load(store_path, map_location=device))
best_model.eval()
print("Model loaded")

print("Generating new images")
generated = generate_new_images(
    best_model,
    n_samples=100,
    device=device,
    gif_name="fashion.gif" if fashion else "mnist.gif"
)
show_images(generated, "Final result")

We will generate 100 new images, capture the reverse process, and turn it into a GIF.

from IPython.display import Image
Image(open('fashion.gif' if fashion else 'mnist.gif','rb').read())

The GIF above shows our network generating 100 images. It starts from pure noise and runs the reverse diffusion process, so in the end we get 100 newly generated images based on what the model learned from our Fashion MNIST dataset.

Conclusion

Stable Diffusion's impressive image generation capabilities result from the interplay of these five key components. The forward and reverse processes work in tandem to learn the relationship between clean and noisy images. The noise schedule controls how noise is added and removed, while positional encoding provides essential timestep information. Finally, the neural network architecture ties everything together, learning to generate high-quality images from noise or text descriptions.

As research advances, we can expect further refinements in each component, potentially leading to even more impressive image generation capabilities. The future of AI-generated art and content looks brighter than ever, thanks to the solid foundation laid by Stable Diffusion and its key components.


Frequently Asked Questions

Ans. The forward process gradually adds noise to an image, while the reverse process removes noise to generate a high-quality image.

Ans. The noise schedule determines how noise is added and removed, significantly affecting the model's performance and the quality of generated images.

Ans. Positional encoding helps the model understand the current noise level and stage of the diffusion process, providing a unique representation for each timestep.

Ans. U-Net and Transformer architectures are commonly used as the backbone for Stable Diffusion models.

Ans. The reverse diffusion process iteratively removes noise from a noisy input, gradually reconstructing a high-quality image through multiple denoising steps.