In regression models, the independent variables should be only slightly dependent on each other or not at all, i.e. they should not be correlated. If such a dependency does exist, it is called multicollinearity, and it leads to unstable models and results that are difficult to interpret. The variance inflation factor (VIF) is a key metric for recognizing multicollinearity and indicates the extent to which correlation with other predictors increases the variance of a regression coefficient. A high value of this metric indicates a high correlation of the variable with other independent variables in the model.

In this article, we look in detail at multicollinearity and the VIF as a measurement tool. We show how the VIF can be interpreted and what measures can be taken to reduce it. We also compare the indicator with other methods for measuring multicollinearity.

What is Multicollinearity?

Multicollinearity is a phenomenon that occurs in regression analysis when two or more independent variables are strongly correlated with each other, so that a change in one variable goes hand in hand with a change in the other. As a result, the values of one independent variable can be predicted completely or at least partially from another variable. This makes it harder for a linear regression to determine the influence of an individual independent variable on the dependent variable.

A distinction can be made between two types of multicollinearity:

- Perfect Multicollinearity: A variable is an exact linear combination of one or more other variables, for example when two variables measure the same thing in different units, such as weight in kilograms and pounds.

- High Degree of Multicollinearity: Here, one variable is strongly, but not completely, explained by at least one other variable. For example, there is a high correlation between a person's education and their income, but it is not perfect multicollinearity.
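The kilogram and pound example of perfect multicollinearity can be made concrete with a small sketch. The weights below are made-up values, and NumPy is an assumption of this example. It shows that a design matrix containing both columns has fewer independent columns than it appears to have:

```python
import numpy as np

# Hypothetical body weights in kilograms; the pound column is an exact multiple.
weight_kg = np.array([55.0, 62.5, 70.0, 81.0, 90.5, 102.0])
weight_lb = weight_kg * 2.20462

# Design matrix with an intercept column and both weight columns.
X = np.column_stack([np.ones_like(weight_kg), weight_kg, weight_lb])

# Three columns are present, but the rank reveals how many are linearly independent.
rank = np.linalg.matrix_rank(X)
print(f"columns: {X.shape[1]}, rank: {rank}")
```

Because the rank is lower than the number of columns, the least squares problem has no unique solution, which is why one of the two columns has to be dropped in such a case.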

The occurrence of multicollinearity in regressions leads to serious problems: for example, the regression coefficients become unstable and react very strongly to new data, so that the overall prediction quality suffers. Various methods can be used to recognize multicollinearity, such as the correlation matrix or the variance inflation factor, which we will look at in more detail in the next section.

What is the Variance Inflation Factor (VIF)?

The variance inflation factor (VIF) is a diagnostic tool for regression models that helps to detect multicollinearity. It indicates the factor by which the variance of a coefficient increases due to correlation with other variables. A high VIF value indicates strong multicollinearity of the variable with other independent variables. This negatively influences the regression coefficient estimate and results in high standard errors. It is therefore important to calculate the VIF so that multicollinearity is recognized at an early stage and countermeasures can be taken. The VIF is calculated as follows:

\[ VIF = \frac{1}{1 - R^2} \]

Here \(R^2\) is the so-called coefficient of determination of the regression of feature \(i\) against all other independent variables. A high \(R^2\) value indicates that a large proportion of the variance of feature \(i\) can be explained by the other features, so that multicollinearity is suspected.

In a regression with the three independent variables \(X_1\), \(X_2\) and \(X_3\), for example, one would train a regression with \(X_1\) as the dependent variable and \(X_2\) and \(X_3\) as independent variables. With the help of this model, \(R_{1}^2\) could then be calculated and inserted into the formula for the VIF. This procedure would then be repeated for the remaining combinations of the three independent variables.
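The procedure described above can be sketched with plain NumPy. The helper name `vif_manual` and the simulated data are assumptions made for illustration; the sketch regresses each variable on the remaining ones (with an intercept), computes the coefficient of determination and inserts it into the VIF formula:

```python
import numpy as np

def vif_manual(X):
    """Return the VIF of every column of X via one auxiliary regression per column."""
    X = np.asarray(X, dtype=float)
    n_samples, n_features = X.shape
    vifs = np.empty(n_features)
    for i in range(n_features):
        y = X[:, i]                                   # feature i acts as the target
        others = np.delete(X, i, axis=1)              # all remaining features
        A = np.column_stack([np.ones(n_samples), others])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)  # ordinary least squares
        residuals = y - A @ coef
        ss_res = residuals @ residuals
        ss_tot = ((y - y.mean()) ** 2).sum()
        r_squared = 1.0 - ss_res / ss_tot
        vifs[i] = 1.0 / (1.0 - r_squared)
    return vifs

# Simulated data: x3 is almost the sum of x1 and x2.
rng = np.random.default_rng(0)
x1 = rng.normal(size=500)
x2 = rng.normal(size=500)
x3 = x1 + x2 + rng.normal(scale=0.1, size=500)

vifs = vif_manual(np.column_stack([x1, x2, x3]))
print(vifs)
```

Since `x3` is nearly determined by `x1` and `x2`, each of the three variables can be predicted well from the other two, so all three VIF values come out far above the usual thresholds.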

A typical threshold value is VIF > 10, which indicates strong multicollinearity. In the following section, we look in more detail at the interpretation of the variance inflation factor.

How can different Values of the Variance Inflation Factor be interpreted?

After calculating the VIF, it is important to be able to evaluate what the value says about the situation in the model and to deduce whether measures are necessary. The values can be interpreted as follows:

- VIF = 1: This value indicates that there is no multicollinearity between the analyzed variable and the other variables. This means that no further action is required.

- VIF between 1 and 5: If the value is in the range between 1 and 5, then there is multicollinearity between the variables, but it is not large enough to represent an actual problem. Rather, the dependency is still moderate enough that it can be absorbed by the model itself.

- VIF > 5: In such a case, there is already a high degree of multicollinearity, which calls for intervention. The standard error of the predictor is likely to be considerably inflated, so the regression coefficient may be unreliable. Consideration should be given to combining the correlated predictors into one variable.

- VIF > 10: With such a value, the variable has serious multicollinearity and the regression model is very likely to be unstable. In this case, consideration should be given to removing the variable to obtain a more stable model.

Overall, a high VIF value indicates that the variable may be redundant, as it is highly correlated with other variables. In such cases, measures should be taken to reduce multicollinearity.
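The bands listed above are rules of thumb, and they can be written down as a small helper for reports. The function name `interpret_vif` and the wording of the labels are hypothetical and only mirror the list in this section:

```python
import math

def interpret_vif(vif):
    """Map a VIF value to the rule-of-thumb categories described in this article."""
    if math.isclose(vif, 1.0, rel_tol=1e-9):
        return "no multicollinearity"
    if vif <= 5:
        return "moderate multicollinearity, usually unproblematic"
    if vif <= 10:
        return "high multicollinearity, intervention advisable"
    return "serious multicollinearity, consider removing the variable"

for value in (1.0, 3.2, 7.5, 42.0):
    print(value, "->", interpret_vif(value))
```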

What measures help to reduce the VIF?

There are various ways to circumvent the effects of multicollinearity and thus also reduce the variance inflation factor. The most popular measures include:

- Removing highly correlated variables: Especially with a high VIF value, removing individual variables with high multicollinearity is a good tool. This can improve the results of the regression, as redundant variables make the coefficient estimates more unstable.

- Principal component analysis (PCA): The core idea of principal component analysis is that several variables in a data set may measure the same thing, i.e. be correlated. This means that the various dimensions can be combined into fewer so-called principal components without compromising the informative value of the data set. Height, for example, is highly correlated with shoe size, as tall people often have larger shoes and vice versa. The correlated variables are combined into uncorrelated principal components, which reduces multicollinearity without losing much information. However, this is also accompanied by a loss of interpretability, as the principal components do not represent real characteristics, but a combination of different variables.

- Regularization Methods: Regularization comprises various techniques used in statistics and machine learning to control the complexity of a model. It helps the model to react robustly to new and unseen data and thus improves its generalizability. This is achieved by adding a penalty term to the model's optimization function to prevent the model from adapting too much to the training data. This approach reduces the influence of highly correlated variables and dampens the variance inflation that a high VIF signals. At the same time, the predictive accuracy of the model is usually hardly affected.
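The PCA measure from the list above can be illustrated with a minimal NumPy sketch. The height and shoe size values are simulated and purely hypothetical, and the PCA is done by hand via an eigendecomposition of the correlation matrix rather than with a dedicated library:

```python
import numpy as np

rng = np.random.default_rng(1)
height = rng.normal(175, 10, size=300)                   # hypothetical heights in cm
shoe_size = 0.25 * height + rng.normal(0, 1, size=300)   # hypothetical, tied to height

X = np.column_stack([height, shoe_size])
Z = (X - X.mean(axis=0)) / X.std(axis=0)                 # standardize both columns

corr = np.corrcoef(Z, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(corr)         # eigenvalues in ascending order
order = np.argsort(eigenvalues)[::-1]                    # largest component first
components = Z @ eigenvectors[:, order]                  # principal component scores

component_corr = np.corrcoef(components, rowvar=False)
explained = eigenvalues[order] / eigenvalues.sum()
print("correlation of original variables:", corr[0, 1])
print("correlation of components:", component_corr[0, 1])
print("share of variance per component:", explained)
```

The two components are uncorrelated by construction, so using them (or only the first one) as predictors avoids the multicollinearity of the original columns, at the cost of interpretability mentioned above.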

These methods can be used to effectively reduce the VIF and combat multicollinearity in a regression. This makes the results of the model more stable and the standard error can be better controlled.
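To illustrate the stabilizing effect of regularization, the following sketch compares ordinary least squares with ridge regression (an L2 penalty, chosen here as one example of a regularization method) on repeatedly simulated, strongly collinear data. The helper `fit_linear`, the penalty value of 1.0 and the data are assumptions of this example; no intercept is fitted because the simulated variables have mean zero:

```python
import numpy as np

def fit_linear(X, y, penalty=0.0):
    """Least squares with an optional ridge (L2) penalty; penalty=0 gives plain OLS."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + penalty * np.eye(n_features), X.T @ y)

rng = np.random.default_rng(42)
ols_coefs, ridge_coefs = [], []
for _ in range(200):
    x1 = rng.normal(size=100)
    x2 = x1 + rng.normal(scale=0.05, size=100)   # x2 is almost a copy of x1
    X = np.column_stack([x1, x2])
    y = x1 + x2 + rng.normal(size=100)
    ols_coefs.append(fit_linear(X, y))
    ridge_coefs.append(fit_linear(X, y, penalty=1.0))

# Spread of each coefficient across the 200 simulated data sets.
ols_spread = np.std(ols_coefs, axis=0)
ridge_spread = np.std(ridge_coefs, axis=0)
print("OLS spread:  ", ols_spread)
print("Ridge spread:", ridge_spread)
```

Note that the VIF itself depends only on the independent variables, so the penalty does not change the computed VIF values. What it reduces is the variance of the coefficient estimates that a high VIF warns about.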

How does the VIF compare to other methods?

The variance inflation factor is a widely used method for measuring multicollinearity in a data set. However, other methods can offer specific advantages and disadvantages compared to the VIF, depending on the application.

Correlation Matrix

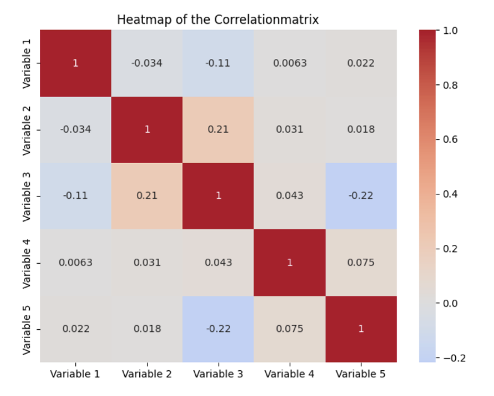

The correlation matrix is a statistical method for quantifying and comparing the relationships between different variables in a data set. The pairwise correlations between all combinations of two variables are shown in a tabular structure. Each cell in the matrix contains the so-called correlation coefficient between the two variables defined in the column and the row.

This value lies between -1 and 1 and provides information on how the two variables relate to each other. A positive value indicates a positive correlation, meaning that an increase in one variable goes along with an increase in the other variable. The exact value of the correlation coefficient provides information on how strongly the variables move together. With a negative correlation coefficient, the variables move in opposite directions, meaning that an increase in one variable goes along with a decrease in the other variable. Finally, a coefficient of 0 indicates that there is no linear correlation.

A correlation matrix therefore serves the purpose of presenting the correlations in a data set in a quick and easy-to-understand way and thus forms the basis for subsequent steps, such as model selection. This makes it possible, for example, to recognize multicollinearity, which can cause problems with regression models, as the parameters to be learned are distorted.

Compared to the VIF, the correlation matrix only offers a surface-level analysis of the correlations between variables. The biggest difference is that the correlation matrix only shows the pairwise comparisons between variables and not the simultaneous effects between several variables. In addition, the VIF is more useful for quantifying exactly how much multicollinearity affects the estimate of the coefficients.
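The difference between pairwise and simultaneous dependencies can be shown with simulated data in which one variable is almost the sum of three others. The data are hypothetical; the sketch uses the identity that the VIF values equal the diagonal entries of the inverse correlation matrix:

```python
import numpy as np

rng = np.random.default_rng(7)
x1, x2, x3 = rng.normal(size=(3, 2000))
x4 = x1 + x2 + x3 + rng.normal(scale=0.1, size=2000)   # depends on all three jointly

data = np.column_stack([x1, x2, x3, x4])
corr = np.corrcoef(data, rowvar=False)

# Largest pairwise correlation (ignoring the diagonal of ones).
off_diagonal = corr[~np.eye(4, dtype=bool)]
max_pairwise = np.abs(off_diagonal).max()

# VIF of each variable as the diagonal of the inverse correlation matrix.
vif = np.diag(np.linalg.inv(corr))

print("largest pairwise correlation:", max_pairwise)
print("VIF values:", vif)
```

No single pair of variables looks alarming in the correlation matrix, yet the VIF values are very high because `x4` is almost fully explained by the other three variables together.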

Eigenvalue Decomposition

Eigenvalue decomposition is a method that builds on the correlation matrix and mathematically helps to identify multicollinearity. Either the correlation matrix or the covariance matrix can be used. In general, small eigenvalues indicate a stronger linear dependency between the variables and are therefore a sign of multicollinearity.

Compared to the VIF, the eigenvalue decomposition offers a deeper mathematical analysis and can in some cases also help to detect multicollinearity that would have remained hidden from the VIF. However, this method is much more complex and difficult to interpret.
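A minimal sketch of this approach, assuming NumPy and simulated data, computes the eigenvalues of the correlation matrix and, as one common summary, the square root of the ratio between the largest and smallest eigenvalue (often called the condition number):

```python
import numpy as np

rng = np.random.default_rng(3)
x1 = rng.normal(size=1000)
x2 = rng.normal(size=1000)
x3 = x1 + x2 + rng.normal(scale=0.1, size=1000)   # nearly a linear combination

corr = np.corrcoef(np.column_stack([x1, x2, x3]), rowvar=False)

# eigvalsh is intended for symmetric matrices and returns ascending eigenvalues.
eigenvalues = np.linalg.eigvalsh(corr)
condition_number = np.sqrt(eigenvalues[-1] / eigenvalues[0])

print("eigenvalues:", eigenvalues)
print("condition number:", condition_number)
```

An eigenvalue close to zero means that some linear combination of the standardized variables has almost no variance, which is exactly the near-dependency that multicollinearity describes.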

The VIF is a simple and easy-to-understand method for detecting multicollinearity. Compared to other methods, it performs well because it allows a precise and direct analysis at the level of the individual variables.

How to detect Multicollinearity in Python?

Recognizing multicollinearity is a crucial step in data preprocessing in machine learning to train a model that is as meaningful and robust as possible. In this section, we therefore take a closer look at how the VIF can be calculated in Python and how the correlation matrix is created.

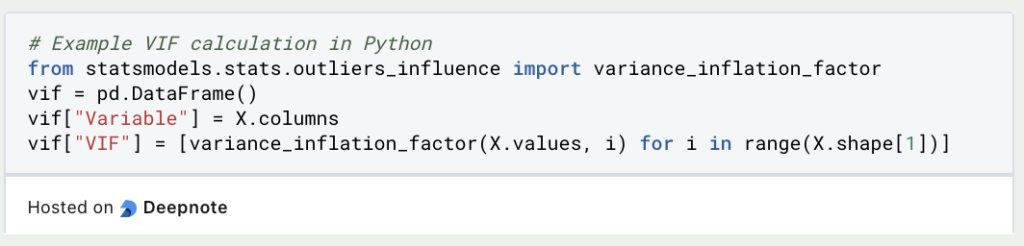

Calculating the Variance Inflation Factor in Python

The variance inflation factor can be easily imported and used in Python via the statsmodels library. Assuming we already have a Pandas DataFrame in a variable X that contains the independent variables, we can simply create a new, empty DataFrame for calculating the VIFs. The variable names and values are then stored in this frame.

A new row is created for each independent variable in X in the Variable column. We then iterate through all variables in the data set, calculate the variance inflation factor for each of them and collect the results in a list. This list is then stored as the column VIF in the DataFrame.

Calculating the Correlation Matrix

In Python, a correlation matrix can be easily calculated using Pandas and then visualized as a heatmap using Seaborn. To illustrate this, we generate random data using NumPy and store it in a DataFrame. As soon as the data is stored in a DataFrame, the correlation matrix can be created using the corr() function.

If no parameters are defined within the function, the Pearson coefficient is used by default to calculate the correlation matrix. Otherwise, you can also define a different correlation coefficient using the method parameter.

Finally, the heatmap is visualized using Seaborn. To do this, the heatmap() function is called and the correlation matrix is passed. Among other things, the parameters can be used to determine whether the labels should be added, and the color palette can be specified. The diagram is then displayed with the help of Matplotlib.
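A minimal sketch of these steps could look as follows. The column names, the sample size and the chosen color palette are assumptions made for illustration:

```python
import numpy as np
import pandas as pd
import seaborn as sns
import matplotlib.pyplot as plt

# Random example data stored in a DataFrame.
rng = np.random.default_rng(0)
df = pd.DataFrame(rng.normal(size=(100, 4)), columns=["A", "B", "C", "D"])

# Pearson correlation by default; e.g. method="spearman" selects another coefficient.
corr_matrix = df.corr()

# annot=True writes the coefficients into the cells, cmap sets the color palette.
sns.heatmap(corr_matrix, annot=True, cmap="coolwarm", vmin=-1, vmax=1)
plt.title("Correlation matrix")
plt.show()
```

Because the columns are generated independently here, the off-diagonal cells should be close to zero; with real data, cells close to -1 or 1 point to pairs of variables that deserve a closer look.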

This is what you should take with you

- The variance inflation factor is a key indicator for recognizing multicollinearity in a regression model.

- The coefficient of determination of the independent variables is used for the calculation. This captures not only the correlation between two variables, but also combinations of variables.

- In general, action should be taken if the VIF is greater than 5, and appropriate measures should be introduced. For example, the affected variables can be removed from the data set or a principal component analysis can be carried out.

- In Python, the VIF can be calculated directly using statsmodels. To do this, the data must be stored in a DataFrame. The correlation matrix can also be calculated using Pandas and visualized with Seaborn to detect multicollinearity.